Faster Diffusion on Blackwell: MXFP8 and NVFP4 with Diffusers and TorchAO

8 Apr 2026, 4:40 pm

Diffusion models for image and video generation have surged in popularity, delivering highly realistic visual media. However, their adoption is often constrained by their substantial memory and compute requirements, which makes quantization essential for serving these models efficiently.

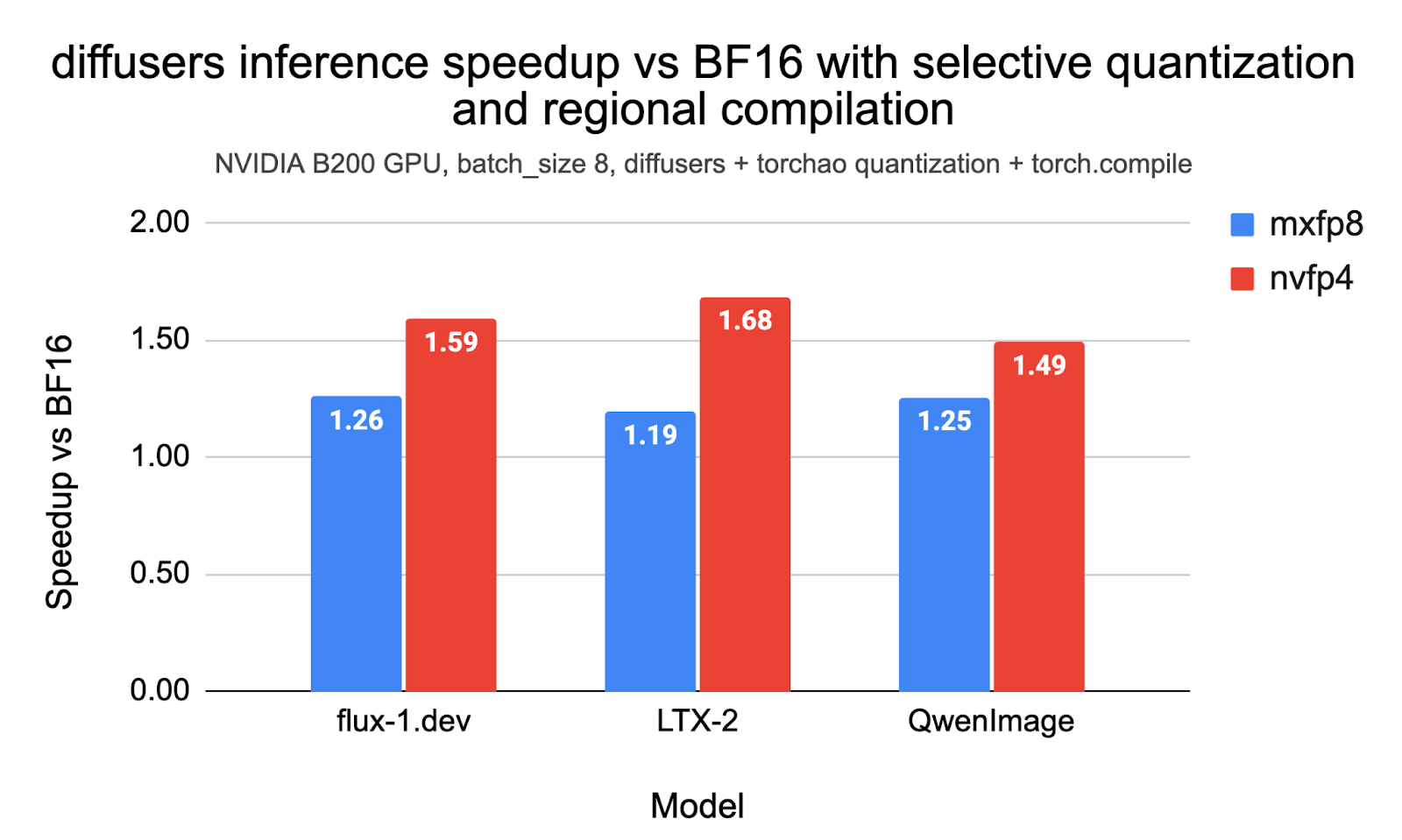

In this post, we demonstrate reproducible end-to-end inference speedups of up to 1.26x with MXFP8 and 1.68x with NVFP4, using Diffusers and TorchAO on the Flux.1-Dev, QwenImage, and LTX-2 models on NVIDIA B200. We also outline how we used selective quantization, CUDA Graphs, and the LPIPS metric to iterate on the accuracy and performance of these models. The code to reproduce the experiments in this post is here.

Table of contents:


- Background on MXFP8 and NVFP4

- Basic Usage with Diffusers and TorchAO

- Benchmark Results

- Technical Considerations

Background on MXFP8 and NVFP4

MXFP8 and NVFP4 are microscaling formats supported natively by NVIDIA’s Blackwell architecture (e.g., B200 GPUs). Per-tensor quantization uses a single scale factor for an entire tensor. Microscaling instead groups elements into small blocks (16 or 32 values), and each block shares its own scale factor. Because each block is scaled independently, an outlier only reduces the precision of its own block. This allows significantly lower bit-depths while preserving dynamic range and accuracy.
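The NumPy sketch below makes the block idea concrete. It performs "fake" FP4 quantization (quantize, then immediately dequantize) with one scale per block, using the E2M1 value grid that NVFP4 uses (described below). It is a simplification, not TorchAO's kernel:

- the per-block scale stays in float32 instead of FP8 (E4M3);
- there is no second-level per-tensor scale;
- ties round toward the smaller magnitude rather than following hardware rounding.

`fake_quantize_fp4` is a hypothetical helper name.

```python
import numpy as np

# Non-negative magnitudes representable in FP4 E2M1
E2M1_GRID = np.array([0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0], dtype=np.float32)


def fake_quantize_fp4(x, block_size=16):
    """Quantize-dequantize x to the E2M1 grid with one float32 scale per block."""
    x = np.asarray(x, dtype=np.float32)
    if x.size % block_size:
        raise ValueError("x.size must be a multiple of block_size")
    blocks = x.reshape(-1, block_size)
    # One scale per block maps the block's largest magnitude onto 6.0 (the E2M1 maximum)
    amax = np.abs(blocks).max(axis=1, keepdims=True)
    scale = np.where(amax > 0, amax / E2M1_GRID[-1], 1.0)
    scaled = np.abs(blocks) / scale
    # Round each scaled magnitude to the nearest grid value (argmin picks the smaller on ties)
    idx = np.abs(scaled[..., None] - E2M1_GRID).argmin(axis=-1)
    dequantized = np.sign(blocks) * E2M1_GRID[idx] * scale
    return dequantized.reshape(x.shape)


if __name__ == "__main__":
    # Half small values, half large values: block_size=32 acts like a single per-tensor scale here
    x = np.concatenate([np.full(16, 0.1), np.full(16, 100.0)]).astype(np.float32)
    for bs in (32, 16):
        err = np.abs(fake_quantize_fp4(x, block_size=bs) - x).max()
        print(f"block_size={bs}: max abs error {err:.4f}")
```

With a single scale, the small values collapse to zero because the large values dictate the scale. With blocks of 16, each half gets a scale suited to its own range.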

- MXFP8 (OCP Microscaling FP8): An 8-bit industry-standard format (E4M3/E5M2) from the Open Compute Project (OCP). It uses a block size of 32 with 8-bit (E8M0, power-of-two) scale factors. It provides a “sweet spot” balance, delivering faster inference than BF16 with virtually no loss in visual quality (lower LPIPS), and often achieves the lowest latency at smaller batch sizes.

- NVFP4 (NVIDIA FP4): A 4-bit floating-point format (E2M1) accelerated natively by Blackwell Tensor Cores. It uses a block size of 16 with FP8 (E4M3) scale factors. It offers the highest theoretical throughput and lowest memory footprint (approx. 3.5x smaller than BF16), making it well suited to high-batch, compute-bound workloads.
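The "approx. 3.5x smaller" figure follows from simple bit accounting. Each element's own bits are added to its share of the block scale. The sketch below ignores NVFP4's single per-tensor scale, which is negligible for large tensors, and `bits_per_element` is a hypothetical helper:

```python
def bits_per_element(element_bits, scale_bits, block_size):
    """Average storage bits per element when every block of values shares one scale."""
    return element_bits + scale_bits / block_size


BF16_BITS = 16
MXFP8_BITS = bits_per_element(element_bits=8, scale_bits=8, block_size=32)  # 8.25 bits
NVFP4_BITS = bits_per_element(element_bits=4, scale_bits=8, block_size=16)  # 4.5 bits

print(f"MXFP8: {BF16_BITS / MXFP8_BITS:.2f}x smaller than BF16")  # 1.94x
print(f"NVFP4: {BF16_BITS / NVFP4_BITS:.2f}x smaller than BF16")  # 3.56x
```

These ratios apply only to the quantized weights. Measured peak memory shrinks less (for example, 38.34 GB to 21.33 GB for FLUX.1-dev at batch size 1 with NVFP4, as the table later in this post shows). That is because only the transformer's linear layers are quantized, and other components and most intermediate activations stay in BF16.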

Refer to this post to learn more.

Basic Usage with Diffusers and TorchAO

Prerequisites

NVFP4 requires a GPU with CUDA compute capability 10.0 or higher, so make sure your GPU qualifies. The benchmarks presented in this document were run on a B200 machine (B200 DGX).
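You can check this requirement from Python with the sketch below. `meets_min_capability` is a hypothetical helper. `torch.cuda.get_device_capability()` is the standard PyTorch call that returns the `(major, minor)` pair for the current device:

```python
def meets_min_capability(capability, minimum=(10, 0)):
    """Compare a (major, minor) compute capability against a minimum, e.g. (10, 0) for NVFP4."""
    return tuple(capability) >= tuple(minimum)


if __name__ == "__main__":
    try:
        import torch
    except ImportError:
        torch = None
    if torch is None or not torch.cuda.is_available():
        print("PyTorch with CUDA is not available")
    else:
        major, minor = torch.cuda.get_device_capability()
        print(f"{torch.cuda.get_device_name()}: compute capability {major}.{minor}, "
              f"meets the 10.0 minimum: {meets_min_capability((major, minor))}")
```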

For the virtual environment, you can use conda:

conda create -n nvfp4 python=3.11 -y
conda activate nvfp4
pip install --pre torch --index-url https://download.pytorch.org/whl/nightly/cu130
pip install --pre torchao --index-url https://download.pytorch.org/whl/nightly/cu130
pip install --pre mslk --index-url https://download.pytorch.org/whl/nightly/cu130
pip install diffusers transformers accelerate sentencepiece protobuf av imageio-ffmpeg

At the time of writing, the nightlies were 2.12.0.dev20260315+cu130, 0.17.0.dev20260316+cu130, and 2026.3.15+cu130 for PyTorch, TorchAO, and MSLK, respectively.
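To compare your environment against the nightly versions listed above, you can query the installed package metadata with the standard library. This is a small sketch: `installed_versions` is a hypothetical helper, and the default package list is our assumption.

```python
from importlib.metadata import PackageNotFoundError, version


def installed_versions(packages=("torch", "torchao", "mslk", "diffusers")):
    """Map each distribution name to its installed version string, or None if it is absent."""
    found = {}
    for name in packages:
        try:
            found[name] = version(name)
        except PackageNotFoundError:
            found[name] = None
    return found


if __name__ == "__main__":
    for name, ver in installed_versions().items():
        print(f"{name}: {ver or 'not installed'}")
```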

Some models are gated and require you to be authenticated on the Hugging Face Hub, so make sure to run hf auth login before running the examples if you haven't already.

Basic Usage

Using the NVFP4 quantization config from TorchAO is straightforward with its native integration in Diffusers:

from diffusers import DiffusionPipeline, TorchAoConfig, PipelineQuantizationConfig
import torch
from torchao.prototype.mx_formats.inference_workflow import (
    NVFP4DynamicActivationNVFP4WeightConfig,
)

# NVFP4 for both weights and activations (activations are quantized dynamically at runtime)
config = NVFP4DynamicActivationNVFP4WeightConfig(
    use_dynamic_per_tensor_scale=True, use_triton_kernel=True,
)
# Quantize only the transformer (the denoiser); the other pipeline components stay in bfloat16
pipe_quant_config = PipelineQuantizationConfig(
    quant_mapping={"transformer": TorchAoConfig(config)}
)
pipe = DiffusionPipeline.from_pretrained(
    "black-forest-labs/FLUX.1-dev",
    torch_dtype=torch.bfloat16,
    quantization_config=pipe_quant_config
).to("cuda")
# Regional compilation: compile the repeated transformer block instead of the whole model
pipe.transformer.compile_repeated_blocks(fullgraph=True)

pipe_call_kwargs = {
    "prompt": "A cat holding a sign that says hello world",
    "height": 1024,
    "width": 1024,
    "guidance_scale": 3.5,
    "num_inference_steps": 28,
    "max_sequence_length": 512,
    "num_images_per_prompt": 1,
    "generator": torch.manual_seed(0),
}
result = pipe(**pipe_call_kwargs)
image = result.images[0]
image.save("my_image.png")

The code snippet above quantizes every torch.nn.Linear layer of the pipeline's transformer.

For this post, we always use regional compilation with fullgraph=True, as it significantly reduces compilation time and yields results almost as good as full-model compilation. Learn more about regional compilation here.

Recipe Selection

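The code snippet below shows how to configure MXFP8 and NVFP4 inference with TorchAO:

import torch
# Import paths as in recent torchao nightlies; prototype APIs may move between releases
from torchao.prototype.mx_formats.inference_workflow import (
    MXDynamicActivationMXWeightConfig,
    NVFP4DynamicActivationNVFP4WeightConfig,
)
from torchao.quantization.quantize_.common import KernelPreference

# MXFP8: FP8 (E4M3) activations and weights
quant_config = MXDynamicActivationMXWeightConfig(
    activation_dtype=torch.float8_e4m3fn,
    weight_dtype=torch.float8_e4m3fn,
    kernel_preference=KernelPreference.AUTO,
)

# NVFP4: FP4 (E2M1) activations and weights
quant_config = NVFP4DynamicActivationNVFP4WeightConfig(
    use_dynamic_per_tensor_scale=True,
    use_triton_kernel=True,
)

Benchmark Results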

Flux.1-Dev

The following inference parameters were used when benchmarking FLUX.1-dev:

{
    "prompt": "A cat holding a sign that says hello world",
    "height": 1024,
    "width": 1024,
    "guidance_scale": 3.5,
    "num_inference_steps": 28,
    "max_sequence_length": 512,
}

Performance and Peak Memory

First, we present latency and peak memory consumption across batch sizes, with speedups of up to 1.26x with MXFP8 and up to 1.59x with NVFP4. Note that these results use selective quantization, in which certain layers are excluded from quantization. We discuss selective quantization in more detail later in this post.

Flux-1.dev performance and peak memory with MXFP8 and NVFP4 quantization

| Quant Mode | Batch Size | Latency (s) | Peak Memory (GB) | Speedup vs BF16 |
|---|---|---|---|---|
| None | 1 | 2.10 | 38.34 | 1.00 |
| MXFP8 | 1 | 1.75 | 26.90 | 1.21 |
| NVFP4 | 1 | 1.41 | 21.33 | 1.50 |
| None | 4 | 7.87 | 44.39 | 1.00 |
| MXFP8 | 4 | 6.36 | 32.95 | 1.24 |
| NVFP4 | 4 | 5.09 | 27.39 | 1.55 |
| None | 8 | 15.57 | 53.00 | 1.00 |
| MXFP8 | 8 | 12.40 | 41.56 | 1.26 |
| NVFP4 | 8 | 9.81 | 36.00 | 1.59 |

NVIDIA B200, selective quantization, torch.compile with regional compilation; batch_size=1 uses torch.compile(..., mode='reduce-overhead'). Quant Mode “None” means no quantization.
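The post does not show its timing harness. As a hedged illustration of how latency numbers like these are commonly collected, here is a minimal timing helper. `benchmark` and `speedup` are hypothetical names, and this is not the authors' benchmark code.

The two key details are:

- warmup calls, which absorb one-time costs such as torch.compile compilation and CUDA graph capture;
- synchronizing around the timed region, because CUDA kernels launch asynchronously.

```python
import statistics
import time


def benchmark(fn, warmup=2, iters=5, sync=None):
    """Return the median wall-clock latency of fn() in seconds.

    Pass sync=torch.cuda.synchronize when timing GPU work so that the
    measurement covers the kernels, not just their asynchronous launch.
    """
    for _ in range(warmup):
        fn()  # absorbs compilation / graph-capture costs
    times = []
    for _ in range(iters):
        if sync is not None:
            sync()  # drain pending GPU work before starting the clock
        start = time.perf_counter()
        fn()
        if sync is not None:
            sync()  # wait for this call's GPU work to finish
        times.append(time.perf_counter() - start)
    return statistics.median(times)


def speedup(baseline_s, candidate_s):
    """Speedup of a candidate over a baseline, as in the tables: baseline / candidate."""
    return baseline_s / candidate_s


if __name__ == "__main__":
    # FLUX.1-dev, batch size 8: BF16 vs NVFP4 latencies from the table above
    print(f"{speedup(15.57, 9.81):.2f}x")  # prints 1.59x
```

With the pipeline from Basic Usage, a measurement could look like `benchmark(lambda: pipe(**pipe_call_kwargs), sync=torch.cuda.synchronize)`. Peak memory can be read by calling `torch.cuda.reset_peak_memory_stats()` before the run and `torch.cuda.max_memory_allocated()` after it. We don't know whether that is exactly how the numbers in this post were collected.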

Accuracy

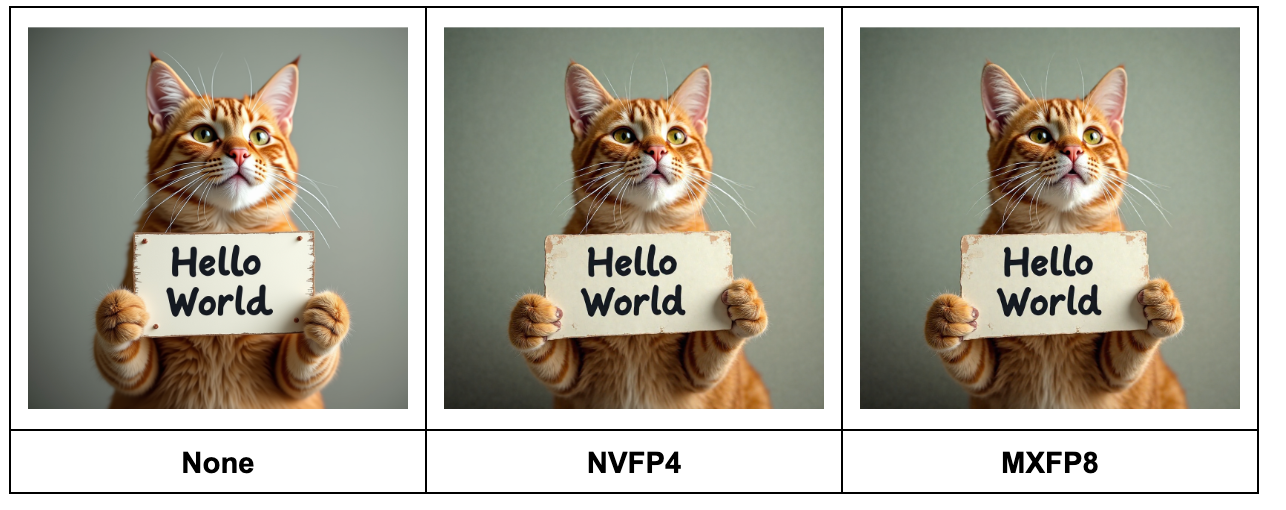

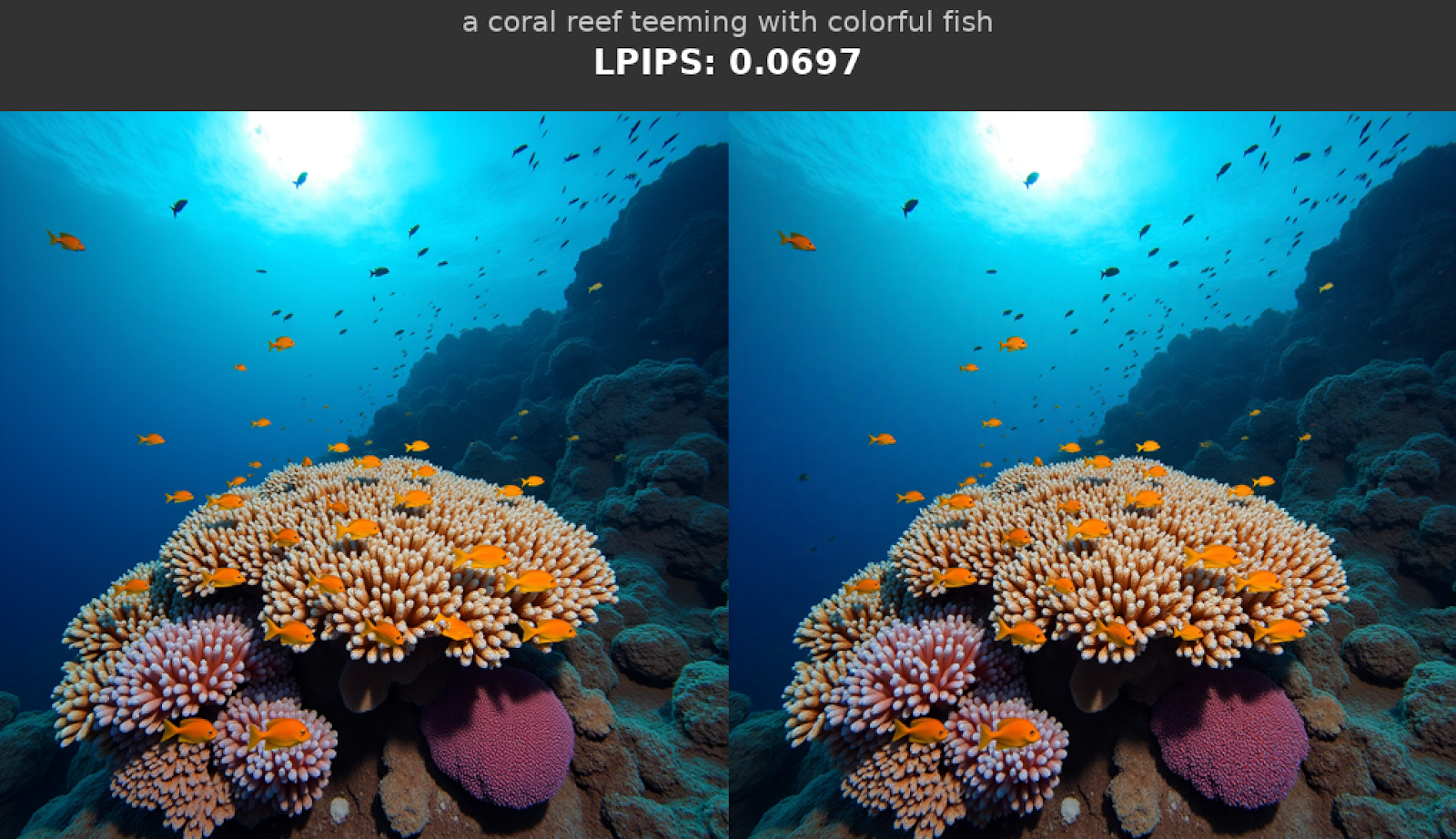

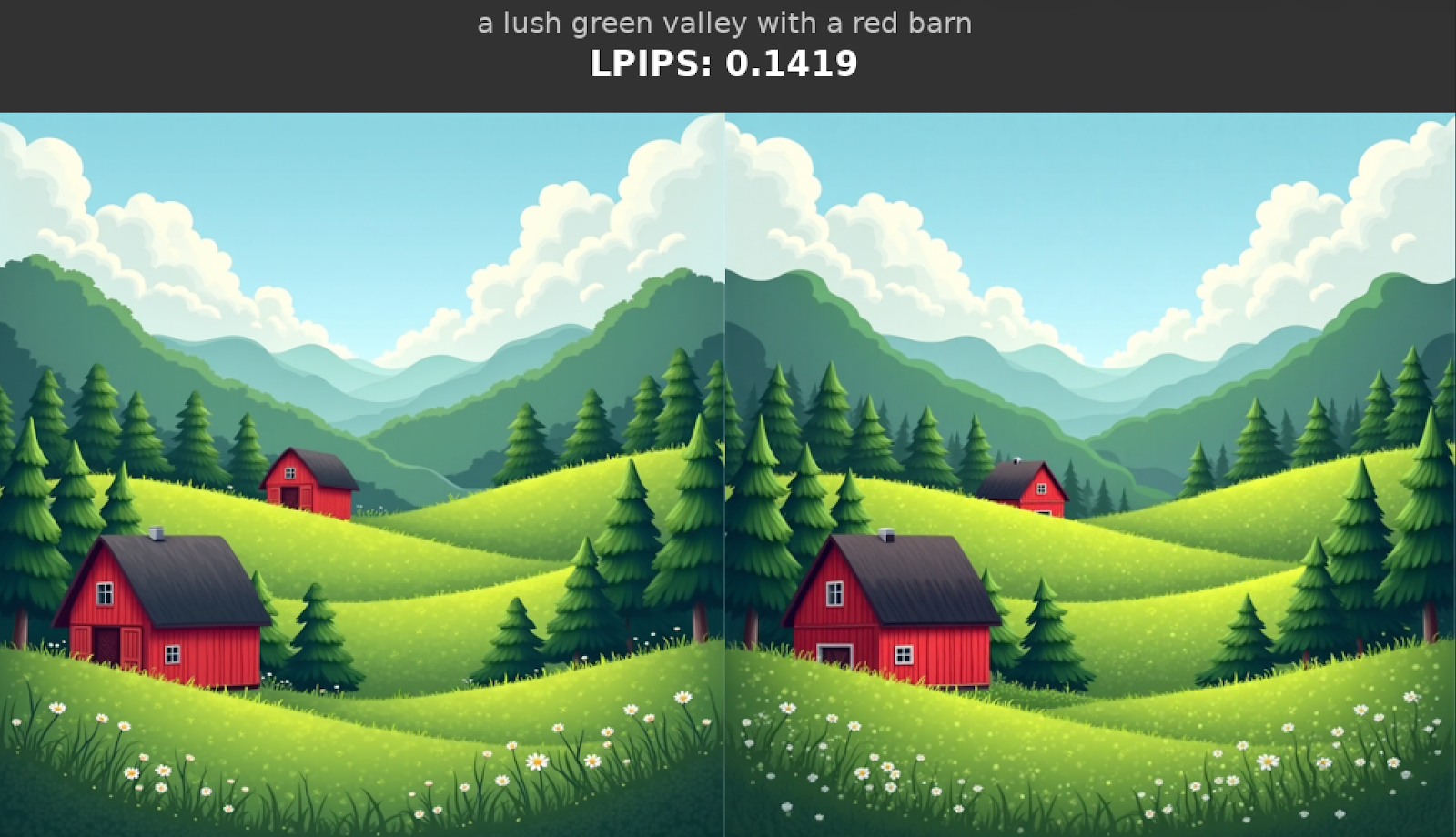

The MXFP8 and NVFP4 images generated for a test prompt are close to the bfloat16 baseline:

For a more thorough accuracy evaluation, we computed the LPIPS score between the bfloat16 images (baseline) and the MXFP8 or NVFP4 images (experiment), averaged over the prompts in the Drawbench dataset:

Flux-1.dev mean LPIPS score with MXFP8 and NVFP4 quantization

| Quant Mode | Mean LPIPS on Drawbench |
|---|---|
| None | 0 |
| MXFP8 | 0.11 |
| NVFP4 | 0.44 |

NVIDIA B200, selective quantization, torch.compile with regional compilation.

An LPIPS score of zero means “identical images”, and lower LPIPS scores correspond to higher perceptual similarity. The code we used to compute the mean LPIPS score is here. Please see the LPIPS section further in this post for more details on accuracy evaluations with LPIPS.

LTX-2

For LTX-2, we enabled tiling on the VAE to keep the memory requirements manageable. The following inference-time parameters were used to obtain the results:

{
    "prompt": (
        "INT. HOME OFFICE - DAY. Soft natural daylight lights a desk with an open laptop. The camera holds a steady medium shot. A small real house cat sits naturally on all fours in front of the laptop, much smaller than the desk and computer. The cat looks at the screen curiously. Suddenly, with a soft magical sparkle effect, a pair of tiny reading glasses appears in midair and gently lands on the cat's face. A faint whimsical chime sound plays. The cat pauses for a split second, then begins pressing the keyboard clumsily with one paw, producing rapid typing sounds. The laptop screen glow reflects softly on the cat's fur while light playful music continues."
    ),
    "negative_prompt": "worst quality, inconsistent motion, blurry, jittery, distorted",
    "width": 768,
    "height": 512,
    "num_frames": 121,
    "frame_rate": 24.0,
    "num_inference_steps": 40,
    "guidance_scale": 4.0,
}

Performance and Peak Memory

LTX-2 performance and peak memory with MXFP8 and NVFP4 quantization

| Quant Mode | Batch Size | Latency (s) | Peak Memory (GB) | Speedup vs BF16 |
|---|---|---|---|---|
| None | 1 | 16.230 | 72.77 | 1.00 |
| MXFP8 | 1 | 13.724 | 54.54 | 1.18 |
| NVFP4 | 1 | 10.374 | 45.72 | 1.56 |
| None | 4 | 61.591 | 87.61 | 1.00 |
| MXFP8 | 4 | 50.956 | 69.38 | 1.21 |
| NVFP4 | 4 | 36.963 | 60.56 | 1.67 |
| None | 8 | 122.427 | 107.40 | 1.00 |
| MXFP8 | 8 | 102.546 | 89.18 | 1.19 |
| NVFP4 | 8 | 72.689 | 80.36 | 1.68 |

NVIDIA B200, selective quantization, torch.compile with regional compilation. Quant Mode “None” means no quantization.

Accuracy

Check out this link for a comparison of the video results on a test prompt. Calculating eval scores over a prompt dataset (like we did for Flux-1.dev) is left for a future study.

QwenImage

The following inference-time parameters were used to obtain the results:

{
    "prompt": "A cat holding a sign that says hello world",
    "negative_prompt": " ",
    "height": 1024,
    "width": 1024,
    "true_cfg_scale": 4.0,
    "num_inference_steps": 50,
}

Performance and Peak Memory

QwenImage performance and peak memory with MXFP8 and NVFP4 quantization

| Quant Mode | Batch Size | Latency (s) | Peak Memory (GB) | Speedup vs BF16 |
|---|---|---|---|---|
| None | 1 | 7.454 | 62.21 | 1.00 |
| MXFP8 | 1 | 6.430 | 55.65 | 1.16 |
| NVFP4 | 1 | 5.369 | 52.45 | 1.39 |
| None | 4 | 26.779 | 75.52 | 1.00 |
| MXFP8 | 4 | 21.835 | 68.97 | 1.23 |
| NVFP4 | 4 | 18.279 | 65.76 | 1.47 |
| None | 8 | 52.095 | 92.47 | 1.00 |
| MXFP8 | 8 | 41.569 | 85.91 | 1.25 |
| NVFP4 | 8 | 34.969 | 82.70 | 1.49 |

NVIDIA B200, selective quantization, torch.compile with regional compilation, batch_size=1 uses torch.compile(..., mode='reduce-overhead'). Quant Mode “None” means no quantization.

Accuracy

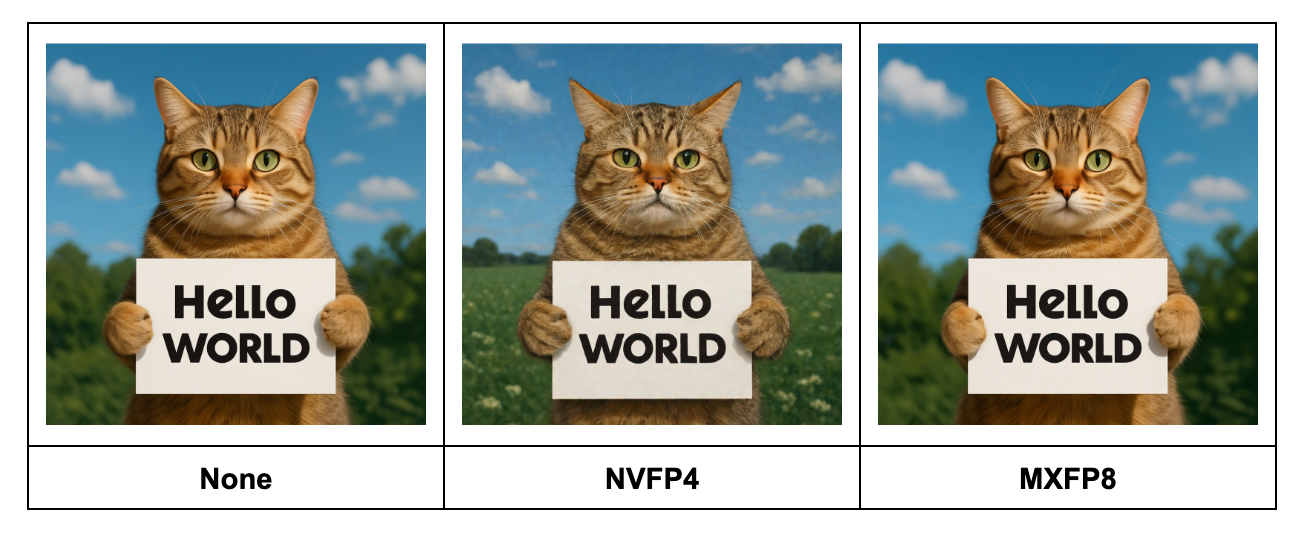

The MXFP8 and NVFP4 images generated for a test prompt are close to the bfloat16 baseline, with NVFP4 showing slightly larger differences vs MXFP8:

The following table reports mean LPIPS scores, computed the same way as for FLUX.1-dev.

QwenImage mean LPIPS score with MXFP8 and NVFP4 quantization

| Quant Mode | Mean LPIPS on Drawbench |
|---|---|
| None | 0 |
| MXFP8 | 0.34 |
| NVFP4 | 0.41 |

Note: In our experiments, we found QwenImage to be more sensitive to quantization than FLUX.1-dev, as evidenced by its higher mean MXFP8 LPIPS score of 0.34 (compared to 0.11 for MXFP8 on FLUX.1-dev). Reducing the mean LPIPS score for QwenImage further via more aggressive selective quantization or more advanced numerical algorithms (GPTQ, QAT, etc.) is left for a future study.

Technical Considerations

In this section, we share how we used selective quantization, CUDA Graphs, and LPIPS to iterate on the performance and accuracy metrics presented in this post.

Optimizing Accuracy and Performance with Selective Quantization

We used selective quantization to optimize for latency (all models) and LPIPS (Flux-1.dev), skipping layers based on two simple heuristics:

- If the weight or activation shape of a torch.nn.Linear is too small to benefit from quantization, skip it. For an M x K activation and a K x N weight, "too small" means min(M, K, N) < 1024. This ensures that the speedup from quantizing the matrix multiply is larger than the additional overhead of quantizing the activation (more context: here).

  - A tutorial for how to find the weight and activation shapes in your model using torchao tooling is here. Note that even if the weight is large, a small activation shape could make quantization unprofitable.

- If the layer is likely to contribute meaningfully to model accuracy (such as embeddings and normalization layers), skip it.

  - To apply this to your model, you can print out the model (print(model)) and inspect the fully qualified names (FQNs) manually. Then skip the FQNs you suspect could be impacting accuracy, based on your knowledge of the model architecture.
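The two heuristics above can be expressed as a predicate over a layer's name and shapes. The sketch below is illustrative only:

- `should_quantize`, `make_filter_fn`, and the skip substrings are hypothetical, and are not the exact per-model lists used in this post.
- M (the number of activation rows) depends on batch size and resolution, so it must be supplied for a representative shape.

TorchAO's `quantize_(model, config, filter_fn=...)` accepts a `(module, fqn) -> bool` callable like the one built here. We have not verified how such a filter is best threaded through Diffusers' `TorchAoConfig`.

```python
# Illustrative skip patterns only; NOT the exact lists used in the post
DEFAULT_SKIP_SUBSTRINGS = ("embed", "norm")


def should_quantize(fqn, in_features, out_features, num_tokens=None,
                    min_dim=1024, skip_substrings=DEFAULT_SKIP_SUBSTRINGS):
    """Apply both heuristics to a linear layer with an M x K activation and a K x N weight.

    K = in_features, N = out_features, M = num_tokens (rows of the flattened
    activation) when known for a representative input shape.
    """
    # Heuristic 2: skip layers that are likely to be accuracy-sensitive
    if any(s in fqn for s in skip_substrings):
        return False
    # Heuristic 1: skip layers too small to amortize the activation-quantization overhead
    dims = [in_features, out_features]
    if num_tokens is not None:
        dims.append(num_tokens)
    return min(dims) >= min_dim


def make_filter_fn(**kwargs):
    """Wrap should_quantize as a (module, fqn) -> bool filter for linear-like modules."""
    def filter_fn(module, fqn):
        if not (hasattr(module, "in_features") and hasattr(module, "out_features")):
            return False  # not a linear layer
        return should_quantize(fqn, module.in_features, module.out_features, **kwargs)
    return filter_fn
```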

The exact heuristics we used for each model are:

To quantify the impact of selective quantization, we measure latency, peak memory, and mean LPIPS (using AlexNet) between images generated in pure bfloat16 and images generated with MXFP8 and NVFP4.

Impact of full vs selective quantization on Flux-1.dev

| Quant Mode | Mean LPIPS | Latency (s) | Peak Memory (GB) |
|---|---|---|---|
| MXFP8 + full quantization | 0.138128 | 1.774 | 26.84 |
| MXFP8 + selective quantization | 0.107562 | 1.746 | 26.90 |
| NVFP4 + full quantization | 0.479679 | 2.112 | 21.25 |
| NVFP4 + selective quantization | 0.438337 | 2.076 | 21.33 |

(Lower LPIPS is better; an LPIPS score of about 0.1 usually means the images are nearly indistinguishable. The LPIPS computation code is available here.)

As the results above show, excluding certain layers from quantization (aka “selective quantization”) provides the best trade-off between latency, peak memory consumption, and LPIPS. Therefore, we use selective quantization for the other two models reported in this post.

We used simple heuristics to find our selective quantization recipes. There are more advanced approaches for selective quantization, such as this layer sensitivity study.

Note that while iterating on our selective quantization recipes, we found performance gaps in TorchAO’s kernel for quantizing tensors to NVFP4. We improved NVFP4 performance in this PR by upgrading the `to_nvfp4` kernel to use MSLK.

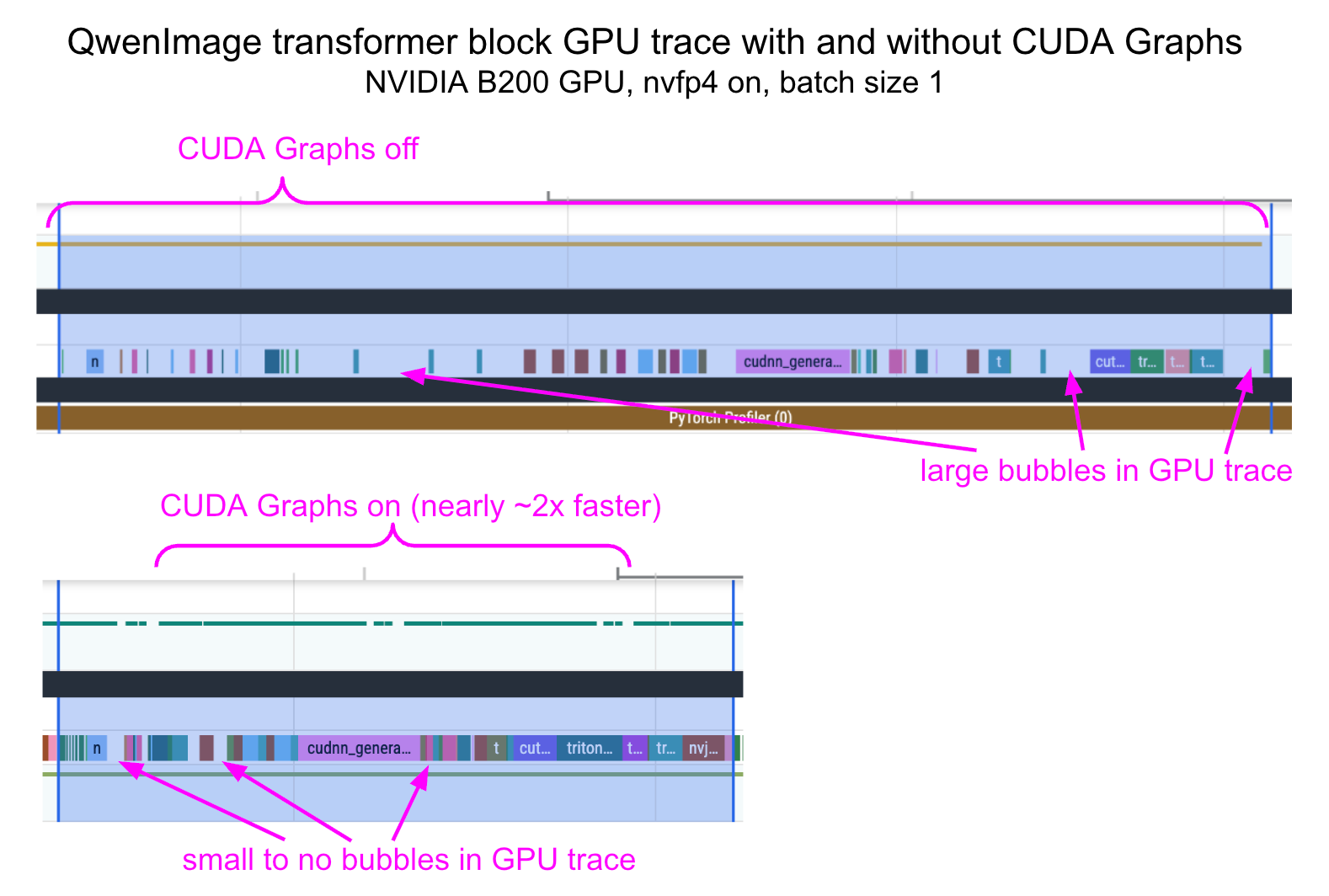

Improving CPU Overhead with CUDA Graphs

We noticed that with NVFP4 at small batch sizes such as 1, CPU overhead eats noticeably into the latency improvements. The GPU finishes each kernel quickly, so the CPU-side launch work becomes a larger share of each step. To significantly reduce this overhead, we used the “reduce-overhead” compilation mode, which enables CUDA Graphs. Below, we provide the profile traces before and after applying CUDA Graphs.

To cleanly compose torch.compile(..., mode='reduce-overhead') with the per-block compilation from the Diffusers library, we had to wrap each transformer block in a function that clones its inputs. The PR that does this is here, and it shows a 1.81x speedup for QwenImage + NVFP4 at batch size 1.
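The reason for cloning relates to how CUDA Graphs work in torch.compile. Our understanding, based on the PyTorch CUDA Graph Trees documentation, is that outputs of a CUDA-graphed region live in memory that a later replay may overwrite. The documented remedy is to clone such tensors before reusing them. Cloning at each block boundary gives every compiled block inputs that it owns.

The sketch below is a minimal, framework-agnostic version of that wrapper, not the code from the PR. `clone_inputs` is a hypothetical name. The wrapper only clones top-level arguments that expose a `.clone()` method (as `torch.Tensor` does), so tensors nested inside tuples, such as rotary embeddings, are passed through unchanged.

```python
def _maybe_clone(value):
    # torch.Tensor exposes .clone(); other arguments (ints, None, tuples) pass through unchanged
    clone = getattr(value, "clone", None)
    return clone() if callable(clone) else value


def clone_inputs(fn):
    """Wrap fn so each top-level positional/keyword argument with .clone() is copied first."""
    def wrapper(*args, **kwargs):
        args = tuple(_maybe_clone(a) for a in args)
        kwargs = {k: _maybe_clone(v) for k, v in kwargs.items()}
        return fn(*args, **kwargs)
    return wrapper


# Hypothetical usage on a CUDA machine (not how the PR integrates with Diffusers):
# compiled_block = clone_inputs(torch.compile(block, mode="reduce-overhead", fullgraph=True))
```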

Evaluating Image Generation Accuracy with LPIPS

We used the LPIPS (GitHub) metric to measure how similar the images generated by a quantized model are to the images generated by the baseline (bfloat16) model. In pseudocode:

lpips_scores = []
for prompt in dataset:
    kwargs = {"prompt": prompt}  # plus the model-specific inference parameters listed earlier
    # Seed a fresh generator for each pipeline so both start from identical noise
    image_baseline = pipe_bf16(
        **kwargs, generator=torch.Generator(device=device).manual_seed(seed)
    ).images[0]
    image_quantized = pipe_quantized(
        **kwargs, generator=torch.Generator(device=device).manual_seed(seed)
    ).images[0]
    lpips_scores.append(calculate_lpips_score(image_baseline, image_quantized))
lpips_mean = sum(lpips_scores) / len(lpips_scores)

The actual code we used is here.
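The pseudocode leaves `calculate_lpips_score` undefined. Below is a minimal sketch of one possible implementation, not the authors' code. It assumes the `lpips` PyPI package (whose `lpips.LPIPS(net="alex")` matches the AlexNet backbone mentioned earlier) and same-sized RGB images as PIL images or uint8 arrays. LPIPS expects Nx3xHxW inputs scaled to [-1, 1], which is what `to_lpips_input` (a hypothetical helper) produces.

```python
import numpy as np


def to_lpips_input(image):
    """Convert an HxWx3 uint8 RGB image to a 1x3xHxW float32 array scaled to [-1, 1]."""
    arr = np.asarray(image, dtype=np.float32)
    if arr.ndim != 3 or arr.shape[-1] != 3:
        raise ValueError(f"expected an HxWx3 RGB image, got shape {arr.shape}")
    arr = arr / 127.5 - 1.0  # [0, 255] -> [-1, 1]
    return np.ascontiguousarray(arr.transpose(2, 0, 1)[None])


def calculate_lpips_score(image_a, image_b, loss_fn=None):
    """LPIPS distance between two same-sized RGB images; pass a prebuilt loss_fn to avoid rebuilding it."""
    import torch  # imported lazily so the preprocessing above works without torch
    import lpips

    if loss_fn is None:
        loss_fn = lpips.LPIPS(net="alex")
    a = torch.from_numpy(to_lpips_input(image_a))
    b = torch.from_numpy(to_lpips_input(image_b))
    with torch.no_grad():
        return loss_fn(a, b).item()
```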

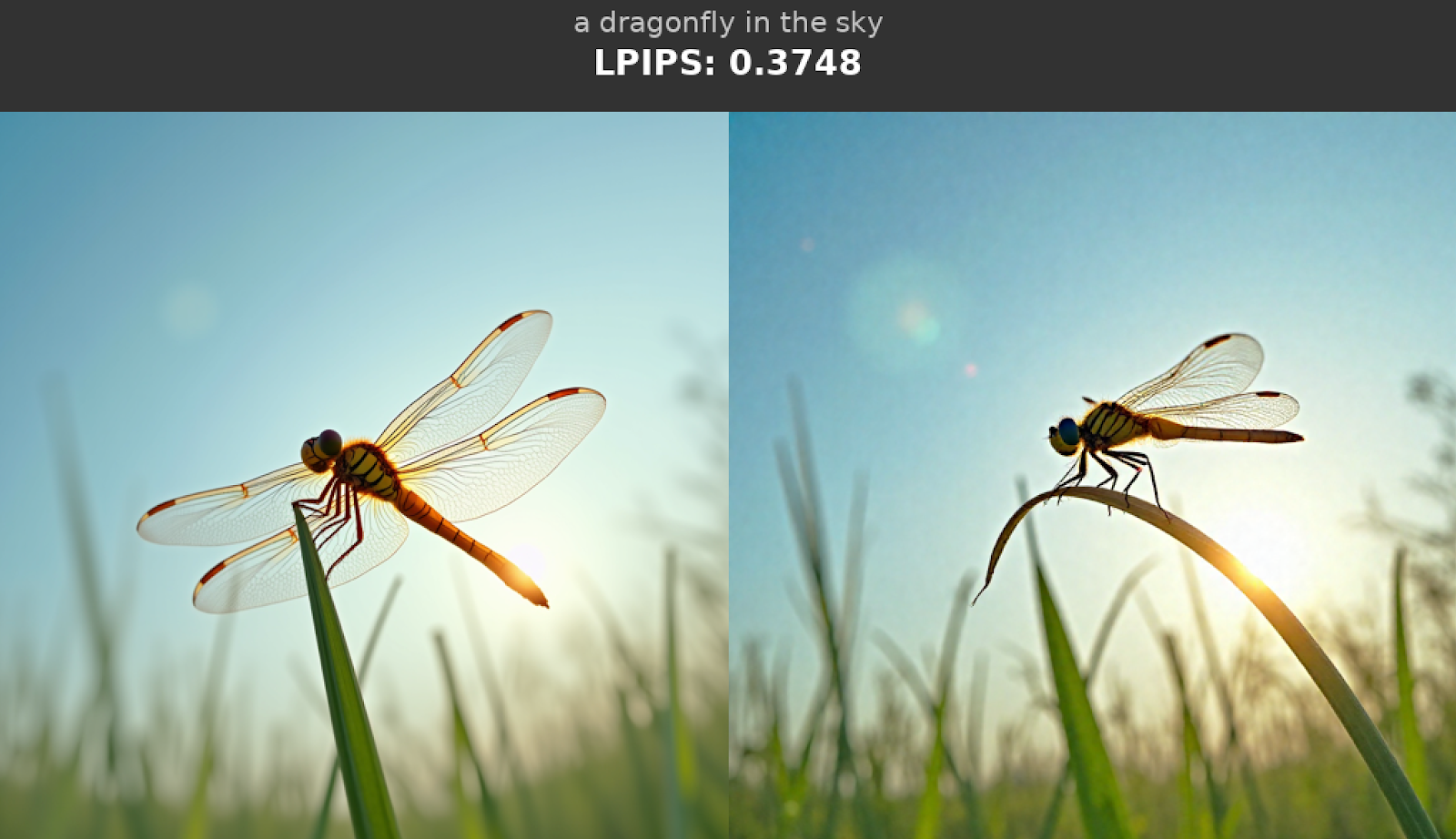

Example LPIPS Scores for Pairs of Images

This section provides example LPIPS scores for pairs of images to help put the LPIPS metrics reported above into context, and enable readers to reason about “what is a good LPIPS score”.

The images below were generated with FLUX.1-dev. The images on the left are the baseline (bfloat16), and the images on the right are from quantizing every torch.nn.Linear of the model with MXFP8. The LPIPS scores are based on the comparison of the image on the right (experiment) to the image on the left (baseline).

Below, we provide a similar comparison but with NVFP4 images on the right-hand side.

Conclusion

In this post, we investigated the performance of the NVFP4 and MXFP8 quantization schemes on popular image and video generation models. We presented recipes that provide a reasonable trade-off between speed, quality, and memory. We also described issues that can get in the way of optimal performance, along with how we addressed them. We hope these recipes help improve the performance of your image and video generation workloads.

Resources

- Code repository

- TorchAO docs:

- Diffusers x TorchAO integration

All outputs can be found here

PyTorch Foundation Announces Safetensors as Newest Contributed Project to Secure AI Model Execution

8 Apr 2026, 7:00 am

Safetensors is welcomed into the PyTorch Foundation to secure model distribution and build trusted agentic solutions

PARIS – PyTorch Conference EU – April 8, 2026 – The PyTorch Foundation, a community-driven hub for open source AI under the Linux Foundation, today announced that Safetensors has joined the Foundation as its newest foundation-hosted project alongside DeepSpeed, Helion, PyTorch, Ray, and vLLM. Contributed by Hugging Face, Safetensors prevents arbitrary code execution risks and enhances model performance across multi-GPU and multi-node deployments, addressing growing technical needs of the AI era.

As AI model development accelerates, security risks in the production pipeline inherently increase, necessitating secure, high-performance formats that can keep pace with deployment. Safetensors joining the Foundation minimizes security risks associated with model architectures and execution, providing developers with a trusted path to production.

“Safetensors’ contribution to the PyTorch Foundation is an important step towards scaling production-grade AI models,” said Mark Collier, Executive Director of the PyTorch Foundation. “Safetensors ensures secure model distribution and de-risks code execution, all while offering significant speed across complex computing architectures. For security, Safetensors is a crucial piece of the open source AI stack that will drive fast, secure, and technically advanced AI.”

Developed and maintained by Hugging Face, Safetensors has become one of the most widely adopted tensor serialization formats in the open source machine learning (ML) ecosystem. With earlier pickle-based formats, developers or bad actors could embed arbitrary, untrusted code in shared model files, and that code would execute when the files were loaded. Acting as a table of contents for an AI model’s data, Safetensors prevents arbitrary code execution and is now one of the most widely used metadata formats for model distribution.
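The "table of contents" description maps directly onto the file layout. A .safetensors file has three parts:

1. An 8-byte little-endian unsigned integer N.
2. N bytes of UTF-8 JSON mapping each tensor name to its `dtype`, `shape`, and `data_offsets`, plus an optional `__metadata__` entry.
3. The raw tensor bytes.

Reading the header is plain JSON parsing, with nothing executed. The sketch below reads only that header; `read_safetensors_header` is a hypothetical helper, and for real use the `safetensors` library's own loaders (e.g. `safetensors.torch.load_file`) are the way to go.

```python
import json
import struct


def read_safetensors_header(path):
    """Return the JSON header of a .safetensors file without reading any tensor data."""
    with open(path, "rb") as f:
        (header_len,) = struct.unpack("<Q", f.read(8))  # little-endian unsigned 64-bit size
        return json.loads(f.read(header_len).decode("utf-8"))
```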

Developers and contributors interested in participating in the PyTorch project ecosystem are encouraged to join the community onsite at upcoming events like PyTorch Conference China (Shanghai, September 8-9) and PyTorch Conference North America (San Jose, October 20-21).

Supporting Quotes

Safetensors joining the PyTorch Foundation is an important step towards using a safe serialization format everywhere by default. The new ecosystem and exposure the library will gain from this move will solidify its security guarantees and usability. Safetensors is a well-established project, adopted by the ecosystem at large, but we’re still convinced we’re at the very beginning of its lifecycle: the coming months will see significant growth, and we couldn’t think of a better home for that next chapter than the PyTorch Foundation.

– Luc Georges, Co-Maintainer, Safetensors & Lysandre Debut, Chief Open Source Officer, Hugging Face

“Safetensors joining the PyTorch Foundation promises safer, more interoperable packaging for model artifacts. The project has become a de facto standard for open-weight model distribution by halting risk associated with arbitrary code execution while also supporting fast, practical loading workflows. Together with Helion, these contributions to the Foundation solidify the technical future for open source AI.”

– Matt White, Global CTO of AI at the Linux Foundation and CTO of the PyTorch Foundation

###

About the PyTorch Foundation

The PyTorch Foundation is a community-driven hub supporting the open source PyTorch framework and a broader portfolio of innovative open source AI projects, including DeepSpeed, Helion, PyTorch, Ray, Safetensors, and vLLM. Hosted by the Linux Foundation, the PyTorch Foundation provides a vendor-neutral, trusted home for collaboration across the AI lifecycle—from model training and inference, to domain-specific applications. Through open governance, strategic support, and a global contributor community, the PyTorch Foundation empowers developers, researchers, and enterprises to build and deploy AI at scale. Learn more at https://pytorch.org/foundation.

About the Linux Foundation

The Linux Foundation is the world’s leading home for collaboration on open source software, hardware, standards, and data. Linux Foundation projects are critical to the world’s infrastructure, including Linux, Kubernetes, LF Decentralized Trust, Node.js, ONAP, OpenChain, OpenSSF, PyTorch, RISC-V, SPDX, Zephyr, and more. The Linux Foundation focuses on leveraging best practices and addressing the needs of contributors, users, and solution providers to create sustainable models for open collaboration. For more information, please visit us at linuxfoundation.org.

The Linux Foundation has registered trademarks and uses trademarks. For a list of trademarks of The Linux Foundation, please see its trademark usage page: www.linuxfoundation.org/trademark-usage. Linux is a registered trademark of Linus Torvalds.

Media Contact

Grace Lucier

The Linux Foundation

pr@linuxfoundation.org

Monarch: an API to your supercomputer

8 Apr 2026, 7:00 am

Getting distributed training jobs to run on huge clusters is hard! This is especially true when you start looking at more complex setups like distributed reinforcement learning. Debugging these kinds of jobs is frustrating, and the turnaround time for changes tends to be very slow.

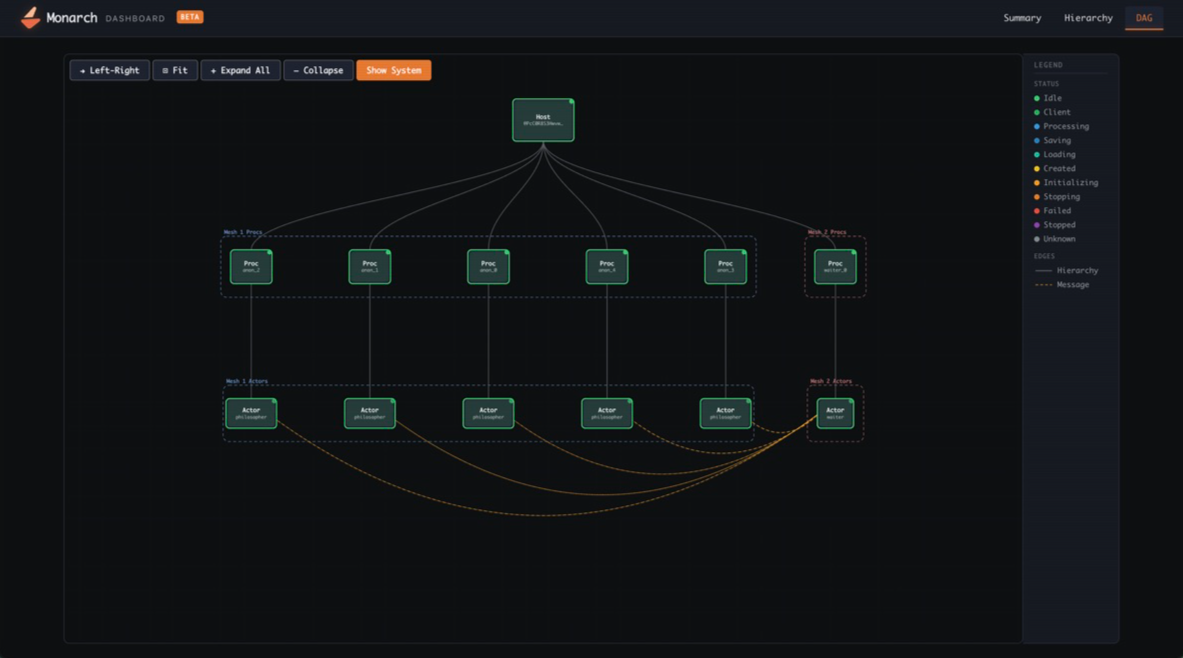

Monarch is a distributed programming framework for PyTorch that makes the cluster programmable through a simple Python API. It exposes the supercomputer as a coherent, directly controllable system—bringing the experience of local development to large-scale training, as if your laptop had 1000s of GPUs attached. A complete training system can be defined in a single Python program. Core primitives are explicit and minimal, enabling higher-level capabilities—fault tolerance, orchestration, tooling integration—to be built as reusable libraries.

Monarch is optimized for agentic usage, providing consistent infrastructure abstractions and exposing telemetry via standard SQL-based APIs that agents already excel at using. Agents can handle many development tasks from your dev machine alone, and Monarch turns that dev machine into a supercomputer, leveling up those agents.

The project launched at PyTorch Conference in October 2025; you can read about it here: Introducing PyTorch Monarch. This post covers how Monarch has evolved into an effective framework for agent-driven training development. It also covers Monarch’s major improvements since October, including native Kubernetes support, RDMA improvements, distributed telemetry, and more.

Agentic Development in Monarch

By representing your supercomputing cluster through a coherent model of hosts, procs, and actors, and pairing it with “batteries included” infrastructure, Monarch gives your agent superpowers! It can directly manage and debug running code, rapidly sync dependencies and data, run new code, and provision additional hosts, procs, and actors in an efficient and consistent way regardless of where it is deployed.

Let’s quickly review some key features Monarch uses to empower agentic development:

- RDMA-Powered Remote File System – Distribute files from the client to every host in the job via RDMA, exposed on each host as a read-only mounted filesystem. This lets you sync code, dependencies, and containers very quickly while iterating on machine learning ideas. Monarch’s RDMA filesystem is in turn built on Monarch RDMA buffers and PyFuse.

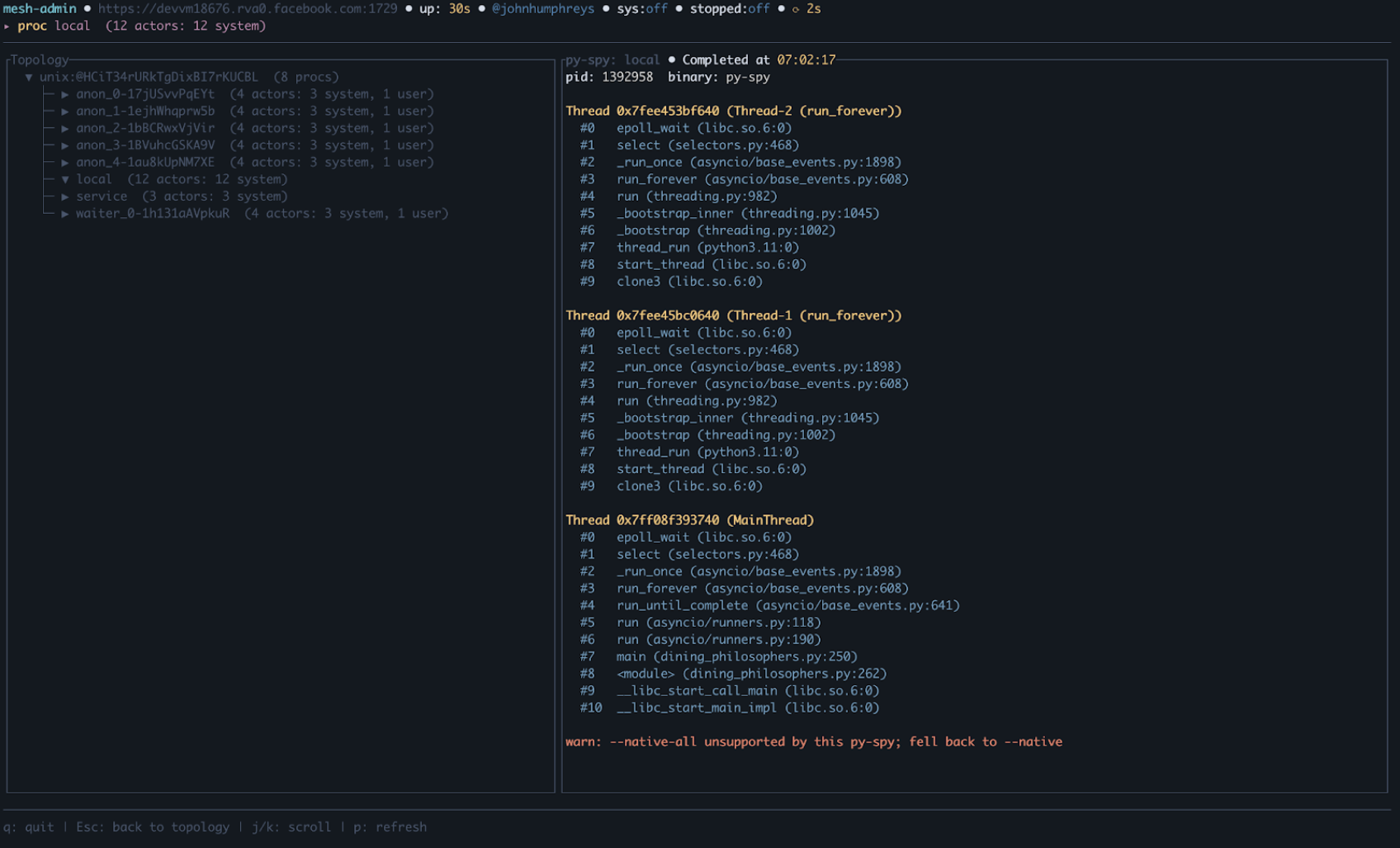

- Distributed SQL Telemetry – Use Monarch’s integrated lightweight distributed SQL engine to collect live state information, py-spy traces, and logs from all distributed processes/actors/etc. We used Monarch to directly run a DataFusion distributed SQL query engine *in situ*; each node in turn writes live state information into a set of tables that can then be queried directly and efficiently by an agent. This makes it very easy to explore the state of the system when debugging.

- Jobs API – Provision resources (hosts) once and run as many jobs as needed on them without paying the repeated allocation penalty. Monarch comes with support for Kubernetes and SLURM; other schedulers can be integrated by implementing a Monarch Job.

Collectively, these features enable agents to be efficient across some key phases of development; they can restart jobs fast, sync new code, dependencies, and data fast, and debug fast, all from a central point. In short, Monarch makes the distributed system feel local and provides a toolbox to reduce the iteration time when tackling problems.

What’s new in Monarch?

Let’s review what is new in Monarch since its launch at the PyTorch Conference in October 2025 (~6 months ago).

Kubernetes

Monarch now has first-class Kubernetes support.

- Monarch-kubernetes OSS repository – A dedicated repo (github.com/meta-pytorch/monarch-kubernetes) with a MonarchMesh Custom Resource Definition, a reference KubeBuilder operator, and a hello-world demo. The MonarchMesh label propagation also enables scheduling via Kueue.

- Just-in-time pod provisioning – Pods are allocated on demand rather than reserved upfront, improving cluster utilization.

- External gateway – Out-of-cluster clients can now connect to Monarch meshes running inside Kubernetes (landing in 0.5).

- Versioned and nightly Docker containers – Published to GHCR for reproducible deployments.

RDMA & Networking

Monarch has continued its investment in RDMA, adding support for multiple new backends and providing a higher-level API to make supporting and using them easier.

- AWS EFA RDMA support – Monarch’s RDMABuffer now supports Elastic Fabric Adapter (EFA) on AWS, extending high-performance networking beyond InfiniBand. Validated at 16 Gbps – 10x faster than TCP (14.5 GB in 7.6 seconds). Available in PyPI nightlies.

- AMD ROCm GPU support – GPU-direct RDMA and RCCL collective communication now work on AMD GPUs via ROCm with Mellanox interfaces.

- Unified RDMA API – A hardware-portable RDMA interface that works across InfiniBand (mlx5), AWS EFA, and ROCm, letting users write once and run on any fabric, or fall back to Monarch actor messaging when not available.

Observability & Telemetry

Monarch has leaned heavily into observability & telemetry, adding programmatic mechanisms to empower agentic development. There are also new native dashboards, terminal UIs (TUIs), and support for OSS standards commonly used by DevOps teams.

- Distributed SQL Telemetry – A client-accessible SQL endpoint, enabling easy analysis of the distributed system without third-party dependencies.

- Admin API & Terminal UI – A terminal-based interface for inspecting and managing live Monarch jobs, backed by a powerful API for accessing internals.

- OpenTelemetry integration – Native OTel support for metrics, logs, and visualization on Kubernetes, giving users full observability on any cluster. This is easily integrated with Prometheus, Loki, Grafana, and other common OSS tooling.

- Per-job OSS dashboard (Beta) – A built-in web dashboard for visualizing and debugging distributed jobs without external tooling.

Portability & Installation

Monarch is now significantly more compact and much faster to start, making it easier to use than ever.

- 100x smaller install, 8x faster startup – The pip wheel footprint was reduced by two orders of magnitude with dramatically faster cold-start times. libpython linking requirements were removed entirely.

- Torch dependency removed – As of v0.2, torchmonarch no longer pulls in torch as a pip dependency, simplifying installation and avoiding version conflicts.

- Native uv support – Monarch works out of the box with uv, the fast Python package manager. Three commands to get started: git clone, cd, uv run example.py. See the example repo.

- Consolidated PyPI packaging – All packages are unified under a single torchmonarch name with PEP 440 pre-release versioning for nightlies: pip install --pre torchmonarch. ARM64 Linux builds were also added in v0.4.

Developer Experience

- Interactive SPMD – Improved support for interactive, notebook-style development with SPMD (Single Program, Multiple Data) jobs.

- RDMA File System – Fast, convenient file-sync across hosts.

Collaborations

We’d also like to take a moment to acknowledge some collaborators that have helped make Monarch better since its release.

- SkyPilot

  - Run Monarch on any Kubernetes cluster with a single command – the SkyPilot integration lets users sky launch Monarch workloads on any K8s cluster or cloud without changing their Monarch code. Great for teams that need GPUs wherever they’re available.

  - Multi-node distributed training with zero infra setup – SkyPilot handles node provisioning, networking, and gang scheduling so users can focus on their Monarch training logic. The integration uses Monarch’s JobTraits API to plug into SkyPilot as the job backend. No need to install separate operators on your k8s clusters.

  - See https://github.com/meta-pytorch/monarch/tree/main/examples/skypilot for more.

- VeRL

  - VeRL is a popular open-source framework for distributed RLHF post-training. In collaboration with ByteDance’s VeRL team, we developed a Monarch backend for VeRL’s single-controller architecture. The backend includes:
    - new resource pool abstractions built on Monarch’s Job API;
    - colocated multi-role worker support;
    - an RDMA-based transport layer that moves tensors out-of-band for VeRL’s DataProto exchange pattern;
    - a vLLM server integration that solves actor handle discovery without relying on a global actor registry.

    We validated that VeRL’s PPO and GRPO training loops can run on Monarch through this backend using VeRL’s hybrid-engine training mode, producing numerically identical results with no performance regression. One finding from this work: while VeRL’s single-controller interface is cleanly abstracted, Ray API usage surfaces throughout the broader codebase, making a non-invasive backend swap more involved than the interface alone suggests. This is a common pattern in frameworks built on Ray, and something the Monarch and VeRL communities can collaborate on over time.

- AMD

  - Monarch expanded its compatibility and performance across leading hardware infrastructure by adding AMD as a supported platform. Our partners at AMD validated Monarch on their ROCm platform, enabling seamless SLURM-based orchestration for MI300/325/355 clusters. This integration allows users to efficiently schedule, manage, and scale AI workloads across AMD GPUs, leveraging the familiar SLURM ecosystem widely used in HPC and AI research.

  - Thanks to their effort, Monarch now supports RDMA (Remote Direct Memory Access) for fast GPU-to-GPU communication on AMD clusters equipped with Mellanox network interfaces. This hardware combination is available on major cloud providers like Azure and Oracle, enabling high-throughput, low-latency data transfers essential for distributed RL training and large-scale AI workloads.

Conclusion

Monarch is the API for your supercomputer, making distributed AI development feel like building a local app. The future of AI training demands speed and simplicity, and Monarch provides both, for humans and agents alike. We encourage you to explore the latest features, join our growing OSS-first development community, and help shape the next chapter of distributed computing.

Acknowledgments

Thank you to the whole Monarch team for making this work possible. Also, a special thanks to our Top Contributors on GitHub!

Special thanks to our partners at Google Cloud and Runhouse for helping integrate Monarch with Kubernetes, and to our partners at SkyPilot and AMD for their contributions!

Ahmad Sharif, Allen Wang, Ali Sol, Amir Afzali, Carole-Jean Wu, Chris Gottbrath, Christian Puhrsch, Colin Taylor, Do Hyung (Dave) Kwon, Gayathri Aiyer, Hamid Shojanazeri, Jiyue Wang, Joe Spisak, John William Humphreys, Jun Li, Lucas Pasqualin, Marius Eriksen, Matthew Zhang, Matthias Reso, Peng Zhang, Riley Dulin, Rithesh Baradi, Robert Rusch, Sam Lurye, Samuel Hsia, Shayne Fletcher, Tao Lin, Thomas Wang, Victoria Dudin, Zachary DeVito

SOTA Normalization Performance with torch.compile

8 Apr 2026, 7:00 am

Introduction

Normalization methods (LayerNorm/RMSNorm) are foundational in deep learning: they normalize the values of a layer’s inputs, which makes training deep learning models smoother. We evaluate and improve torch.compile performance for LayerNorm/RMSNorm on NVIDIA H100 and B200, reaching near-SOTA performance on a kernel-by-kernel basis, with further speedups from automatic fusion.

Forwards

LayerNorm

LayerNorm was first introduced in this paper: https://arxiv.org/abs/1607.06450. It normalizes the inputs using their mean and variance, then scales and shifts them with the learnable parameters gamma (weight) and beta (bias).
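As a reference point, the LayerNorm forward can be written as a minimal pure-Python sketch over a list of rows, normalizing along the last (contiguous) dimension. The function name `layer_norm` and the list-of-rows layout are our own illustrative choices; eps=1e-5 matches the `torch.nn.LayerNorm` default, and the variance is the biased (population) variance that LayerNorm uses.

```python
import math


def layer_norm(x, gamma, beta, eps=1e-5):
    """Reference LayerNorm over the last dimension of a list of rows (illustrative)."""
    out = []
    for row in x:
        n = len(row)
        mean = sum(row) / n
        var = sum((v - mean) ** 2 for v in row) / n  # biased (population) variance
        rstd = 1.0 / math.sqrt(var + eps)            # reciprocal standard deviation
        # Normalize, then scale by gamma (weight) and shift by beta (bias).
        out.append([(v - mean) * rstd * g + b for v, g, b in zip(row, gamma, beta)])
    return out
```

With gamma of ones and beta of zeros, every output row has (approximately) zero mean and unit variance.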

RMSNorm

RMSNorm (root mean square norm) was introduced as a follow-up to LayerNorm in this paper: https://arxiv.org/abs/1910.07467. Instead of centering on the mean, it normalizes by the RMS, the square root of the mean of the squared values of x. Gamma (weight) is still a learnable scaling parameter, but there is no longer a bias term.
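A matching pure-Python sketch for RMSNorm follows; `rms_norm` is a hypothetical helper name. The eps value is an assumption, since implementations differ (for example, `torch.nn.RMSNorm` falls back to the dtype's machine epsilon when eps is not given).

```python
import math


def rms_norm(x, weight, eps=1e-6):
    """Reference RMSNorm over the last dimension (illustrative; eps is an assumption)."""
    out = []
    for row in x:
        mean_sq = sum(v * v for v in row) / len(row)  # mean of squares (no centering)
        rstd = 1.0 / math.sqrt(mean_sq + eps)         # 1 / RMS
        out.append([v * rstd * w for v, w in zip(row, weight)])  # scale only, no bias
    return out
```

Because there is no mean subtraction, only one statistic per row is needed, which is part of why RMSNorm is slightly cheaper than LayerNorm.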

The forward passes for LayerNorm and RMSNorm are similar: typically a reduction across the contiguous dimension plus some extra pointwise ops, with RMSNorm being slightly more efficient because it has fewer FLOPs and no bias. For the purposes of this study, we present LayerNorm and RMSNorm benchmark results interchangeably given the similarity of the kernels.

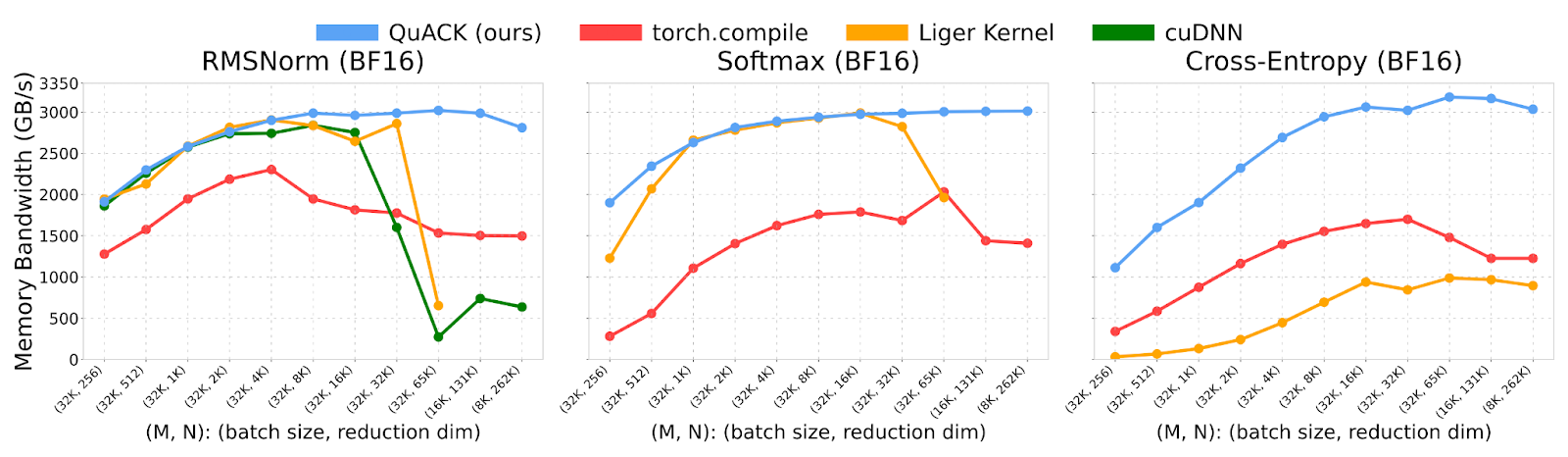

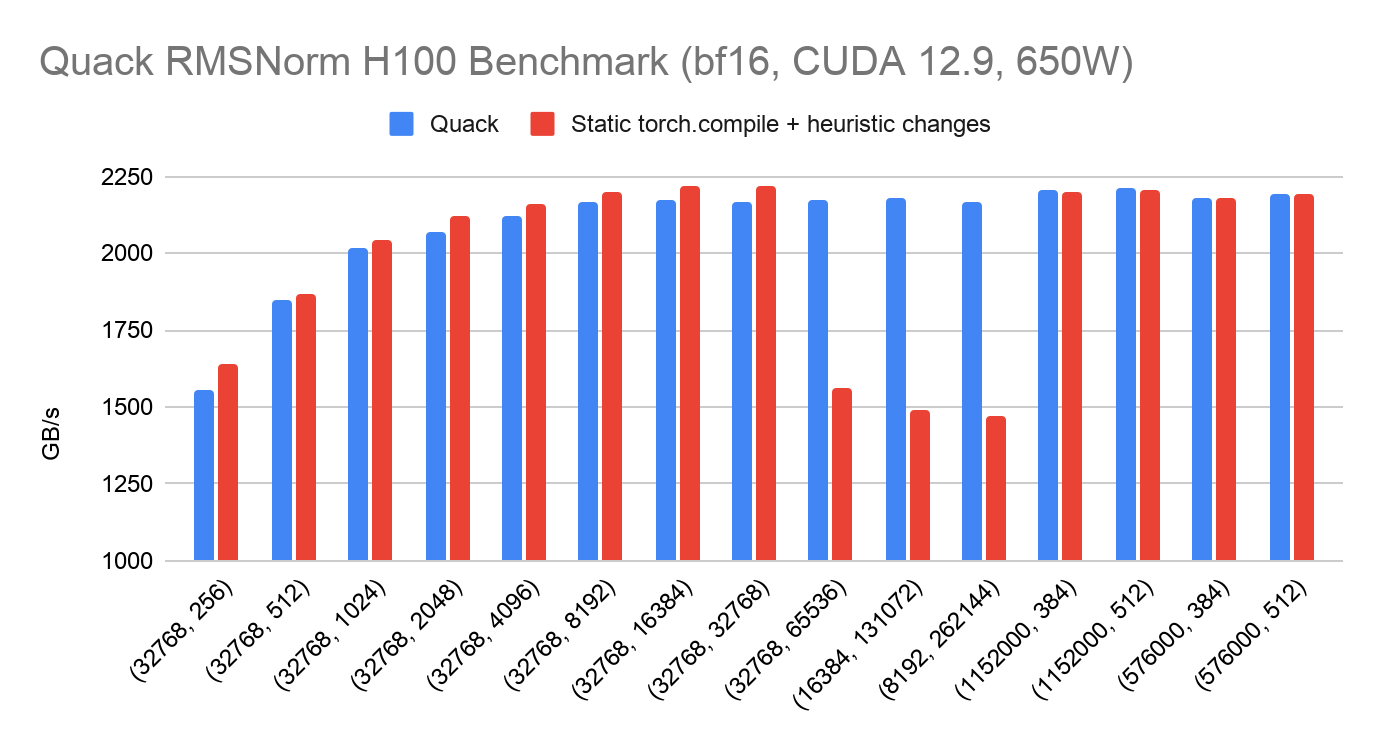

Quack

Quack is a library of hyper-optimized CuteDSL kernels from Tri Dao: https://github.com/Dao-AILab/quack. Its current README shows Quack outperforming torch.compile on these reduction kernels on H100. We use Quack as the SOTA baseline against which we evaluate torch.compile. The torch.compile results previously reported in Quack’s README show torch.compile typically reaching about 50% of Quack’s performance.

torch.compile

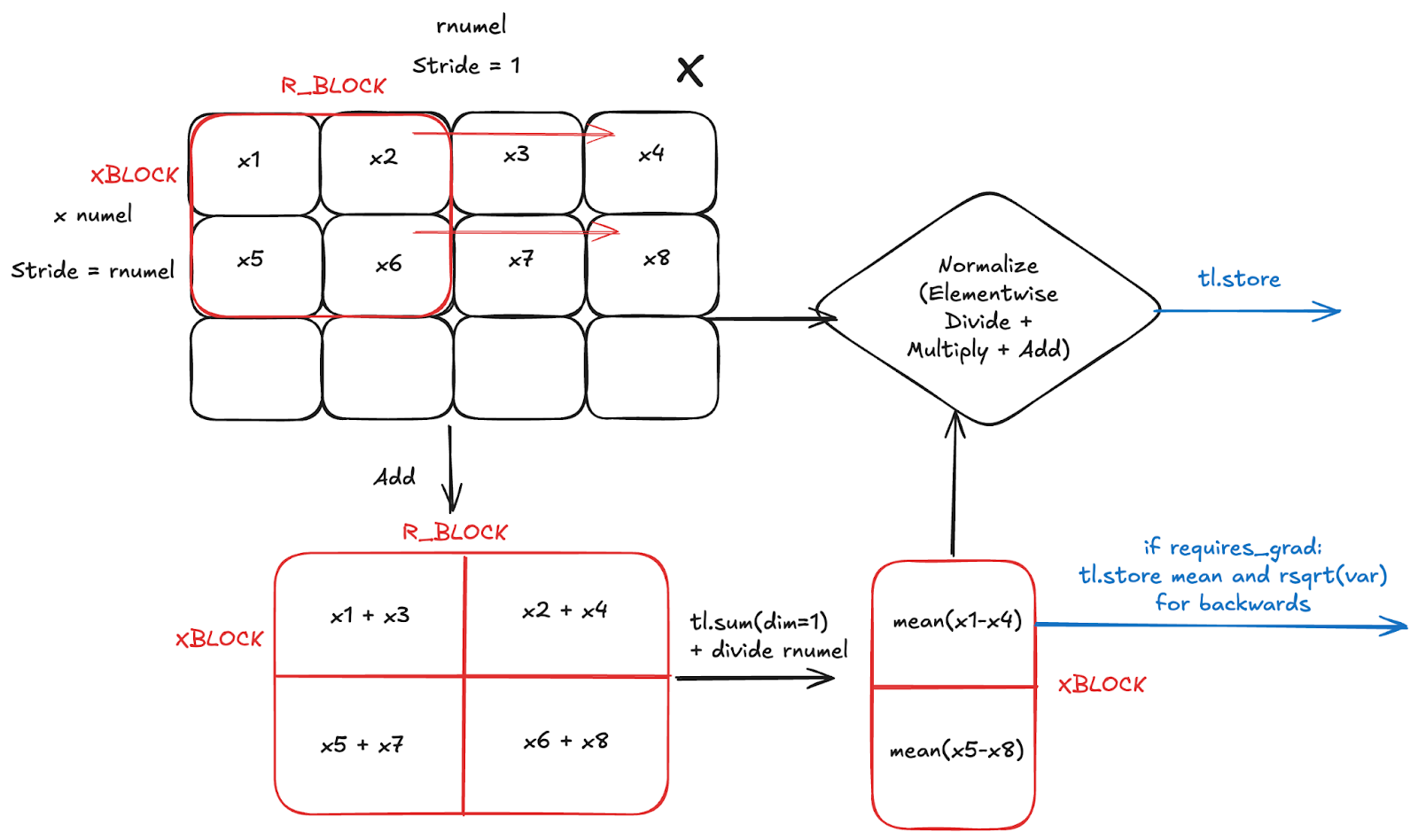

Below we illustrate the general logic of a torch.compile-generated kernel for the LayerNorm forward (RMSNorm follows the same approach). We assume that the input reduction dimension (rnumel) is contiguous, which Inductor calls an inner reduction.

While the kernel might look a bit confusing, what’s actually happening is very simple:

- Maintain partial sums of size R_BLOCK for each row of the input X

- Use partial sums to calculate mean and variance

- Apply elementwise to X based on layernorm formula

- Store output of elementwise

- Store mean and variance if elementwise_affine=True and requires_grad=True for backwards

As a side note, if the reduction size is below a heuristic threshold (1024), Inductor generates a persistent reduction, which handles the whole row at once instead of looping over the r dimension, and computes the mean directly.
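To make the two code paths concrete, here is a per-row Python sketch of the logic (not the Triton code Inductor actually emits). Assumptions: the helper names are hypothetical, `persistent_threshold` and `r_block` stand in for Inductor’s heuristic threshold and R_BLOCK, and the looped path keeps R_BLOCK lanes of Welford-style (count, mean, M2) statistics, which is one numerically stable way to turn per-lane partial results into a mean and variance. The real generated kernel may combine its partials differently.

```python
import math


def welford_combine(a, b):
    """Merge two (count, mean, M2) partial states using the parallel Welford update."""
    na, ma, m2a = a
    nb, mb, m2b = b
    n = na + nb
    if n == 0:
        return (0, 0.0, 0.0)
    delta = mb - ma
    return (n, ma + delta * nb / n, m2a + m2b + delta * delta * na * nb / n)


def layer_norm_row(row, gamma, beta, persistent_threshold=1024, r_block=256, eps=1e-5):
    """Illustrative per-row LayerNorm forward mirroring the kernel's two code paths.

    persistent_threshold and r_block are hypothetical stand-ins for Inductor's
    heuristic threshold and R_BLOCK. Returns (output, mean, rstd); the saved
    statistics are what a backward pass would reuse.
    """
    n = len(row)
    if n <= persistent_threshold:
        # Persistent reduction: the whole row is handled at once, no r-loop.
        mean = sum(row) / n
        var = sum((v - mean) ** 2 for v in row) / n
    else:
        # Looped reduction: R_BLOCK lanes of partial statistics, walked in chunks.
        lanes = [(0, 0.0, 0.0)] * r_block
        for start in range(0, n, r_block):
            for lane, v in enumerate(row[start:start + r_block]):
                lanes[lane] = welford_combine(lanes[lane], (1, v, 0.0))
        total = (0, 0.0, 0.0)
        for state in lanes:  # collapse the lanes into one mean / variance
            total = welford_combine(total, state)
        _, mean, m2 = total
        var = m2 / n
    rstd = 1.0 / math.sqrt(var + eps)
    # Elementwise pass (a looped kernel re-reads the row from memory here).
    out = [(v - mean) * rstd * g + b for v, g, b in zip(row, gamma, beta)]
    return out, mean, rstd
```

Both paths produce the same statistics up to floating-point rounding; the difference is only how many passes over memory the kernel needs.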

Comparing the torch.compile and Quack versions of the RMSNorm forward, we can reproduce torch.compile’s poor performance relative to Quack on H100 and B200. However, after autotuning and using the results to inform Inductor’s defaults, we arrive at SOTA performance on H100 and B200. In general, the following was done to achieve this result:

- Inserting torch._dynamo.reset() during benchmarking: previously, the benchmark called torch.compile once per shape without a reset, so after the first recompile the compiler switched to automatic dynamic shapes. Resetting between shapes ensures each shape is compiled with static shapes

- Poor autotune configuration decisions: by default, Inductor made suboptimal autotune config choices on H100 and B200, leading to poor performance, though mode='max-autotune' mitigates this. Several improvements were made to the default heuristics:

- Scale up inner reduction RBLOCK

- Scale XBLOCK in persistent reductions for smaller reductions (numel <= 2048)

- Decrease num_warps based on certain reduction dimensions. num_warps was often too large to achieve peak vectorization, which is essential for maximizing bytes in flight and thus saturating memory bandwidth in memory-bound workloads. Blackwell is more sensitive to this given its higher memory bandwidth.

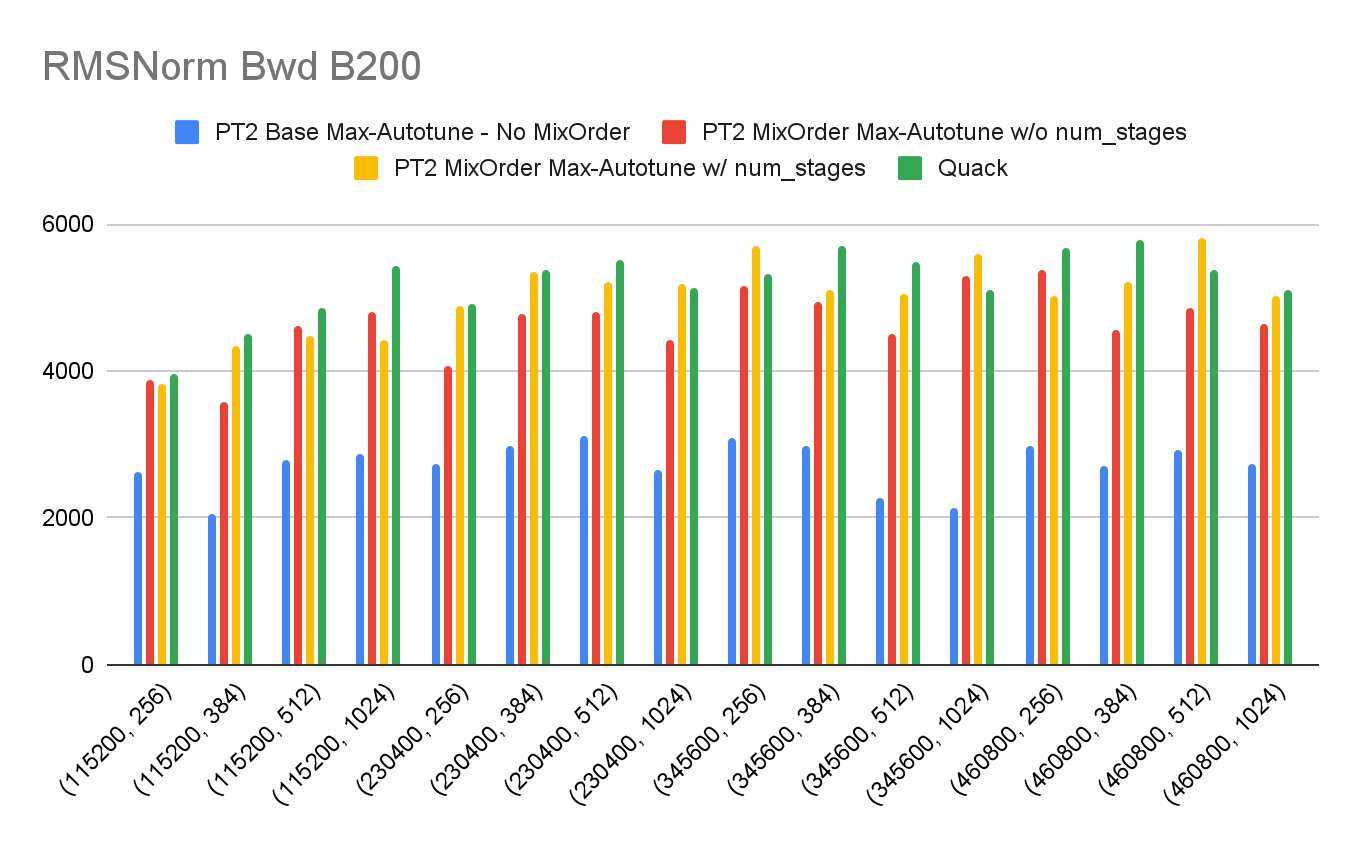

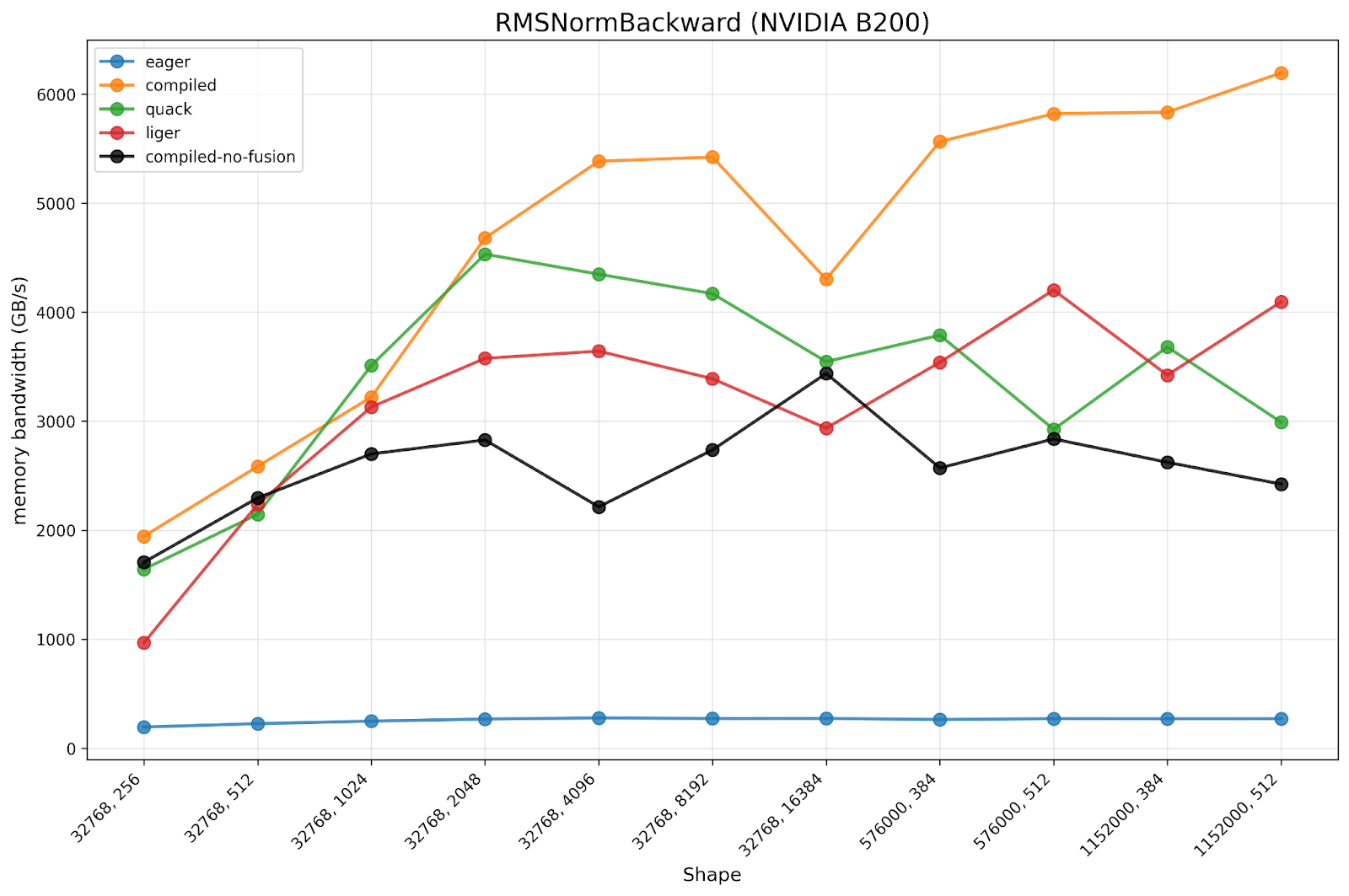

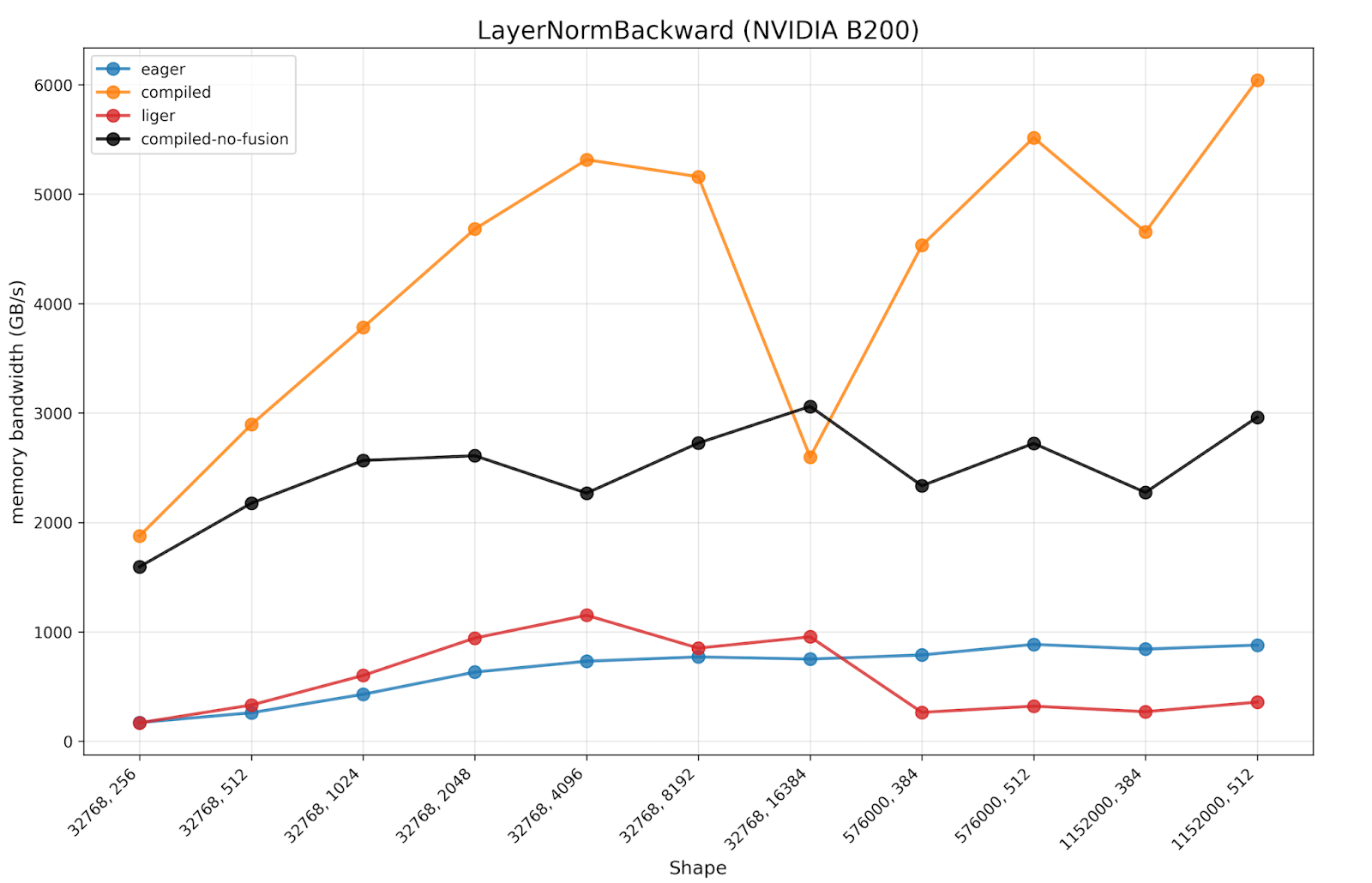

Benchmark Results

Below we present benchmark results of torch.compile 2.11 vs Quack (March 24th 2026 trunk) on the Quack benchmark shapes alongside some common shapes in the wild with large M and small N. torch.compile is generally at parity with Quack. Two classes of regressions do occur:

- Small regressions at N=384, as Triton cannot cleanly represent non-power-of-2 block sizes

- Large regressions on very large N for H100, due to the inability to represent distributed shared memory in Triton

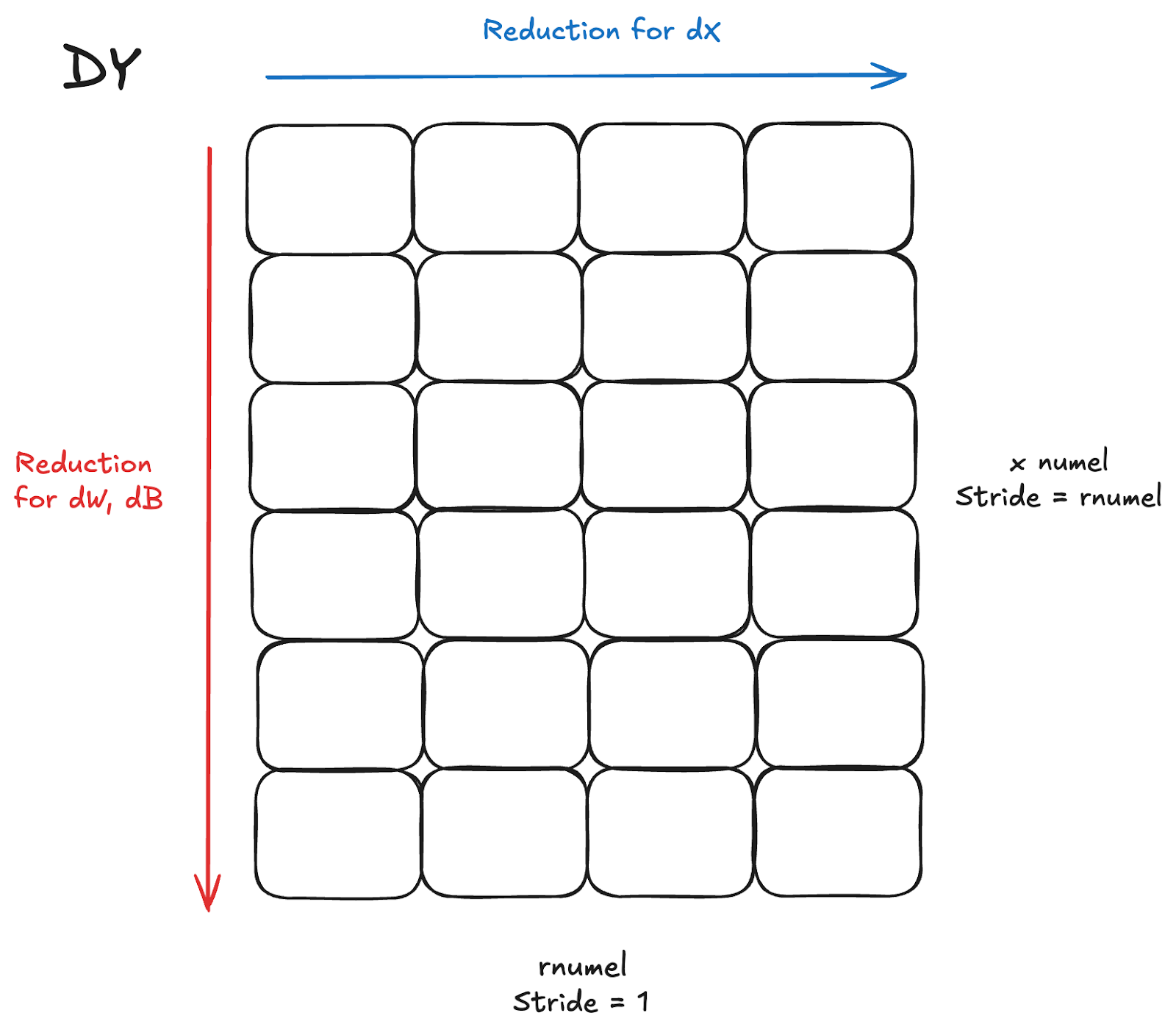

Backwards

The backward pass for LayerNorm/RMSNorm is more involved than the forward pass. We have to calculate at least two gradients, dX for the input and dW for the weights, plus dB for the bias in LayerNorm. Leaving the full formulas aside, the key performance consideration is that these gradients require reductions across both dimensions of dY, the incoming gradient (the gradient with respect to the forward output): dX reduces along each row, while dW and dB reduce along each column.

The naive option, which is sometimes unavoidable with a very large reduction dimension, is to perform the reductions in separate kernels: one for dX, and one for dW and dB. However, that reads the same input (dY) in two separate kernels, doubling the bytes read. Because normalization kernels are memory-bound, this adds significant latency.
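For RMSNorm, with rstd = 1/sqrt(mean(x^2) + eps), x_hat = x * rstd, and g = dY * w taken row by row, the standard gradients are dW_j = sum_i dY_ij * x_hat_ij (a per-column reduction) and dX = rstd * (g - x_hat * mean(g * x_hat)) (a per-row reduction). The plain-Python sketch below writes the naive option as two separate "kernels"; both functions walk over all of X and dY, which is the doubled read traffic described above. The function names are hypothetical, and rstd is recomputed rather than saved from the forward pass as a real implementation would do.

```python
import math


def rstd_of(row, eps=1e-6):
    """1 / RMS of a row (recomputed here; a real kernel would save it from the forward)."""
    return 1.0 / math.sqrt(sum(v * v for v in row) / len(row) + eps)


def rmsnorm_backward_dx(x, w, dy, eps=1e-6):
    """'Kernel' 1: dX needs an INNER (per-row) reduction; reads all of X and dY."""
    dx = []
    for row, drow in zip(x, dy):
        r = rstd_of(row, eps)
        xhat = [v * r for v in row]
        g = [d * wj for d, wj in zip(drow, w)]
        proj = sum(gj * hj for gj, hj in zip(g, xhat)) / len(row)  # row reduction
        dx.append([r * (gj - hj * proj) for gj, hj in zip(g, xhat)])
    return dx


def rmsnorm_backward_dw(x, dy, eps=1e-6):
    """'Kernel' 2: dW needs an OUTER (per-column) reduction; reads X and dY again."""
    dw = [0.0] * len(x[0])
    for row, drow in zip(x, dy):
        r = rstd_of(row, eps)
        for j, (v, d) in enumerate(zip(row, drow)):
            dw[j] += d * v * r  # column reduction across all rows
    return dw
```

A central finite-difference check on a small input is a quick way to confirm both formulas before optimizing the memory traffic.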

Fused Reductions

For reasonable shapes, where a single row fits comfortably in a thread block (generally up to 16384 elements), a more performant fused kernel is possible that doesn’t blow up shared memory or registers. The kernel performs the reduction for dW and dB as normal, but for each row it also reduces across the columns for dX at the same time. Prior work on this type of fusion includes Liger; a fused, semi-persistent normalization backward from Meta; and Quack’s fused kernel in CuteDSL.

In Inductor, we represent reductions with distinct types, such as:

- INNER reduction: reductions over the stride=1 (contiguous) dimension

- OUTER reduction: reductions over the remaining dimension

Based on these definitions, the fused kernel is an INNER and OUTER reduction on the same input tensor, with the INNER reduction as dX (contiguous) and the OUTER reduction as (dW, dB).
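A minimal sketch of the fused access pattern, using a hypothetical `fused_inner_outer` helper: one sweep over an [M, N] input produces one INNER result per row and one OUTER result per column, so each element is read once. Plain sums stand in for the actual dX and dW/dB math, which is an illustrative simplification.

```python
def fused_inner_outer(x):
    """Single pass over x computing both an INNER and an OUTER reduction.

    INNER: one sum per row (over the contiguous, stride-1 dimension).
    OUTER: one sum per column (over the remaining dimension).
    Returns (row_sums, col_sums, element_reads).
    """
    col_sums = [0.0] * len(x[0])  # OUTER accumulator carried across rows
    row_sums = []
    reads = 0
    for row in x:
        acc = 0.0                 # INNER accumulator for this row
        for j, v in enumerate(row):
            reads += 1            # each element is loaded exactly once
            acc += v
            col_sums[j] += v
        row_sums.append(acc)
    return row_sums, col_sums, reads
```

A two-kernel version computing the same results would perform 2 * M * N element reads instead of M * N.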

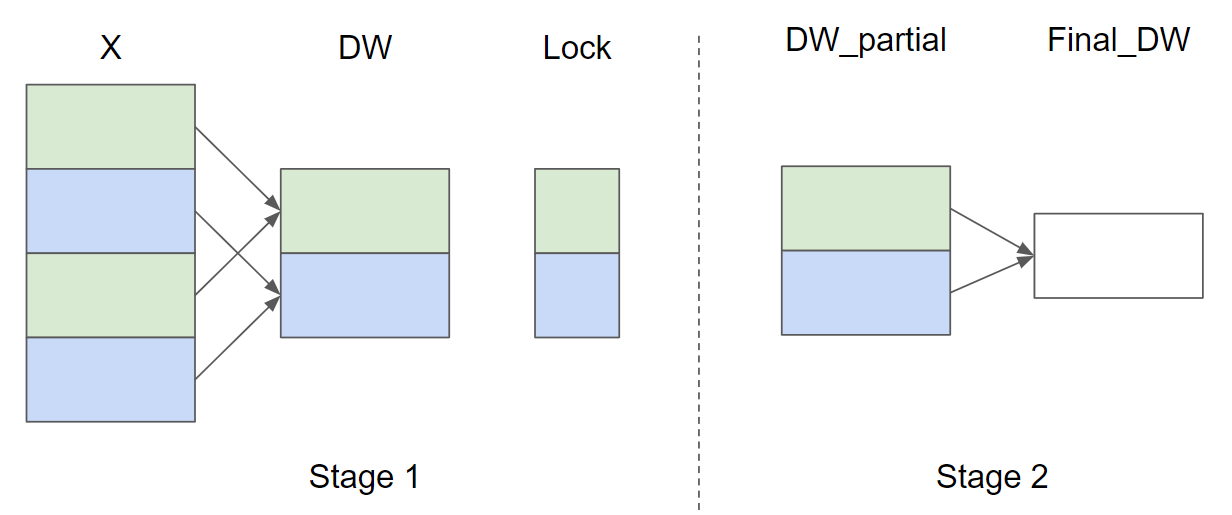

Split Reduction

Many shapes in the wild have a large xnumel (the batch dimension), much larger than rnumel. In this case, it is generally preferable to compute partial sums of the reduction across X, followed by a final torch.sum over the partial sums, which allows for better parallelism. The Triton LayerNorm tutorial illustrates this split reduction, but it uses locks and atomics, with a single thread block responsible for individual rows; this performs poorly for a large batch dimension (X) and leads to numerical inconsistencies.

Inductor already has a similar capability in its split reduction, which allocates a workspace tensor for the partial sums as above but does not use atomics. Instead, each CTA processes multiple rows and writes to its own unique slot in the workspace tensor.
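The workspace-based, atomic-free split reduction can be sketched for a column sum (an OUTER reduction over M). `split_column_sum` is a hypothetical name, and each chunk plays the role of one CTA. Because every chunk writes only its own workspace row and the final step combines chunks in a fixed order, the result does not depend on scheduling, unlike an atomics-based accumulation.

```python
def split_column_sum(x, split_size):
    """Column sums of an [M, N] list of rows via a split reduction (illustrative).

    Rows are partitioned into chunks of `split_size`; each chunk sums its rows
    into its own workspace row, so no two chunks write the same location and
    no atomics are needed. A second, much smaller reduction sums the workspace.
    """
    m, n = len(x), len(x[0])
    num_chunks = (m + split_size - 1) // split_size
    workspace = [[0.0] * n for _ in range(num_chunks)]
    for c in range(num_chunks):  # chunks are independent and could run in parallel
        for row in x[c * split_size:(c + 1) * split_size]:
            for j, v in enumerate(row):
                workspace[c][j] += v
    # Final reduction over the small [num_chunks, N] workspace, in a fixed order.
    return [sum(workspace[c][j] for c in range(num_chunks)) for j in range(n)]
```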

Inductor Generated Fused Norm Backwards

Combining the fused and split reduction paradigms described above, we enable TorchInductor to automatically generate fused state-of-the-art normalization backward kernels. Furthermore, allowing the compiler to generate such kernels allows for more autotuning and automatic fusion capabilities with surrounding operations. Since the main challenge here is to fuse reductions with the same input but different reduction order, we call this optimization MixOrderReduction.

For a given [M, N] shape input, the generated kernel performs:

- Split reduction: the M dimension is split into chunks of SPLIT_SIZE rows

- For each chunk, one row of the workspace tensor holds the partially reduced results of the OUTER reduction (e.g. partial column sums for dW, dB)

- For each chunk, each row of the chunk is loaded in a loop

- The INNER reduction is done as usual (e.g. reducing the entire row for dX)

- The loaded row is combined with the chunk’s workspace row to update the partial OUTER result

An extra reduction then reduces the partial results in the workspace tensor to produce the final OUTER reduction result. This extra kernel works on a much smaller input tensor, so keeping it separate is not a significant performance hit.
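Putting these steps together, here is a plain-Python sketch of a mix-order RMSNorm backward. It assumes the standard RMSNorm gradients (x_hat = x * rstd, g = dY * w, dX = rstd * (g - x_hat * mean(g * x_hat)), dW = sum over rows of dY * x_hat). The function name and its `split_size` argument are hypothetical stand-ins for the generated kernel and its SPLIT_SIZE parameter; this shows the access pattern, not Inductor’s generated code.

```python
import math


def mix_order_rmsnorm_backward(x, w, dy, split_size, eps=1e-6):
    """Illustrative mix-order (INNER + OUTER) RMSNorm backward.

    Rows are split into chunks of `split_size` (the SPLIT_SIZE role). Each chunk
    loops over its rows, computes dX with an INNER (per-row) reduction, and
    folds the OUTER (per-column) contribution to dW into its own workspace row.
    A final, much smaller reduction over the workspace yields dW.
    """
    m, n = len(x), len(x[0])
    num_chunks = (m + split_size - 1) // split_size
    workspace = [[0.0] * n for _ in range(num_chunks)]  # one row per chunk
    dx = [None] * m
    for chunk in range(num_chunks):
        for i in range(chunk * split_size, min((chunk + 1) * split_size, m)):
            row, drow = x[i], dy[i]  # each row of X and dY is visited once
            rstd = 1.0 / math.sqrt(sum(v * v for v in row) / n + eps)
            xhat = [v * rstd for v in row]
            g = [d * wj for d, wj in zip(drow, w)]
            # INNER reduction over the contiguous dimension -> dX for this row.
            proj = sum(gj * hj for gj, hj in zip(g, xhat)) / n
            dx[i] = [rstd * (gj - hj * proj) for gj, hj in zip(g, xhat)]
            # OUTER partial: update this chunk's workspace row for dW.
            for j in range(n):
                workspace[chunk][j] += drow[j] * xhat[j]
    # Extra small reduction over [num_chunks, N] -> final dW.
    dw = [sum(ws[j] for ws in workspace) for j in range(n)]
    return dx, dw
```

dX does not depend on the split size at all; only the shape of the workspace, and therefore the balance between parallelism and the cost of the final reduction, changes with SPLIT_SIZE.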

In the Inductor codegen logic itself, we perform the following steps after recognizing the mix order reduction pattern:

- For the OUTER reduction kernel, we replace the reduction and store_reduction nodes with a new type of partial_accumulate node, which tracks the value being reduced, the kind of reduction, and so on. This transformation converts the OUTER reduction kernel into a pointwise kernel (PW1)

- Reorder the loops of the transformed pointwise kernel (PW1), leveraging existing loop-reordering work, to get PW2

- Now PW2 and the INNER reduction have the same loop order and we can fuse them

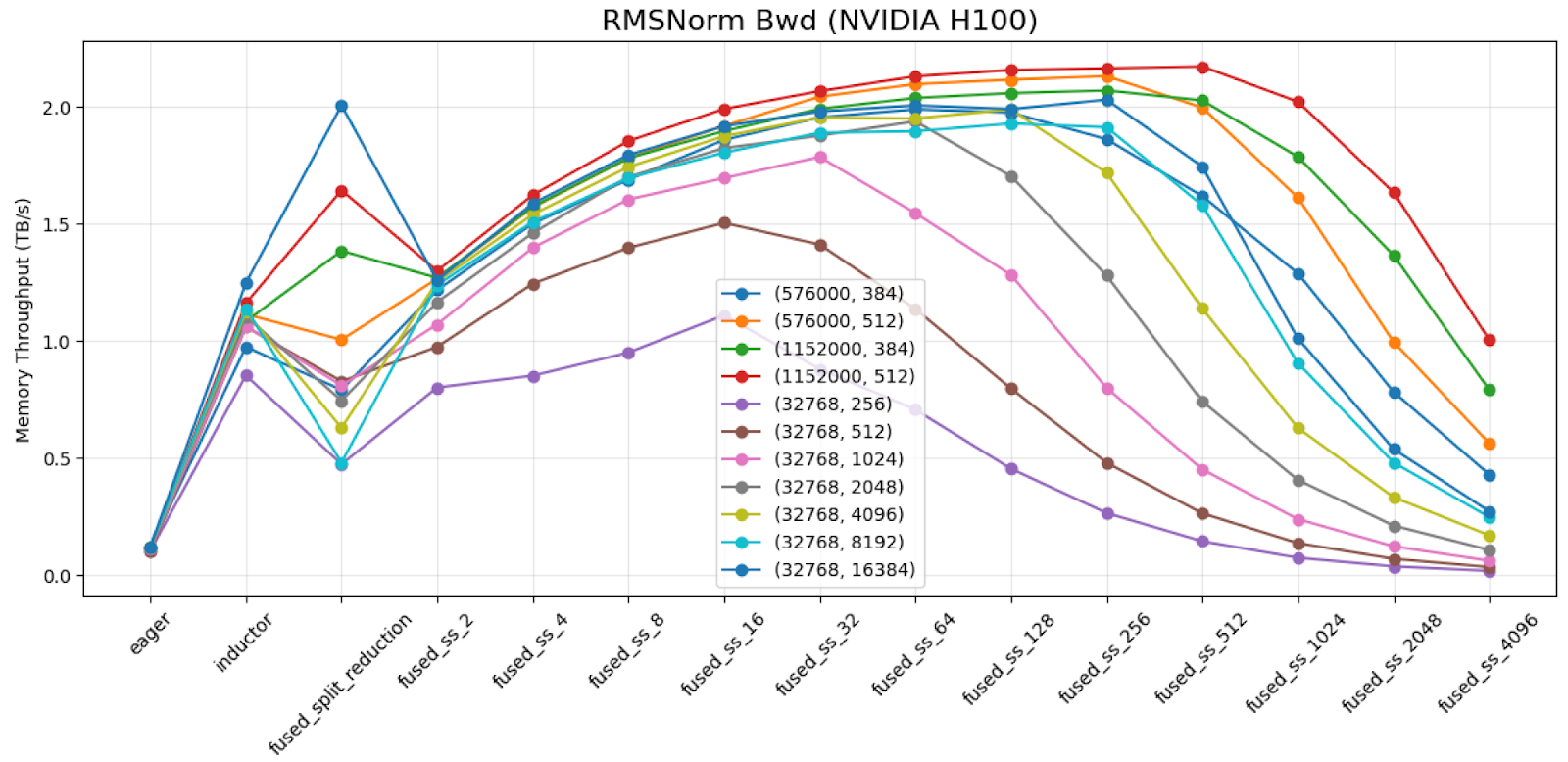

Autotuning for Split-Size

SPLIT_SIZE is critical to the performance of mix-order reduction kernels. The default performance of the Liger RMSNorm backward kernel on shape (1152000, 384) with dtype=bfloat16 is 0.417 TB/s on H100. Reducing SPLIT_SIZE by 32x raises it to 1.912 TB/s, roughly a 4.6x improvement.

We demonstrate results across the shapes we benchmark and different split sizes on H100 for torch.bfloat16 dtype.

As shown above, we can conclude that:

- An improper split-size choice can cause > 2x performance degradation

- The curve is roughly parabolic, so an autotuning strategy that keeps doubling or halving the split size until it finds a maximum should be very effective.
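The doubling/halving strategy can be sketched as a small hill-climbing search over power-of-2 split sizes. `tune_split_size` and the `benchmark` callable (returning achieved bandwidth, higher is better) are hypothetical. The search assumes the curve is unimodal, as its roughly parabolic shape suggests, and caches each measurement so no split size is benchmarked twice.

```python
def tune_split_size(benchmark, start, min_size=1, max_size=1 << 20):
    """Hill-climb over power-of-2 split sizes, assuming a unimodal curve.

    `benchmark(split_size)` is a hypothetical callable returning achieved
    bandwidth (higher is better). From `start`, keep doubling while that
    improves; then keep halving while that improves. Returns
    (best_split_size, number_of_benchmarks_run).
    """
    cache = {}

    def score(s):
        if s not in cache:
            cache[s] = benchmark(s)
        return cache[s]

    best = start
    for step in (lambda s: s * 2, lambda s: s // 2):  # try larger, then smaller
        cand = step(best)
        while min_size <= cand <= max_size and score(cand) > score(best):
            best, cand = cand, step(cand)
    return best, len(cache)
```

With a toy benchmark that peaks at 128 and a start of 1024, the search probes 2048, then walks down through 512, 256, and 128, and stops once 64 fails to improve, using six measurements in total.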

Inductor’s existing split-reduction feature may split the outer reduction for better performance. However, the split size it picks (shown as the ‘fused_split_reduction’ column in the chart) may be poor because it comes from an unrelated heuristic. We make MixOrderReduction ignore the split size picked by split reduction and use its own heuristics or autotuning mechanism to pick a better split size.

Software Pipelining

Another discovery while pushing the backward kernel toward peak bandwidth was the value of software pipelining, also known as prefetching loads. Typically, only compute-intensive workloads like GEMM and attention used pipelining, as memory-bound workloads did not seem to need it; neither Inductor’s pointwise/reduction kernels nor the Liger examples autotuned num_stages. However, we observed that the Quack kernels use some form of prefetching. We added num_stages as a general autotuning parameter for Inductor kernels and saw significant speedups for some shapes, especially large M and small N, of up to 20% when applied to MixOrderReduction:

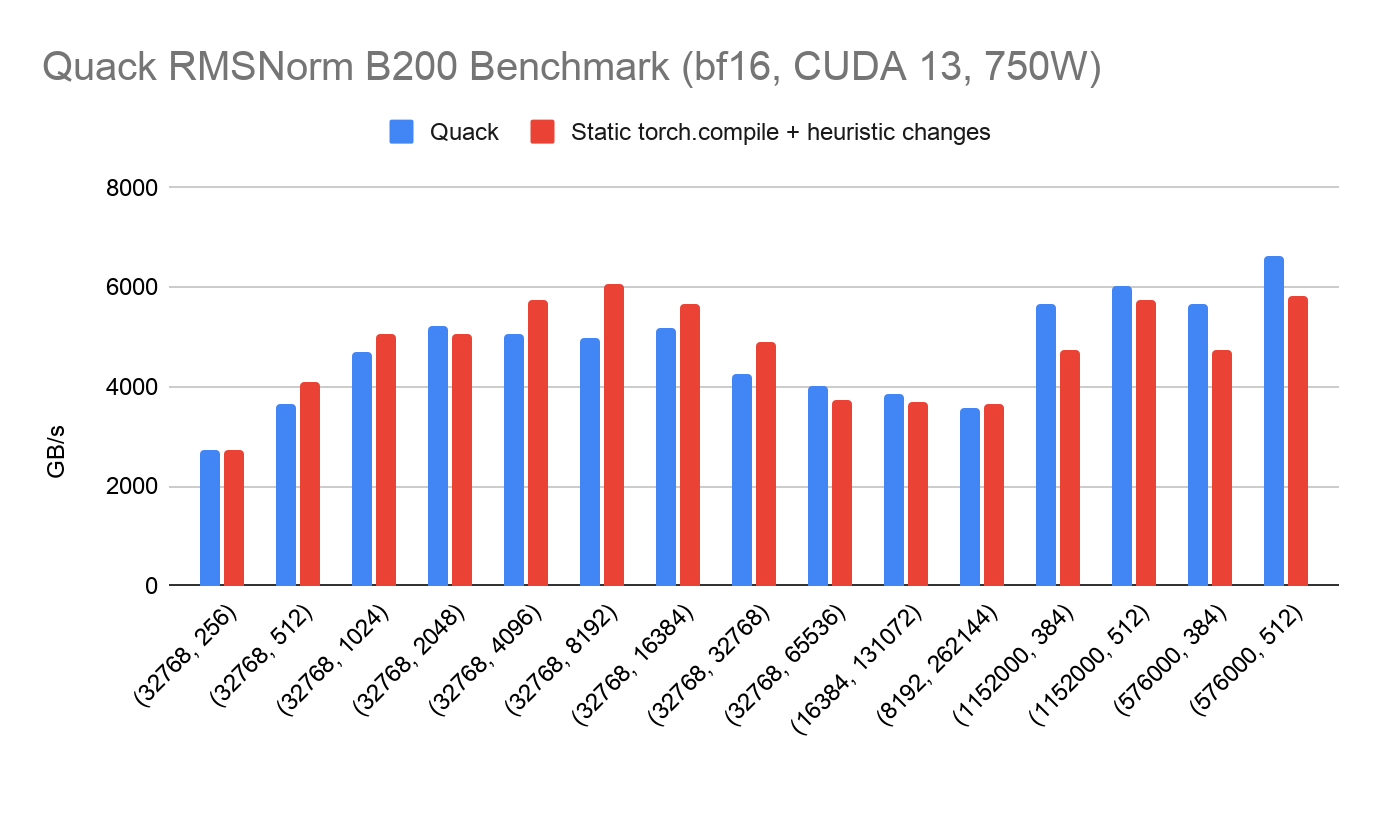

Benchmark Results

Below we present benchmark results for MixOrderReduction compared to PyTorch eager and the previous torch.compile, alongside OSS baselines such as Quack and Liger. Both benchmarks were run on a 750W B200 machine with CUDA 12.9 in late 2025.

We observe that:

- torch.compile w/ MixOrderReduction is 17.07x faster than eager, while torch.compile w/o MixOrderReduction is only 9.93x faster than eager (about 1.72x from MixOrderReduction alone).

- torch.compile w/ MixOrderReduction is 1.45x faster than Liger and 1.34x faster than Quack.

We also present benchmarking results for LayerNorm, expecting similar results to RMSNorm due to the similarity in the kernels.

We observe the same trend in the results as RMSNorm, where torch.compile w/o MixOrderReduction has a significant speedup compared to PyTorch eager. However, torch.compile w/ the new MixOrderReduction paradigm has almost a 2x speedup compared to the previous torch.compile baseline, much closer to peak memory bandwidth.

Conclusion

We improved torch.compile to generate near-SOTA forward and backward normalization kernels on H100 and B200, on par with Quack on standard shapes. On top of these optimized kernels, torch.compile can automatically fuse surrounding ops (other pointwise ops and reductions), allowing for better end-to-end performance than hand-authored kernels.

ExecuTorch Becomes a Part of PyTorch Core to Expand On-Device Inference Capabilities

7 Apr 2026, 7:10 am

Today, we’re excited to share that ExecuTorch is becoming a part of PyTorch Core. ExecuTorch extends PyTorch functionality for efficient AI inference on edge devices, from desktop/laptop to mobile phones and embedded systems.

Becoming a PyTorch Core project under the PyTorch Foundation will provide vendor‑neutral governance, clear IP, trademark, and branding, and ensure that business and ecosystem decisions are made transparently by a diverse group of members, while technical decisions remain with individual maintainers and open source contributors, ultimately strengthening ExecuTorch’s adoption within the PyTorch ecosystem.

At this moment, we want to briefly reflect on how ExecuTorch started, share why it is becoming a PyTorch Core project, and look at what’s ahead.

How ExecuTorch started

ExecuTorch began at Meta as part of our effort to make it easier to run state-of-the-art PyTorch models efficiently on edge and on-device environments—from mobile phones and AR/VR headsets and glasses to embedded devices and custom accelerators.

When we first introduced ExecuTorch publicly at PyTorch Conference 2023, it was designed around a small set of core principles:

- End-to-end developer experience: From authoring in PyTorch to deployment on-device, with a consistent, predictable workflow.

- Portability across hardware: A runtime that could target a wide variety of CPUs, GPUs, NPUs, DSPs, and other accelerators across platforms.

- Small, modular, and efficient: A lean runtime and a composable architecture suitable for constrained environments.

- Open by default: A project positioned to benefit from and contribute to the broader open-source AI ecosystem.

Since then, ExecuTorch has evolved from an internal runtime into an open platform for on-device AI. It underpins model deployment in Meta products and is increasingly being adopted by partners and the broader community as a flexible way to bring PyTorch-based models to production on edge devices.

Growth and community

ExecuTorch has quickly grown beyond its initial use cases. It is now used as a foundation for on-device inference across a variety of scenarios, including:

- Mobile and AR/VR experiences

- Generative AI and LLM-based assistants on devices

- Computer vision and sensor processing at the edge

- Low-latency, privacy-preserving applications where models run locally

While Meta has been the primary initial contributor to ExecuTorch, a growing set of companies and individual developers have started investing in the project—adding backends, operators, tooling, and integrations, as well as building their products and research efforts on top of ExecuTorch.

We see contributions and ecosystem work emerging around:

- Hardware-specific optimizations and backends

- Tooling to convert, quantize, and package PyTorch models for ExecuTorch

- Integrations with mobile, AR/VR, IoT, and robotics platforms

- Benchmarks, testing, and documentation improvements

ExecuTorch is becoming an important part of how organizations think about portable, hardware-agnostic on-device AI, and it’s clear the project is transitioning into a multi-stakeholder ecosystem. That makes this the right time to move to a broader open-source foundation.

Why Become a PyTorch Core Project?

In its early phase, ExecuTorch’s business governance was intentionally lightweight—we operated a lot like a small startup team within a larger organization. Meta helped put in the initial scaffolding: shaping the project’s roadmap, setting up basic contribution processes, aligning ExecuTorch with PyTorch’s model export and runtime stack, and engaging with early partners.

As ExecuTorch scaled, we realized that:

- Multiple companies want to invest in ExecuTorch as a neutral, shared layer in their on-device AI stack.

- Hardware vendors and platform providers need a clear and transparent way to influence direction and contribute.

- The project needs a governance structure that outlives any single organization and keeps ExecuTorch vendor-neutral and open.

Becoming a PyTorch core project under the PyTorch Foundation gives ExecuTorch:

- Vendor-neutral governance with the Foundation’s governing board and charters.

- Clear IP, trademark, and branding stewardship, independent of any single company.

- Proven open source structures for membership, working groups, and strategic initiatives.

- A natural home alongside adjacent projects in the PyTorch ecosystem.

Meta will remain a major contributor and a key community member, but no single company will control ExecuTorch’s business governance. The PyTorch Foundation’s experience hosting large, multi-stakeholder projects gives ExecuTorch the right blend of structure and flexibility for this next stage as a PyTorch core project.

Strengthening technical governance

Since its inception, ExecuTorch has operated under a community-driven open source model: maintainers and contributors working across components such as model conversion, runtimes, kernels, backends, and tooling. Responsibilities have been tied to individuals, not just their employers, and we’ve followed the spirit and many of the practices of the PyTorch ecosystem. As ExecuTorch grows, we need more explicit, transparent technical governance to scale responsibly.

The ExecuTorch Technical Governance will be as follows:

- The project will adhere to the already existing hierarchical technical governance structure of PyTorch. Core PyTorch maintainers will oversee larger cross-cutting changes while existing Module maintainers will oversee ExecuTorch specific changes. The maintainer membership will be individual and merit-based.

In the coming weeks, we will:

- Publish clear, documented technical decision-making processes, proposals, and escalation paths

- Align with familiar open-source patterns (e.g., RFC / proposal processes, release management, standards for compatibility and deprecation)

- Invest in shared CI/CD infrastructure for hardware partners to test and validate their backends

This does not fundamentally change how contributors build ExecuTorch day to day. Instead, it adds clarity, predictability, and openness, which are essential for a project that aims to be the neutral, shared runtime layer for on-device AI across the industry.

What’s next

As ExecuTorch becomes a PyTorch core project, our priorities are:

- Growing a diverse contributor and maintainer base across companies, hardware vendors, and independent developers.

- Deepening the integration with PyTorch for model export, quantization, and deployment flows.

- Expanding hardware and platform coverage so ExecuTorch can run efficiently wherever developers need it—on mobile devices, XR headsets, edge boxes, and embedded systems.

- Continuing to invest in documentation, tooling, and examples to make on-device AI development with ExecuTorch as accessible as possible.

Thank you.

PyTorch Foundation Welcomes Helion as a Foundation-Hosted Project to Standardize Open, Portable, and Accessible AI Kernel Authoring

7 Apr 2026, 7:00 am

Helion joins community of leading open source AI projects to simplify kernel development across the open AI ecosystem

PARIS – April 7, 2026 – The PyTorch Foundation, a community-driven hub for open source AI under the Linux Foundation, today announced that it has welcomed Helion as its newest foundation-hosted project alongside DeepSpeed, PyTorch, Ray, and vLLM. This contribution by Meta addresses a critical layer of the AI stack, making kernel authoring a first-class part of PyTorch by strengthening custom kernel creation and reducing manual coding effort through autotuning.

Helion joins the Foundation as AI model development expands from training to an inference boom, elevating the importance of serving models at scale. In this landscape, in which hardware, software, and model architectures are shifting simultaneously, engineering teams face significant hurdles in cross-platform compatibility. Helion eliminates bottlenecks associated with model architectures and execution, providing developers with radically simpler kernels, automated ahead-of-time autotuning, and greater hardware performance portability.

“Helion joining the PyTorch Foundation as its newest project reflects where the open AI ecosystem needs to go next: higher-level performance portability for kernel authors,” said Matt White, Global CTO of AI at the Linux Foundation and CTO of the PyTorch Foundation. “Helion gives engineers a much more productive path to writing high-performance kernels, including autotuning across hundreds of candidate implementations for a single kernel. As part of the PyTorch Foundation community, this project strengthens the foundation for an open AI stack that is more portable and significantly easier for the community to build on.”

Helion is a Python-embedded domain-specific language (DSL) for authoring machine learning kernels, designed to compile down to multiple backends for hardware heterogeneity (Triton, TileIR, and more coming soon). Helion aims to raise the level of abstraction compared to kernel languages, making it easier to write efficient kernels while enabling more automation in the autotuning process.

In addition to Helion joining the Foundation, ExecuTorch is becoming part of PyTorch Core. Started at Meta, ExecuTorch continues to extend PyTorch model functionality to edge and on-device environments under the Foundation, ensuring that ecosystem and technical decisions are made in an open, community-guided manner.

Developers and contributors interested in participating in the PyTorch project ecosystem are encouraged to join the community onsite at upcoming events like PyTorch Conference China (Shanghai, September 8-9) and PyTorch Conference North America (San Jose, October 20-21).

Supporting Quotes

“Helion brings kernel authoring into PyTorch – making it simpler, portable, and accessible to every developer. Joining the PyTorch Foundation opens Helion to the broader hardware ecosystem, so developers write one kernel and it runs fast everywhere.”

– Jana van Greunen, Director of PyTorch Engineering, Meta

“By bringing Helion into the PyTorch Foundation community, we are meeting the technical frontier of AI head on. The project provides a vital layer of abstraction that makes it easier for developers to target different architectures and accelerate AI adoption. This addition is integral to shaping and fueling production-grade AI across industries.”

– Mark Collier, Executive Director, PyTorch Foundation.

###

About the PyTorch Foundation

The PyTorch Foundation is a community-driven hub supporting the open source PyTorch framework and a broader portfolio of innovative open source AI projects, including DeepSpeed, Helion, PyTorch, Ray, and vLLM. Hosted by the Linux Foundation, the PyTorch Foundation provides a vendor-neutral, trusted home for collaboration across the AI lifecycle—from model training and inference, to domain-specific applications. Through open governance, strategic support, and a global contributor community, the PyTorch Foundation empowers developers, researchers, and enterprises to build and deploy AI at scale. Learn more at https://pytorch.org/foundation.

About the Linux Foundation

The Linux Foundation is the world’s leading home for collaboration on open source software, hardware, standards, and data. Linux Foundation projects are critical to the world’s infrastructure, including Linux, Kubernetes, LF Decentralized Trust, Node.js, ONAP, OpenChain, OpenSSF, PyTorch, RISC-V, SPDX, Zephyr, and more. The Linux Foundation focuses on leveraging best practices and addressing the needs of contributors, users, and solution providers to create sustainable models for open collaboration. For more information, please visit us at linuxfoundation.org.

The Linux Foundation has registered trademarks and uses trademarks. For a list of trademarks of The Linux Foundation, please see its trademark usage page: www.linuxfoundation.org/trademark-usage. Linux is a registered trademark of Linus Torvalds.

Media Contact

Grace Lucier

The Linux Foundation

pr@linuxfoundation.org

Generating State-of-the-Art GEMMs with TorchInductor’s CuteDSL backend

7 Apr 2026, 7:00 am

Introduction

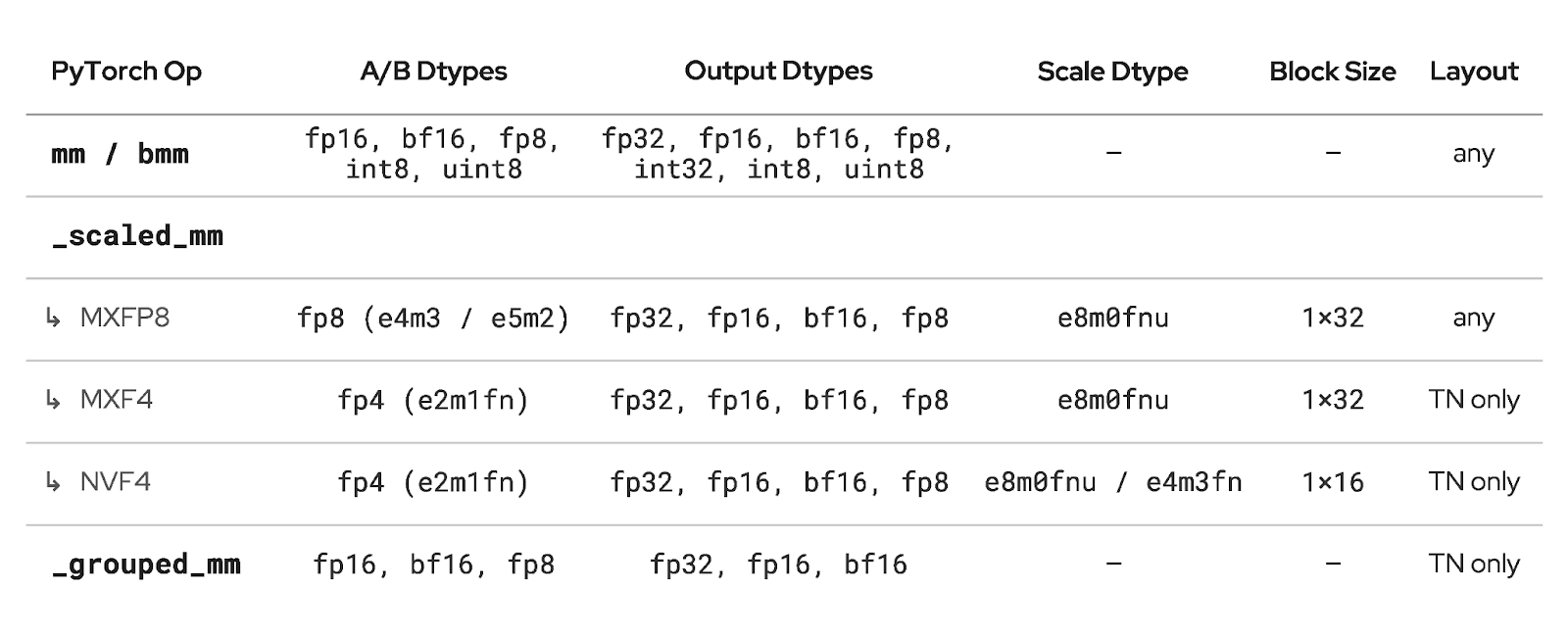

TorchInductor currently supports three autotuning backends for matrix multiplications: Triton, CUTLASS (C++), and cuBLAS. This post describes the integration of CuteDSL as a fourth backend, the technical motivation for the work, and the performance results observed so far.

The kernel-writing DSL space has gained significant momentum, with Triton, Helion, Gluon, CuTile, and CuteDSL each occupying a different point in the abstraction-performance tradeoff. When evaluating whether to integrate a new backend into TorchInductor, we apply three criteria: (1) the integration does not impose a large maintenance burden on our team, or there is a long-term committed effort from the vendor; (2) it does not regress compile time or benchmarking time relative to existing backends; and (3) it delivers better performance on target workloads.

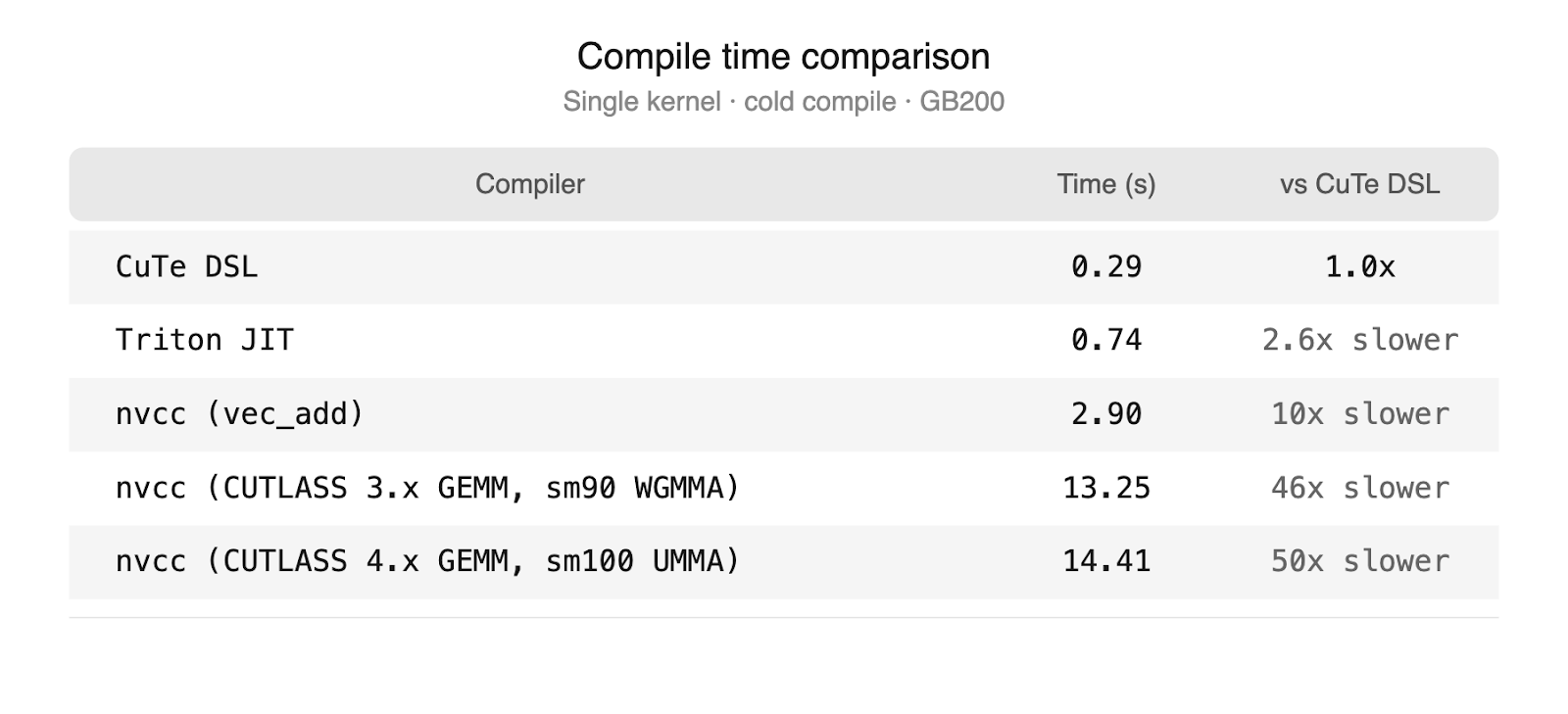

CuteDSL satisfies all three. NVIDIA is actively developing CuteDSL and provides optimized kernel templates, which limits the maintenance burden on TorchInductor. Compile times are at parity with our other backends, a significant improvement over the CUTLASS C++ path which requires full nvcc invocations.

Beyond these immediate benefits, CuteDSL represents a longer-term strategic investment. It is built on the same abstractions as CUTLASS C++, which has demonstrated strong performance on FP8 GEMMs and epilogue fusion, but it is written in Python, has faster compile times, and is less complex to maintain. As NVIDIA continues to invest in CuteDSL performance, CuteDSL is positioned to serve as an eventual replacement for the CUTLASS C++ integration on newer hardware generations, simplifying the TorchInductor codebase. The combination of aligned incentives, growing open-source adoption (Tri Dao’s Quack library, Jay Shah at Colfax International), and a lower-level programming model that exposes the full thread and memory hierarchy makes CuteDSL a well-positioned backend for delivering optimal GEMM performance on current and future NVIDIA hardware.

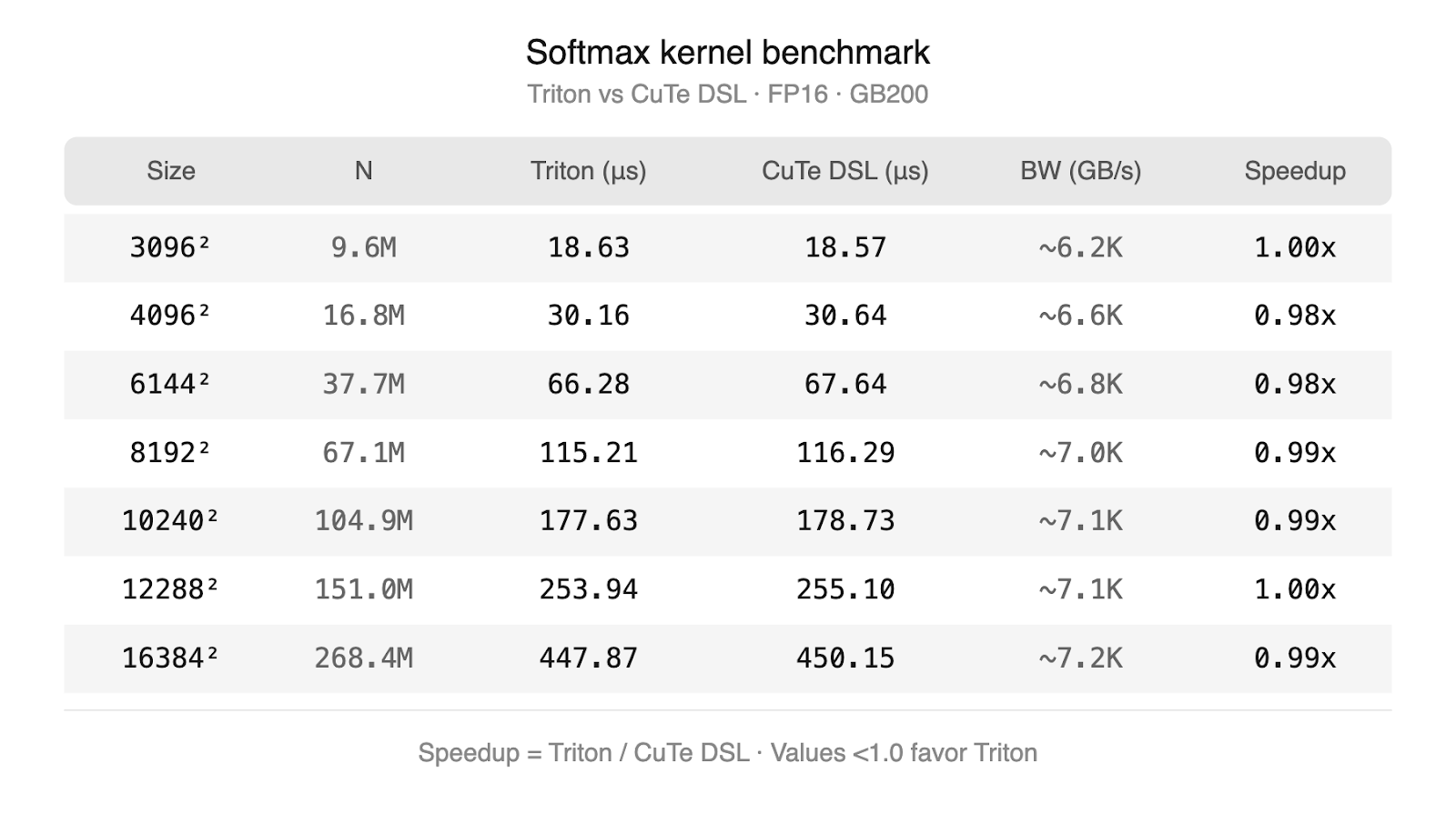

Strategy: Why We Target GEMMs

Not all operations benefit equally from a new backend. For memory-bound operations (elementwise math, activations, and reductions), Triton already generates high-quality code. Its block-level programming model is well suited to these workloads, which only require vectorized memory accesses, and the performance gap between Triton and hand-written kernels is small. CuteDSL can express pointwise operations and reductions, but because it is low-level, automatically generating CuteDSL kernels from scratch is complex. In practice the two DSLs produce kernels that perform comparably on these workloads, so the extra complexity would provide no benefit. Our own experiments validate this: we ran Triton and CuteDSL softmax kernels on progressively larger input sizes, and both approach the memory-bandwidth limit on GB200.

GEMMs are a different story. Matrix multiplications dominate the compute profile of transformer-based models: in a typical LLM forward pass, GEMMs in the attention projections, FFN layers, and output head account for the majority of GPU cycles. Achieving near-peak utilization on these operations requires precise control over the hardware features that each new GPU generation introduces — tile sizes tuned to the tensor core pipeline, explicit management of shared memory staging, warp-level scheduling, and on newer architectures like B200, thread block clusters and distributed shared memory. These are exactly the concerns that higher-level languages abstract away for ease of use. To simplify generating the low-level code, we avoid generating the kernel from scratch by starting with hand-optimized templates which expose the tunable parameters needed for adapting performance to different problem shapes.

The existing CUTLASS C++ backend addresses this by providing low-level control, but the C++ compilation overhead creates practical limitations: each kernel variant requires a full nvcc invocation, making it expensive to evaluate many candidates during autotuning and impractical to benchmark epilogue fusion decisions at scheduling time.

CuteDSL resolves this issue via a custom Python-to-MLIR compiler. The DSL itself is built on the same abstractions as CUTLASS C++ (the same tile algebra, memory hierarchy primitives, and epilogue fusion model) but compiles at speeds comparable to TorchInductor’s other backends. This combination makes it possible to apply the full autotuning and benchmark fusion pipeline that TorchInductor uses for other backends to GEMM kernels that have CUTLASS-level hardware control. The specific properties that enable this are:

Full thread and memory hierarchy exposure. CuteDSL provides primitives for synchronization, warp-level control, thread block clusters, and the complete thread/memory hierarchy. This enables use of architecture-specific features such as distributed shared memory on H100 and B200.

Compile time improvements. The CUTLASS C++ path requires a full nvcc invocation for each kernel variant. This overhead makes benchmark fusion — where the compiler evaluates multiple GEMM candidates with different epilogue fusions during scheduling — impractical. CuteDSL compiles at speeds comparable to our other backends, removing this constraint and enabling new autotuning strategies.

NVIDIA Optimized GEMM templates. A dedicated team at NVIDIA is actively developing CuteDSL, providing optimized kernel templates for GEMMs and epilogue fusion, and working toward performance parity with the CUTLASS C++ backend. For future generations of hardware, CuteDSL will have an early advantage for hardware-specific optimizations with access to the newest hardware sooner.

In short: Triton handles pointwise well, so our focus for the CuteDSL backend is where the most performance is left on the table — GEMMs, attention, and epilogue fusions on the latest hardware.

Background: How TorchInductor Generates GEMMs

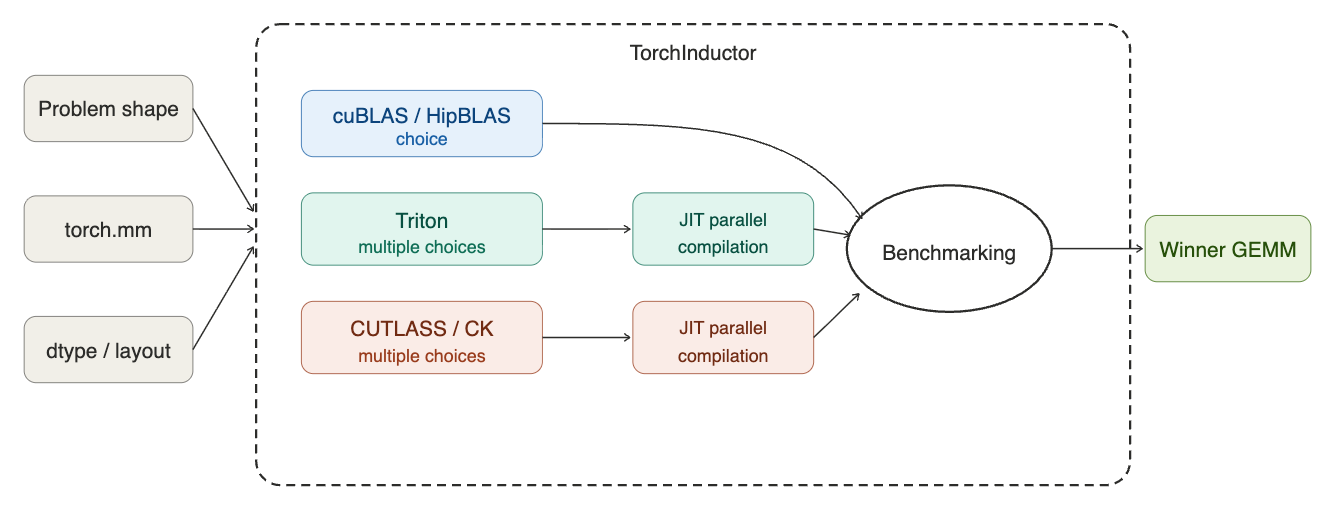

GPU architectures have become extremely complex with the rise of deep learning and AI use cases. As a result, there are many choices to make when designing a GEMM kernel, such as tile sizes, warp specialization, instruction shapes, and whether to use asynchronous memory transfers (TMA on Hopper and Blackwell). torch.compile is uniquely positioned to tackle this problem at runtime: as a JIT compiler, it can identify the problem shapes of a model and use that information to select the best-performing configuration. This technique of automatically tuning a kernel to a specific workload is called autotuning. The flow for the Triton autotuning system for TorchInductor is shown below.

TorchInductor’s GEMM autotuning pipeline operates in several stages. When the compiler encounters a matrix multiplication during lowering, it first queries each enabled backend (Triton, CUTLASS, cuBLAS) to determine whether the backend supports the given problem shape, layout, and datatype. Backends that cannot handle the configuration are filtered out at this stage.

For each eligible backend, TorchInductor generates a set of candidate kernels from the backend’s template library. These candidates vary in tile size, warp configuration, and other backend-specific parameters. All candidates are then benchmarked on the target hardware, and the fastest kernel is selected.

The selected kernel and its compiled output are written to TorchInductor’s cache, so subsequent compilations with the same problem configuration can skip benchmarking entirely. This caching operates at both the individual kernel level (compiled code) and the selection level (which candidate won for a given problem size and backend set).

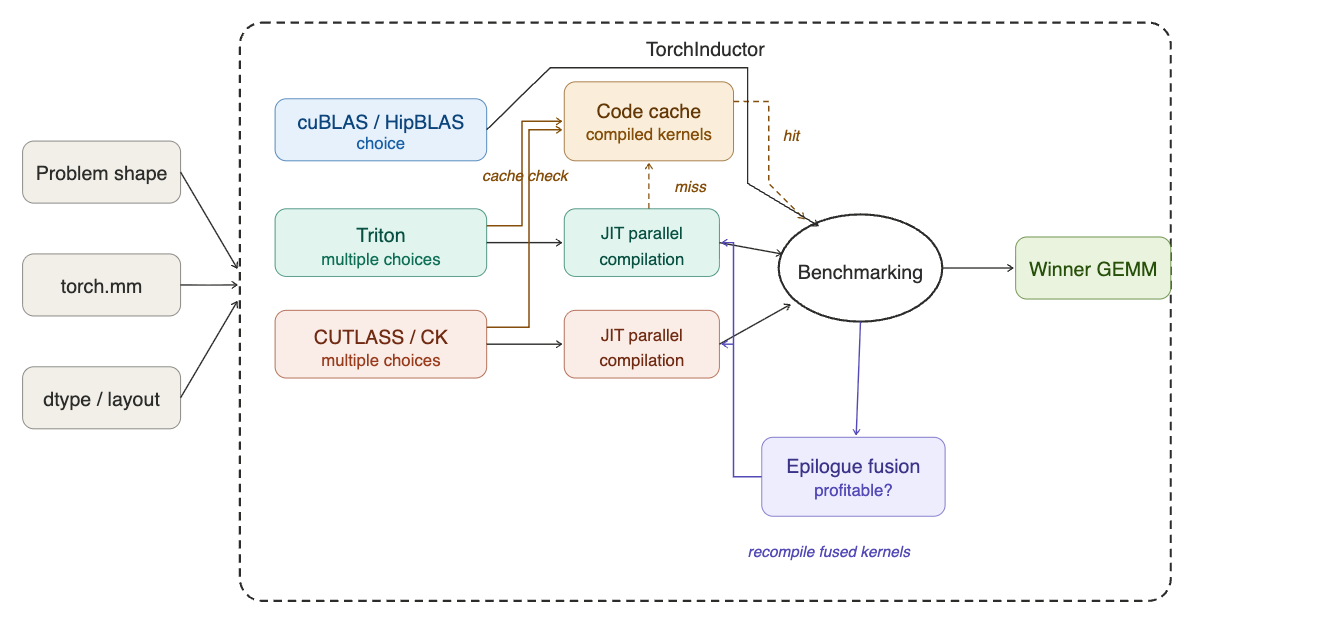

On top of this base pipeline, TorchInductor supports epilogue fusion for GEMM kernels. During scheduling, the compiler evaluates whether fusing downstream pointwise operations into the GEMM epilogue is profitable. For Triton, this is implemented via the MultiTemplate buffer: the top N GEMM candidates from lowering are carried forward, and possible fusions are benchmarked during scheduling to determine whether a fused variant outperforms the unfused GEMM followed by a separate pointwise kernel. Final kernel selection is deferred until after fusion passes complete. This full flow is shown below.
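The deferred decision can be sketched as comparing, for each of the top-N GEMM candidates, the latency of its fused variant against the best unfused pair (fastest GEMM plus a separate pointwise kernel). The function and parameter names below are hypothetical, and latencies are passed in as numbers rather than measured on hardware.

```python
from typing import Callable, List, Optional, Tuple


def choose_gemm_and_fusion(
    gemm_configs: List[str],
    gemm_time: Callable[[str], float],
    fused_time: Callable[[str], Optional[float]],
    pointwise_time: float,
    top_n: int = 3,
) -> Tuple[str, bool]:
    """Return (config, fused) with the lowest total latency.

    Unfused cost: the GEMM alone, followed by a separate pointwise kernel.
    Fused cost: one GEMM kernel with the pointwise op in its epilogue;
    fused_time returns None when a config cannot fuse the op.
    """
    # Carry only the N fastest unfused GEMM candidates into scheduling.
    finalists = sorted(gemm_configs, key=gemm_time)[:top_n]
    best_cfg, best_fused = finalists[0], False
    best_total = gemm_time(finalists[0]) + pointwise_time
    # Benchmark fused variants; keep one only if it beats the unfused pair.
    for cfg in finalists:
        t = fused_time(cfg)
        if t is not None and t < best_total:
            best_cfg, best_fused, best_total = cfg, True, t
    return best_cfg, best_fused
```

Limiting fusion benchmarking to the N finalists keeps scheduling cost bounded, at the price of occasionally missing a slower GEMM whose fused variant would have won.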

The CUTLASS C++ backend supports epilogue fusion through the Epilogue Visitor Tree (EVT), but the nvcc compile cost per variant limits the number of configurations that can be practically evaluated. This compile time constraint is one of the primary motivations for introducing CuteDSL as an alternative. Note: epilogue fusion is not supported today in the CuteDSL backend, but this work is planned (see Future Work).

Architecture of the CuteDSL Backend

The CuteDSL backend plugs into the autotuning pipeline described above. When Inductor encounters a matrix multiplication during lowering, the backend proceeds in three steps: (1) query cutlass_api for all kernel configurations compatible with the problem, (2) rank them using nvMatmulHeuristics to select the top candidates, and (3) compile and benchmark those candidates on the target hardware alongside ATen and Triton. It differs from the Triton and CUTLASS C++ paths in two key ways.

Kernel selection via cutlass_api. The Triton backend generates kernel candidates from templates maintained inside TorchInductor. The CuteDSL backend takes a different approach: it queries cutlass_api, an NVIDIA-maintained Python library that contains the full space of CuTeDSL GEMM kernel configurations — tile shapes, cluster sizes, and scheduling parameters. Inductor describes the problem (shape, dtype, layout, scaling mode, and GPU compute capability) and the API returns all compatible kernels. When NVIDIA adds new kernel configurations or hardware support, they land in cutlass_api without changes to Inductor. The API is also extensible: TorchInductor can register its own kernel classes into the same library. We used this to add FP4 GEMM support (NVFP4, MXF4) before it was available upstream — our vendored kernels go through the same filter, rank, and profile pipeline as NVIDIA’s.
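The query-and-register pattern described above can be sketched as a registry of kernel classes, each reporting which configurations it supports for a given problem description. This is not the cutlass_api interface: `Problem`, `KernelClass`, `register`, `query`, and the kernel class names are hypothetical, and the dtype/compute-capability combinations and tile shapes are arbitrary illustrations.

```python
from dataclasses import dataclass
from typing import List, Tuple, Type


@dataclass(frozen=True)
class Problem:
    m: int
    n: int
    k: int
    dtype: str               # e.g. "bf16", "mxfp8", "nvfp4"
    layout: str              # e.g. "TN"
    scaling: str             # e.g. "none", "blockwise"
    compute_capability: int  # e.g. 90 for sm_90, 100 for sm_100


class KernelClass:
    dtypes: Tuple[str, ...] = ()
    min_cc: int = 0
    tile_shapes: Tuple[Tuple[int, int], ...] = ()

    @classmethod
    def configs_for(cls, p: Problem) -> List[str]:
        # Return nothing if this kernel family cannot run the problem.
        if p.dtype not in cls.dtypes or p.compute_capability < cls.min_cc:
            return []
        return [f"{cls.__name__}/{tm}x{tn}" for tm, tn in cls.tile_shapes]


_REGISTRY: List[Type[KernelClass]] = []


def register(cls: Type[KernelClass]) -> Type[KernelClass]:
    _REGISTRY.append(cls)
    return cls


def query(p: Problem) -> List[str]:
    """Collect every compatible configuration from all registered classes."""
    return [cfg for cls in _REGISTRY for cfg in cls.configs_for(p)]


# Stand-ins for kernels shipped by the library itself.
@register
class LibraryDenseGemm(KernelClass):
    dtypes = ("bf16",)
    min_cc = 90
    tile_shapes = ((128, 128), (128, 256))


@register
class LibraryMxfp8Gemm(KernelClass):
    dtypes = ("mxfp8",)
    min_cc = 100
    tile_shapes = ((128, 128),)


# Stand-in for a kernel class registered by the caller, in the spirit of
# Inductor adding FP4 kernels before they were available upstream.
@register
class CallerFp4Gemm(KernelClass):
    dtypes = ("nvfp4",)
    min_cc = 100
    tile_shapes = ((128, 128),)
```

Because caller-registered classes answer the same `configs_for` query as library ones, they flow through the same downstream ranking and profiling with no special casing.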

Heuristic-guided search space reduction. Querying cutlass_api for a given problem can return hundreds of compatible kernel configurations, and benchmarking all of them would be prohibitively expensive. To address this, the CuteDSL backend integrates nvMatmulHeuristics, an NVIDIA analytical performance model that scores each configuration by estimated hardware throughput, accounting for tile efficiency, memory bandwidth, and occupancy. This narrows hundreds of candidates to a handful (5 by default, configurable via nvgemm_max_profiling_configs). Only those top-ranked configurations are compiled and benchmarked on the target hardware. Neither Triton nor CUTLASS uses an analytical model in this way; both rely on benchmarking over a smaller, template-defined search space.
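A minimal sketch of the rank-then-profile step follows. The scoring function is a deliberately crude, hypothetical model (padding waste times wave quantization), not nvMatmulHeuristics, and the 148-SM default is an assumption. `max_configs` plays the role the post describes for `nvgemm_max_profiling_configs`, including `None` meaning "profile everything".

```python
import heapq
import math
from typing import Callable, List, Optional, Tuple

Tile = Tuple[int, int]  # (tile_m, tile_n)


def toy_score(m: int, n: int, tile: Tile, num_sms: int = 148) -> float:
    """Hypothetical stand-in for an analytical model (NOT nvMatmulHeuristics).

    Multiplies two efficiencies in [0, 1]:
      * tile efficiency: useful output area / padded output area
      * wave efficiency: share of SM slots busy across all waves
    num_sms is an assumed SM count.
    """
    tm, tn = tile
    tiles_m, tiles_n = math.ceil(m / tm), math.ceil(n / tn)
    tile_eff = (m * n) / (tiles_m * tm * tiles_n * tn)
    tiles = tiles_m * tiles_n
    waves = math.ceil(tiles / num_sms)
    wave_eff = tiles / (waves * num_sms)
    return tile_eff * wave_eff


def select_for_profiling(
    tiles: List[Tile],
    score: Callable[[Tile], float],
    max_configs: Optional[int] = 5,
) -> List[Tile]:
    """Keep the top-scoring configs; None means profile all of them."""
    if max_configs is None:
        return sorted(tiles, key=score, reverse=True)
    return heapq.nlargest(max_configs, tiles, key=score)
```

For a decode-sized problem such as M = 128, N = 4096, this toy model prefers small tiles, because large tiles leave most SMs idle in a single partial wave.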

Once autotuning selects a winning kernel, the compiled artifact is cached in memory — subsequent calls invoke the compiled function directly with no repeated compilation overhead.

Importantly, the CuteDSL backend is purely additive. If a problem is not compatible with NVGEMM — unsupported dtype, layout, or hardware — no NVGEMM candidates are generated, and the autotune proceeds with ATen and Triton as usual. If NVGEMM candidates are generated but lose the benchmark, the faster backend is selected automatically. Enabling NVGEMM cannot cause a performance regression.
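The "cannot regress" argument reduces to a property of taking a minimum: adding candidates to the pool can only keep or lower the best measured latency, and an empty NVGEMM contribution leaves the pool unchanged. The sketch below illustrates this with hypothetical function names; the property holds with respect to the measured benchmark times.

```python
from typing import Dict, Optional


def select(timings_ms: Dict[str, float]) -> str:
    """Return the candidate with the lowest measured latency."""
    return min(timings_ms, key=timings_ms.__getitem__)


def select_with_optional(
    base: Dict[str, float], extra: Optional[Dict[str, float]]
) -> str:
    # An unsupported problem yields no extra candidates (None or empty),
    # so the pool, and therefore the winner, is exactly the baseline.
    pool = {**base, **(extra or {})}
    return select(pool)
```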

Results

All benchmarks were run on a single NVIDIA B200 GPU at 850 W with dynamic clocking (no tensor parallelism), using PyTorch nightly and CUDA 13.1.

Kernel-level results measure isolated GEMM latency via Inductor autotuning.

End-to-end results measure vLLM V1 decode latency on Llama 3.1 8B, Qwen3 32B, and Llama 3.3 70B with a 32-token input prompt and 128-token generation, serial execution, and a clean cache between runs.

Kernel-Level Speedups

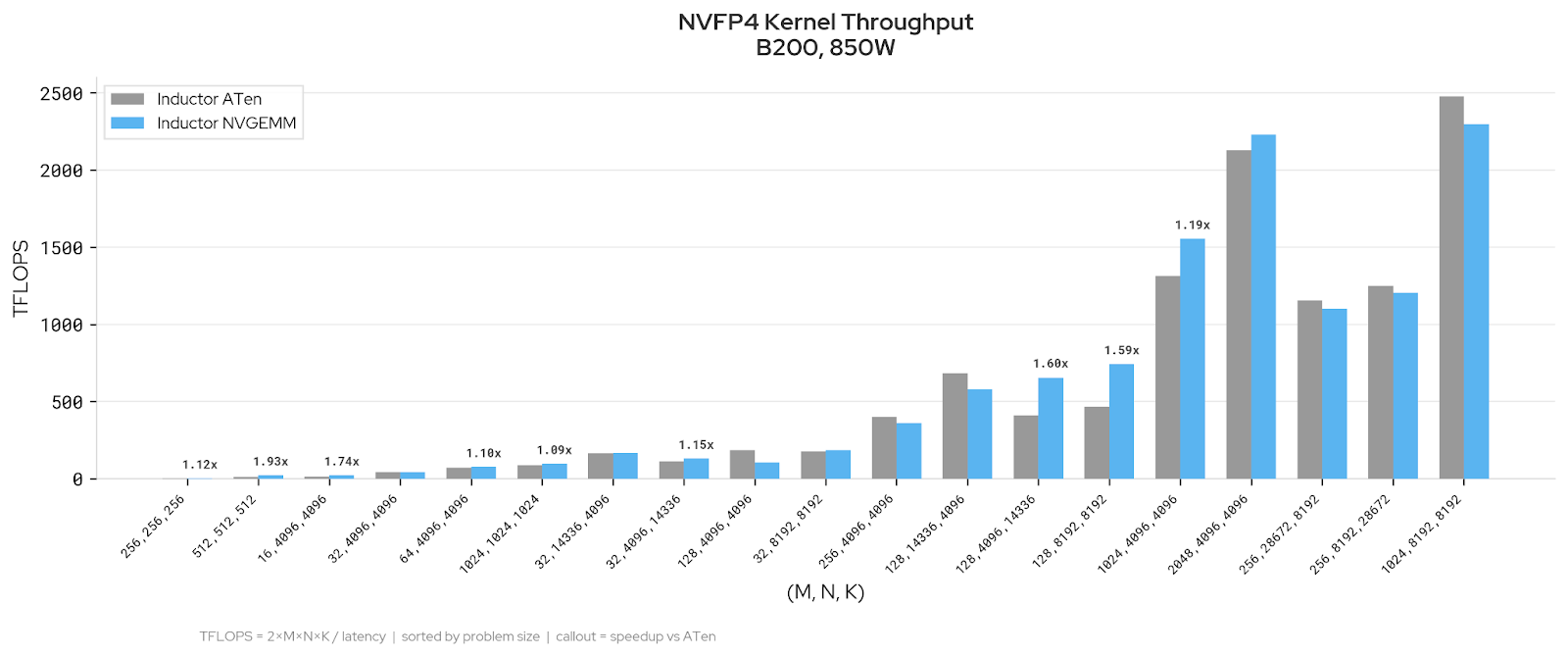

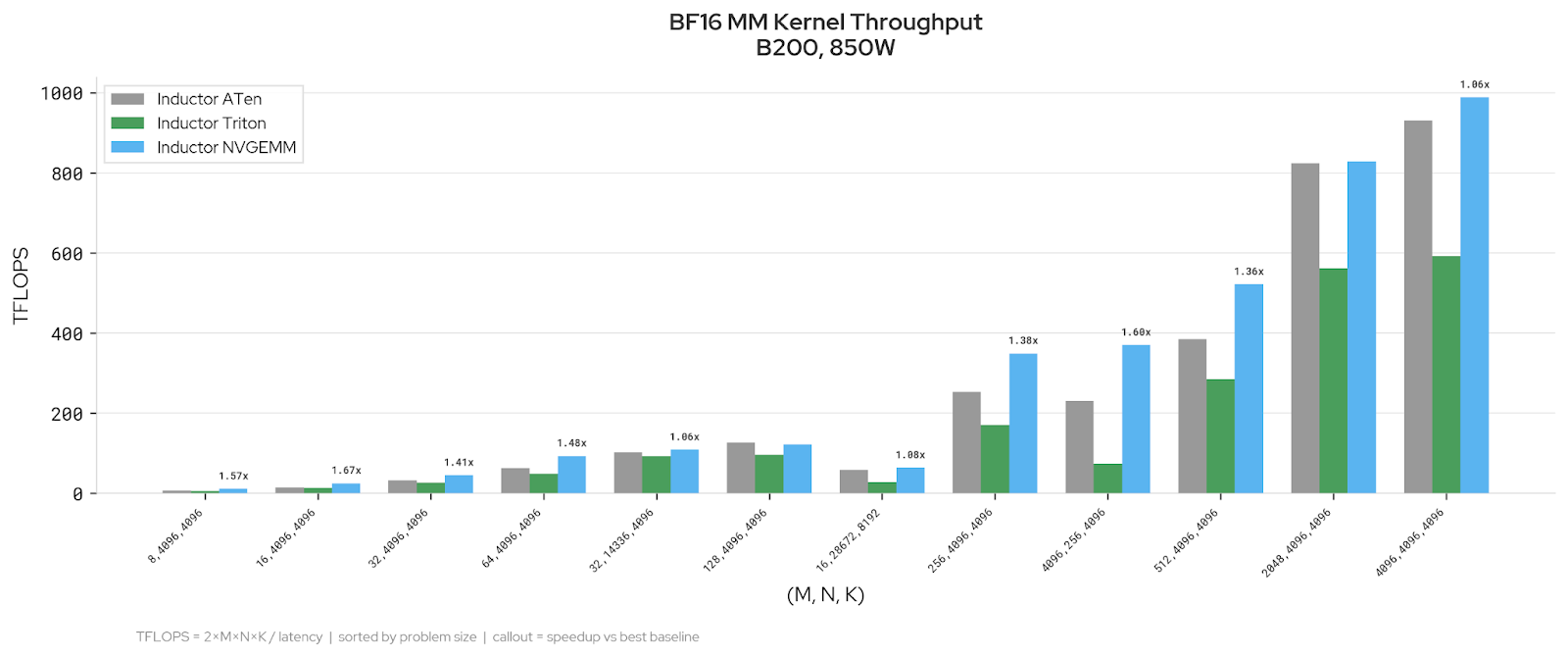

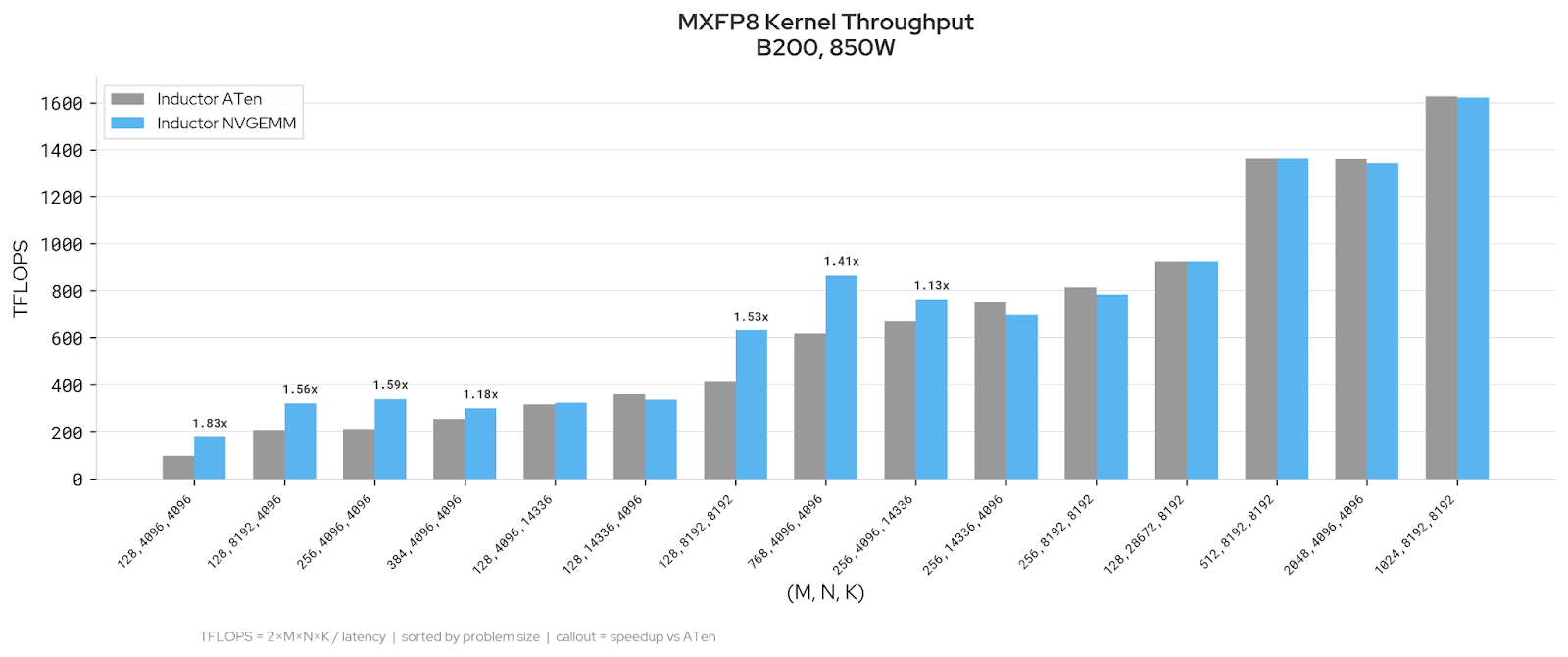

We evaluated Inductor NVGEMM against existing Inductor backends across three dtype regimes on LLM-relevant GEMM shapes. The charts show kernel throughput in TFLOPS; callouts indicate the speedup over the faster existing backend.

BF16: NVGEMM improves decode-regime shapes (M = 8 to M = 64, up to 1.73x) and tall-skinny shapes like (4096, 256, 4096) at 1.54x. Prefill-sized shapes are at parity.

MXFP8: NVGEMM improves throughput on medium shapes (up to 1.78x) and is at parity on large shapes. Wide-N rectangular shapes favor ATen.

NVFP4: NVGEMM improves throughput on decode-sized shapes (M ≤ 256), with speedups up to 1.6x over the faster existing backend. At larger M (≥ 512), ATen is well-tuned and the backends converge.
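For reference, throughput and speedup relate to measured latency through the standard GEMM FLOP count: multiplying an M×K matrix by a K×N matrix takes M·N·K multiply-adds, or 2·M·N·K floating-point operations. A small helper, with hypothetical function names:

```python
from typing import Sequence


def gemm_tflops(m: int, n: int, k: int, seconds: float) -> float:
    """Throughput of an (M x K) @ (K x N) GEMM that ran in `seconds`."""
    flops = 2 * m * n * k  # one multiply and one add per (i, j, k) triple
    return flops / seconds / 1e12


def speedup_over_best(nvgemm_tflops: float, existing_tflops: Sequence[float]) -> float:
    """Speedup relative to the faster of the existing backends."""
    return nvgemm_tflops / max(existing_tflops)
```

For example, a 4096 × 4096 × 4096 GEMM that completes in 1 ms runs at about 137.4 TFLOPS. Because the FLOP count is fixed for a given shape, a TFLOPS ratio equals the inverse ratio of latencies.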

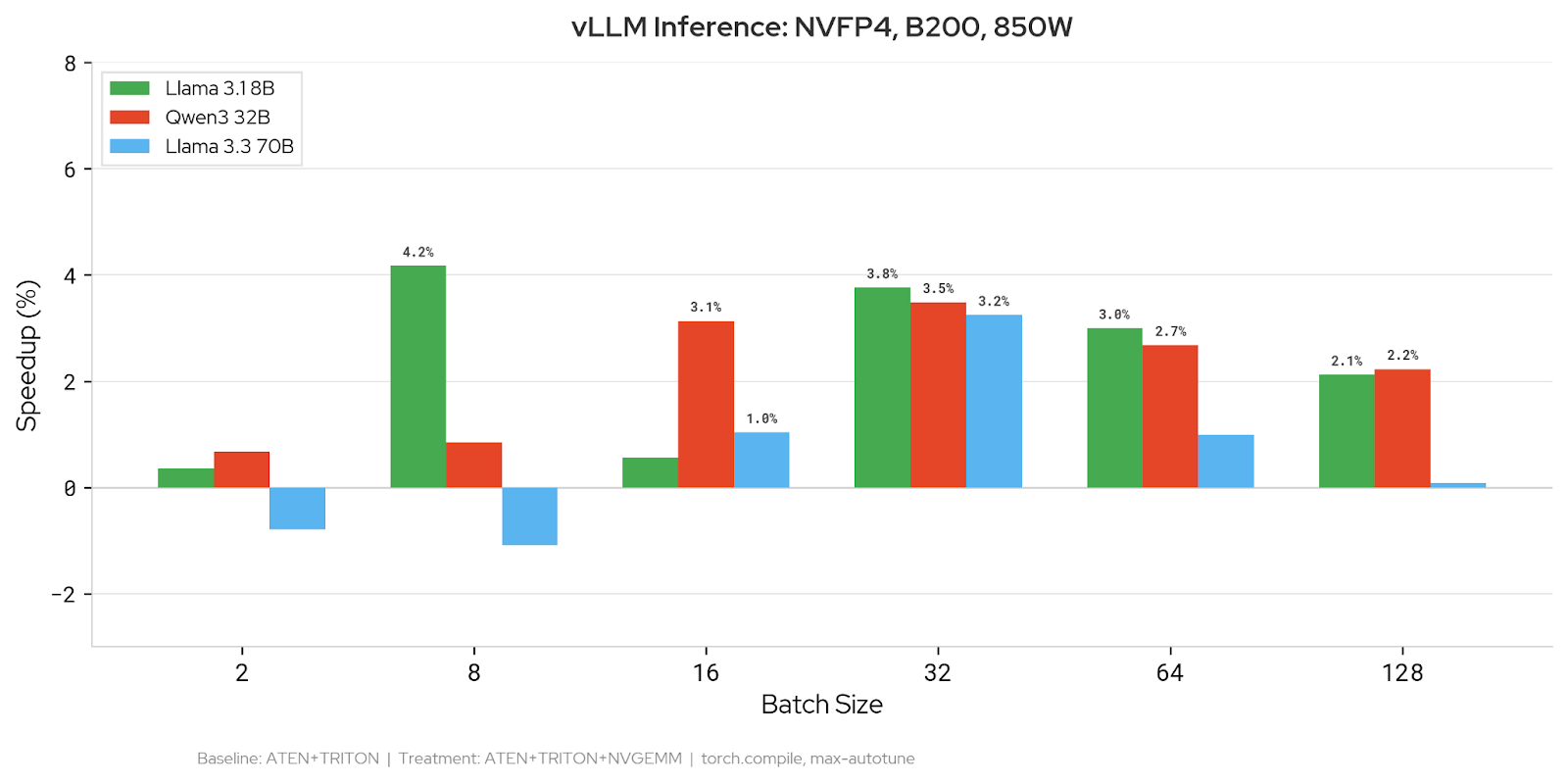

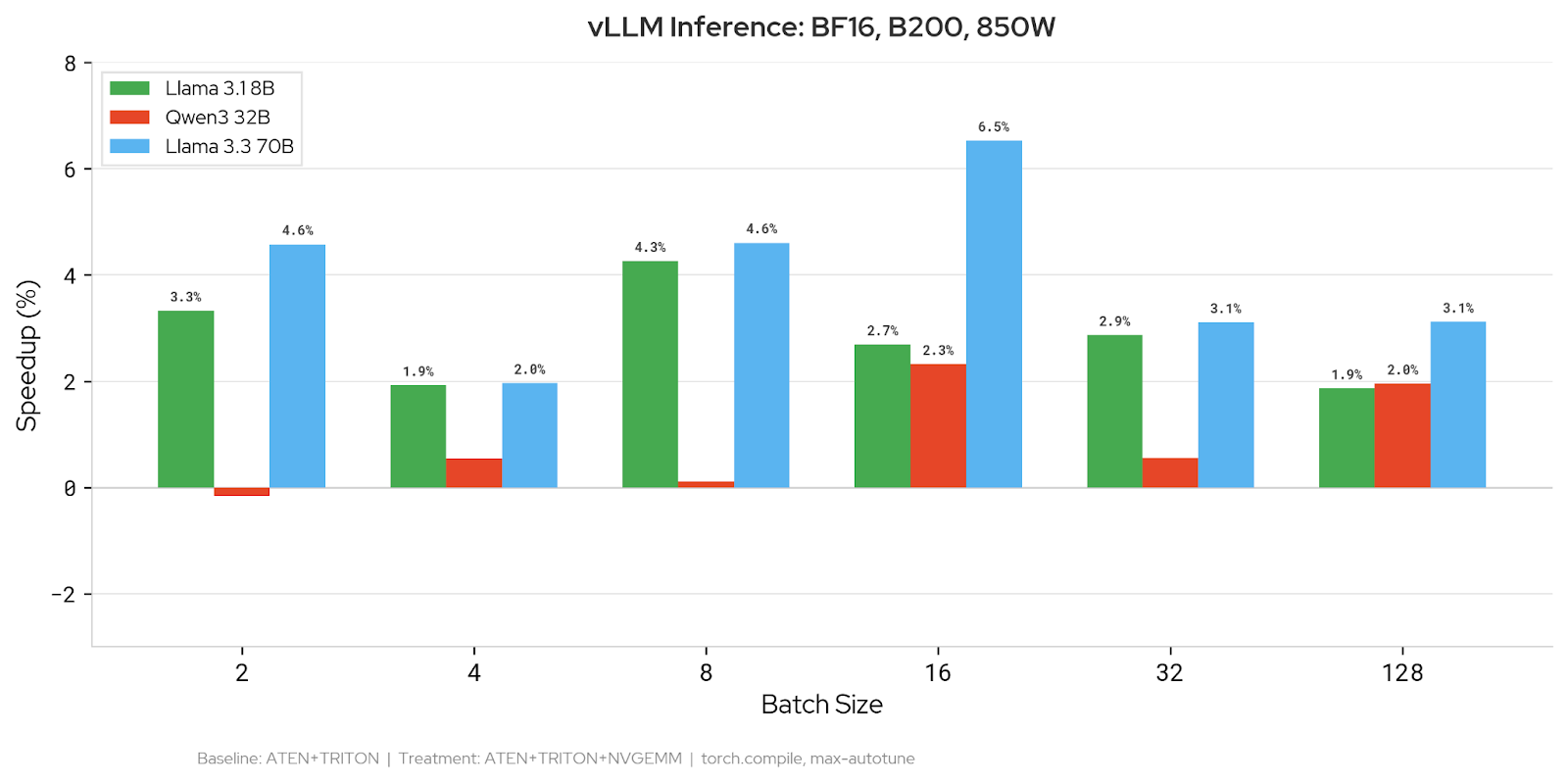

End-to-End vLLM Inference

We measured inference latency on three models across batch sizes 2–128 using vLLM’s V1 model runner. Because vLLM uses dynamic shapes for the batch dimension, Inductor does not know the actual batch size at compile time. We use an autotune_batch_hint to specify the target batch size so that Inductor benchmarks kernel candidates at the shape that will be used at runtime — this is important because optimal kernel configurations are shape-dependent.

BF16: Adding NVGEMM reduces latency on 90% of configurations (19/21 data points). The largest improvement is 6.5% on Llama 3.3 70B at batch size 16. Llama 3.1 8B sees consistent 2–4% gains across batch sizes. Qwen3 32B shows more modest improvements of 0.5–2.4%.

NVFP4: 89% win rate (16/18 data points). Llama 3.1 8B improves by up to 4.2%, Qwen3 32B by up to 3.5%, and Llama 3.3 70B by up to 3.3%. Gains are most consistent at batch sizes 16–64.

CuteDSL Backend Supported Features

How You Can Try It

Installation

The CuteDSL backend requires the cutlass_api library, which is currently installed from a specific branch of the CUTLASS repository. cutlass_api is expected to merge into the main CUTLASS branch in a future release, at which point this separate installation step will no longer be necessary.

```bash
# Install CuTeDSL and the matmul heuristics library
pip install nvidia-cutlass-dsl==4.3.5
pip install nvidia-matmul-heuristics

# Clone and install cutlass_api from the cutlass_api branch
git clone --branch cutlass_api https://github.com/NVIDIA/cutlass.git
cd cutlass/python/cutlass_api
pip install -e ".[torch]"
```

You will also need:

- PyTorch 2.11+ (core NVGEMM support for mm, bmm, scaled_mm, and grouped_mm). FP4 kernel support (NVFP4, MXF4) and various performance optimizations require PyTorch nightly.

Note: cutlass_api currently requires CuTeDSL version 4.3.5 or earlier.

Usage

Once installed, enable the backend by adding NVGEMM to the list of Inductor autotuning backends. Here is a minimal runnable example:

```python
import torch
import torch._inductor.config as config

config.max_autotune_gemm_backends = "ATEN,TRITON,NVGEMM"

A = torch.randn(128, 4096, device="cuda", dtype=torch.bfloat16)
B = torch.randn(4096, 4096, device="cuda", dtype=torch.bfloat16)

@torch.compile(mode="max-autotune-no-cudagraphs")
def f(a, b):
    return a @ b

out = f(A, B)  # first call triggers autotuning
```

When Inductor encounters a GEMM during compilation, it will evaluate NVGEMM kernel candidates alongside ATen and Triton and select the fastest. Operations that NVGEMM does not support automatically fall back to other backends.

The same configuration can be set via an environment variable:

```bash
TORCHINDUCTOR_MAX_AUTOTUNE_GEMM_BACKENDS="ATEN,TRITON,NVGEMM" python my_script.py
```

To control how many kernel configurations are profiled per GEMM:

```python
config.nvgemm_max_profiling_configs = 10  # default is 5; set to None for all
```

Future Work

The following items represent the planned development roadmap for the CuteDSL backend.

Benchmark epilogue fusion. With CuteDSL compile times no longer a bottleneck, TorchInductor can benchmark epilogue fusion decisions for GEMM kernels. This matters because replacing cuBLAS for an individual GEMM is not always profitable; epilogue fusion offers a way to consistently outperform cuBLAS, which cannot fuse Inductor’s downstream pointwise operations into its kernels. This work involves deferring final kernel selection until after fusion passes complete, evaluating fused and unfused variants across backends, and selecting the globally optimal configuration. cutlass_api already provides epilogue-fusion-capable (EFC) kernels supporting auxiliary tensor loads/stores, elementwise operations (addition, multiplication, subtraction, division), and activations (relu, sigmoid, tanh). The remaining work is on the Inductor side: mapping Inductor’s fusion decisions to the EFC kernel interface and integrating them into the scheduling pipeline. Additional epilogue operations, such as reductions and row/column broadcasts, are planned in cutlass_api.

Async parallel precompilation and persistent caching. Currently, kernel candidates are compiled sequentially inline via cute.compile(). We are adding parallel precompilation across subprocesses and persistent on-disk caching of compiled artifacts, so that warm autotuning runs can skip compilation entirely.

Exportable configuration caches. A portable, human-readable format (JSON or protobuf) for autotuned GEMM configurations, with import/export APIs for cache manipulation. This enables configuration portability across autotuning runs and environments.
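One possible shape for such a cache is sketched below. The planned format has not been published, so the schema (a version field plus one entry per (op, M, N, K, dtype) key) and the `export_cache`/`import_cache` helpers are hypothetical.

```python
import json
from typing import Dict, Tuple

# Hypothetical key: (op name, M, N, K, dtype).
Key = Tuple[str, int, int, int, str]


def export_cache(cache: Dict[Key, dict]) -> str:
    """Serialize autotuning choices to stable, human-readable JSON."""
    entries = [
        {"op": op, "m": m, "n": n, "k": k, "dtype": dtype, "choice": choice}
        for (op, m, n, k, dtype), choice in sorted(cache.items(), key=lambda kv: kv[0])
    ]
    return json.dumps({"version": 1, "entries": entries}, indent=2)


def import_cache(text: str) -> Dict[Key, dict]:
    """Parse JSON produced by export_cache back into a lookup table."""
    data = json.loads(text)
    if data.get("version") != 1:
        raise ValueError(f"unsupported cache version: {data.get('version')}")
    return {
        (e["op"], e["m"], e["n"], e["k"], e["dtype"]): e["choice"]
        for e in data["entries"]
    }
```

Sorting entries by key keeps the output deterministic, so caches exported from different runs diff cleanly.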

FlexAttention-style matmul API. A higher-order API allowing users to specify backend preferences, tile configurations, and epilogues at the matmul callsite. This would provide explicit control over autotuning behavior and interoperate with the exportable configuration cache.

Quack GEMM integration. Tri Dao’s Quack library has optimized Blackwell GEMM implementations; we will investigate how their performance compares to our current templates and integrate them if they are faster.

AOT compilation support. For inference deployments, precompiling CuteDSL kernels at export time would eliminate autotuning overhead. This depends on a precompile API planned for the CuteDSL 4.4 release and will require investigation into C++ accessibility for AOTI integration.

CUTLASS C++ backend replacement. On newer hardware generations, CuteDSL is expected to reach full parity with the C++ backend. At that point, CuteDSL would serve as a replacement, simplifying the TorchInductor codebase by consolidating the CUTLASS integration into a single Python-based path.

Conclusion

In this post, we presented the architecture of the TorchInductor CuteDSL backend, how to enable it today, and our benchmarking results. As the future work above shows, this is the first presentation of this work, and there is a lot more to do. If you run into issues, have questions, or have new ideas you’d like to see in the new backend, file an issue on GitHub and tag us!

RSVP for the 2026 PyTorch Docathon

3 Apr 2026, 5:51 pm

We’re excited to announce that the 2026 PyTorch Docathon will take place May 5-19! This is a hackathon-style event aimed at enhancing PyTorch documentation with the support of the community. Documentation is an important component of any technology, and by refining it, we can simplify the onboarding process for new users, help them effectively utilize PyTorch’s features, and ultimately speed up the transition from research to production in machine learning.

WHY PARTICIPATE

Low Barrier to Entry: Unlike many open-source projects that require deep knowledge of the codebase and previous contributions to join hackathon events, the Docathon is tailored for newcomers. While we expect participants to be familiar with Python and to have basic knowledge of PyTorch and machine learning, some tasks related to website issues don’t require even that level of expertise.

Tangible Results: A major advantage of the Docathon is witnessing the immediate impact of your contributions. Enhancing documentation significantly boosts a project’s usability and accessibility, and you’ll be able to observe these improvements directly. Seeing tangible outcomes can also be a strong motivator to continue contributing.