How AI is Helping Vets to Help our Pets

7 Sep 2021, 5:53 am

1 in 4 dogs, and 1 in 5 cats, will develop cancer at some point in their lives. Pets today have a better chance of being successfully treated than ever, thanks to advances in early recognition, diagnosis and treatment.

Using a Grapheme to Phoneme Model in Cisco’s Webex Assistant

7 Sep 2021, 5:52 am

Grapheme to Phoneme (G2P) is a function that generates pronunciations (phonemes) for words based on their written form (graphemes). It has an important role in automatic speech recognition systems, natural language processing, and text-to-speech engines. In Cisco’s Webex Assistant, we use G2P modelling to assist in resolving person names from voice. See here for further details of various techniques we use to build robust voice assistants.

How Computational Graphs are Constructed in PyTorch

31 Aug 2021, 11:26 pm

In the previous post we went over the theoretical foundations of automatic differentiation and reviewed the implementation in PyTorch. In this post, we will be showing the parts of PyTorch involved in creating the graph and executing it. In order to understand the following contents, please read @ezyang’s wonderful blog post about PyTorch internals.

Autograd components

First of all, let’s look at where the different components of autograd live:

tools/autograd: Here we can find derivatives.yaml, which holds the derivative definitions we saw in the previous post, along with several python scripts and a folder called templates. These scripts and templates are used at build time to generate the C++ code for the derivatives as specified in the yaml file. The scripts here also generate wrappers for the regular ATen functions so that the computational graph can be constructed.

torch/autograd: This folder is where the autograd components that can be used directly from python are located. In function.py we find the actual definition of torch.autograd.Function, a class used by users to write their own differentiable functions in python as per the documentation. functional.py holds components for functionally computing the jacobian vector product, hessian, and other gradient related computations of a given function. The rest of the files have additional components such as gradient checkers, anomaly detection, and the autograd profiler.

torch/csrc/autograd: This is where the graph creation and execution-related code lives. All this code is written in C++, since it is a critical part that is required to be extremely performant. Here we have several files that implement the engine, metadata storage, and all the needed components. Alongside this, we have several files whose names start with python_, and their main responsibility is to allow python objects to be used in the autograd engine.

Graph Creation

Previously, we described the creation of a computational graph. Now, we will see how PyTorch creates these graphs with references to the actual codebase.

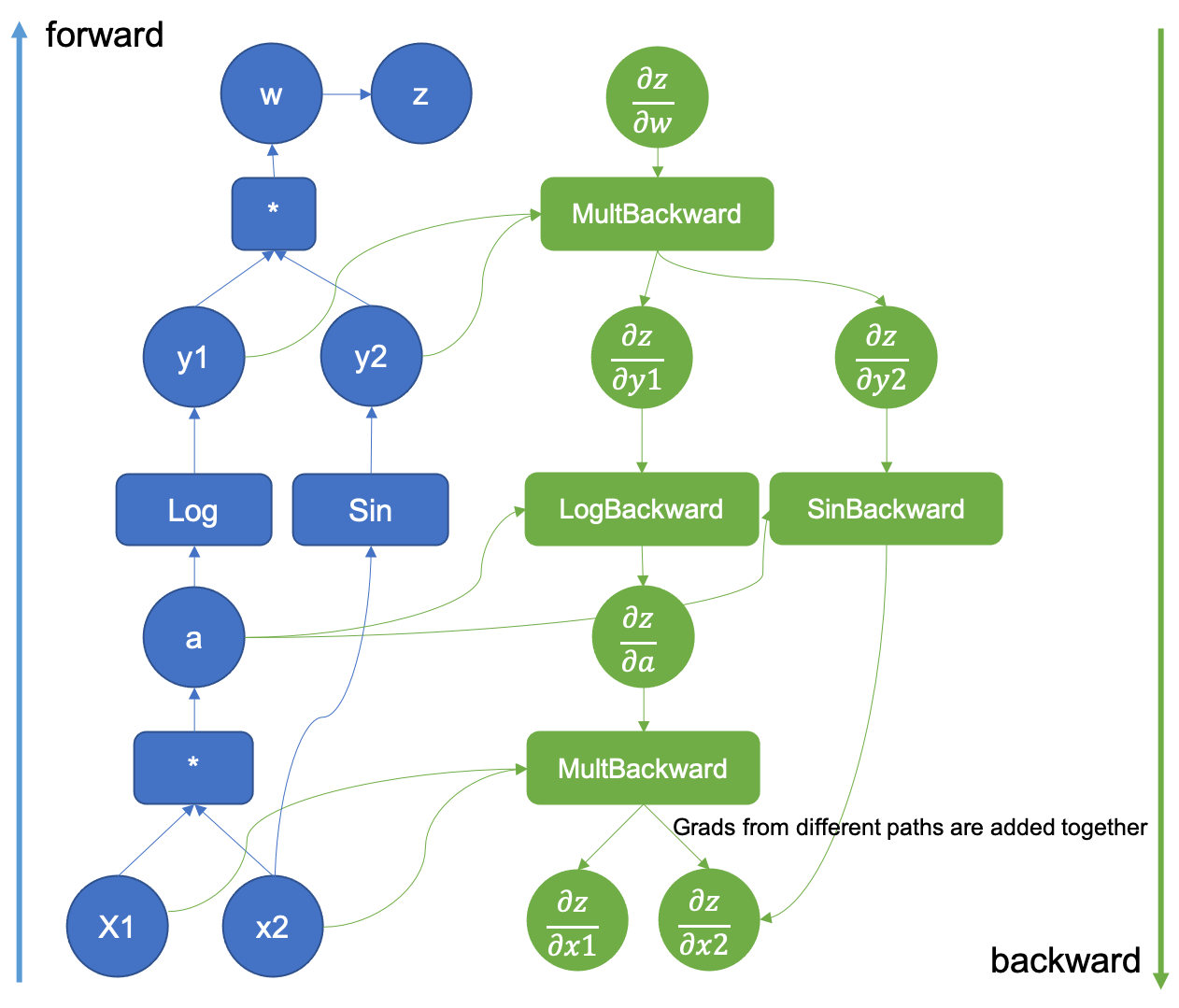

Figure 1: Example of an augmented computational graph

It all starts in our python code, where we request that a tensor require the gradient.

>>> x = torch.tensor([0.5, 0.75], requires_grad=True)

When the requires_grad flag is set in tensor creation, c10 will allocate an AutogradMeta object that is used to hold the graph information.

void TensorImpl::set_requires_grad(bool requires_grad) {

...

if (!autograd_meta_)

autograd_meta_ = impl::GetAutogradMetaFactory()->make();

autograd_meta_->set_requires_grad(requires_grad, this);

}

The AutogradMeta object is defined in torch/csrc/autograd/variable.h as follows:

struct TORCH_API AutogradMeta : public c10::AutogradMetaInterface {

std::string name_;

Variable grad_;

std::shared_ptr<Node> grad_fn_;

std::weak_ptr<Node> grad_accumulator_;

// other fields and methods

...

};

The most important fields in this structure are the computed gradient in grad_ and a pointer to the function grad_fn_ that will be called by the engine to produce the actual gradient. There is also a gradient accumulator object that is used to add together all the different gradients in which this tensor is involved, as we will see in the graph execution.
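To make the lazy allocation concrete, here is a minimal pure-Python sketch of the idea. It is not PyTorch code: `TensorSketch` and `AutogradMetaSketch` are hypothetical names, and the fields only mirror the ones listed in the C++ struct above.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AutogradMetaSketch:
    """Toy stand-in for AutogradMeta; fields mirror the C++ struct above."""
    name: str = ""
    grad: Optional[list] = None                # computed gradient (grad_)
    grad_fn: Optional[object] = None           # node that produced the tensor (grad_fn_)
    grad_accumulator: Optional[object] = None  # used for leaf tensors
    requires_grad: bool = False


class TensorSketch:
    """Toy tensor: autograd metadata is only allocated when it is needed."""

    def __init__(self, data, requires_grad=False):
        self.data = list(data)
        self.autograd_meta = None  # nothing allocated for plain tensors
        if requires_grad:
            self.set_requires_grad(True)

    def set_requires_grad(self, requires_grad):
        # Same shape as the C++ snippet: allocate lazily, then set the flag.
        if self.autograd_meta is None:
            self.autograd_meta = AutogradMetaSketch()
        self.autograd_meta.requires_grad = requires_grad

    @property
    def is_leaf(self):
        # A leaf has no grad_fn: no differentiable op produced it.
        return self.autograd_meta is None or self.autograd_meta.grad_fn is None


plain = TensorSketch([1.0, 2.0])
x = TensorSketch([0.5, 0.75], requires_grad=True)
print(plain.autograd_meta)                        # None
print(x.autograd_meta.requires_grad, x.is_leaf)   # True True
```

The point of the design is that tensors that never take part in differentiation carry no graph bookkeeping at all.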

Graphs, Nodes and Edges

Now, when we call a differentiable function that takes this tensor as an argument, the associated metadata will be populated. Let’s suppose that we call a regular torch function that is implemented in ATen. Let it be the multiplication as in our previous blog post example. The resulting tensor has a field called grad_fn that is essentially a pointer to the function that will be used to compute the gradient of that operation.

>>> x = torch.tensor([0.5, 0.75], requires_grad=True)

>>> v = x[0] * x[1]

>>> v

tensor(0.3750, grad_fn=<MulBackward0>)

Here we see that the tensor’s grad_fn has a MulBackward0 value. This function is the same one that was written in the derivatives.yaml file, and its C++ code was generated automatically by the scripts in tools/autograd. Its auto-generated source code can be seen in torch/csrc/autograd/generated/Functions.cpp.

variable_list MulBackward0::apply(variable_list&& grads) {

std::lock_guard<std::mutex> lock(mutex_);

IndexRangeGenerator gen;

auto self_ix = gen.range(1);

auto other_ix = gen.range(1);

variable_list grad_inputs(gen.size());

auto& grad = grads[0];

auto self = self_.unpack();

auto other = other_.unpack();

bool any_grad_defined = any_variable_defined(grads);

if (should_compute_output({ other_ix })) {

auto grad_result = any_grad_defined ? (mul_tensor_backward(grad, self, other_scalar_type)) : Tensor();

copy_range(grad_inputs, other_ix, grad_result);

}

if (should_compute_output({ self_ix })) {

auto grad_result = any_grad_defined ? (mul_tensor_backward(grad, other, self_scalar_type)) : Tensor();

copy_range(grad_inputs, self_ix, grad_result);

}

return grad_inputs;

}

The grad_fn objects inherit from the TraceableFunction class, a descendant of Node with just a property set to enable tracing for debugging and optimization purposes. A graph by definition has nodes and edges, so these functions are indeed the nodes of the computational graph that are linked together by using Edge objects to enable the graph traversal later on.

The Node definition can be found in the torch/csrc/autograd/function.h file.

struct TORCH_API Node : std::enable_shared_from_this<Node> {

...

/// Evaluates the function on the given inputs and returns the result of the

/// function call.

variable_list operator()(variable_list&& inputs) {

...

}

protected:

/// Performs the `Node`'s actual operation.

virtual variable_list apply(variable_list&& inputs) = 0;

…

edge_list next_edges_;

Essentially, we see that it overloads operator(), which performs the call to the actual function, and declares a pure virtual function called apply. The automatically generated functions override this apply method, as we saw in the MulBackward0 example above. Finally, the node also has a list of edges to enable graph connectivity.

The Edge object is used to link Nodes together and its implementation is straightforward.

struct Edge {

...

/// The function this `Edge` points to.

std::shared_ptr<Node> function;

/// The identifier of a particular input to the function.

uint32_t input_nr;

};

It only requires a function pointer (the actual grad_fn objects that the edges link together), and an input number that acts as an id for the edge.
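The relationship between Node, apply, and Edge can be sketched in a few lines of Python. This is a toy model for illustration only: `MulBackwardSketch` is a hypothetical class, it works on plain floats, and the real operator() does more bookkeeping than simply forwarding to apply.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Edge:
    function: Optional["Node"] = None  # the Node this edge points to
    input_nr: int = 0                  # which input of that Node it feeds


class Node(ABC):
    def __init__(self):
        self.next_edges: List[Edge] = []

    def __call__(self, grads):
        # Plays the role of operator(): the public entry point that calls apply.
        return self.apply(grads)

    @abstractmethod
    def apply(self, grads):
        """Performs the node's actual operation; subclasses must override it."""


class MulBackwardSketch(Node):
    def __init__(self, self_value, other_value):
        super().__init__()
        self.self_value = self_value
        self.other_value = other_value

    def apply(self, grads):
        grad = grads[0]
        # d(self * other)/d self = other, d(self * other)/d other = self
        return [grad * self.other_value, grad * self.self_value]


node = MulBackwardSketch(0.5, 0.75)
print(node([1.0]))  # [0.75, 0.5]
```

Because apply is abstract, the base class cannot be instantiated directly, which matches the pure virtual declaration in the C++ Node.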

Linking nodes together

When we invoke the product operation of two tensors, we enter into the realm of autogenerated code. All the scripts that we saw in tools/autograd fill a series of templates that wrap the differentiable functions in ATen. These functions have code to construct the backward graph during the forward pass.

The gen_variable_type.py script is in charge of writing all this wrapping code. This script is called from the tools/autograd/gen_autograd.py during the pytorch build process and it will output the automatically generated function wrappers to torch/csrc/autograd/generated/.

Let’s take a look at what the generated function for tensor multiplication looks like. The code has been simplified, but it can be found in the torch/csrc/autograd/generated/VariableType_4.cpp file when compiling pytorch from source.

at::Tensor mul_Tensor(c10::DispatchKeySet ks, const at::Tensor & self, const at::Tensor & other) {

...

auto _any_requires_grad = compute_requires_grad( self, other );

std::shared_ptr<MulBackward0> grad_fn;

if (_any_requires_grad) {

// Creates the link to the actual grad_fn and links the graph for backward traversal

grad_fn = std::shared_ptr<MulBackward0>(new MulBackward0(), deleteNode);

grad_fn->set_next_edges(collect_next_edges( self, other ));

...

}

…

// Does the actual function call to ATen

auto _tmp = ([&]() {

at::AutoDispatchBelowADInplaceOrView guard;

return at::redispatch::mul(ks & c10::after_autograd_keyset, self_, other_);

})();

auto result = std::move(_tmp);

if (grad_fn) {

// Connects the result to the graph

set_history(flatten_tensor_args( result ), grad_fn);

}

...

return result;

}

Let’s walk through the most important lines of this code. First of all, the grad_fn object is created with: `grad_fn = std::shared_ptr<MulBackward0>(new MulBackward0(), deleteNode);`.

After the grad_fn object is created, the edges used to link the nodes together are created by the grad_fn->set_next_edges(collect_next_edges( self, other )); call.

struct MakeNextFunctionList : IterArgs<MakeNextFunctionList> {

edge_list next_edges;

using IterArgs<MakeNextFunctionList>::operator();

void operator()(const Variable& variable) {

if (variable.defined()) {

next_edges.push_back(impl::gradient_edge(variable));

} else {

next_edges.emplace_back();

}

}

void operator()(const c10::optional<Variable>& variable) {

if (variable.has_value() && variable->defined()) {

next_edges.push_back(impl::gradient_edge(*variable));

} else {

next_edges.emplace_back();

}

}

};

template <typename... Variables>

edge_list collect_next_edges(Variables&&... variables) {

detail::MakeNextFunctionList make;

make.apply(std::forward<Variables>(variables)...);

return std::move(make.next_edges);

}

Given an input variable (which is just a regular tensor), collect_next_edges will create an Edge object by calling impl::gradient_edge:

Edge gradient_edge(const Variable& self) {

// If grad_fn is null (as is the case for a leaf node), we instead

// interpret the gradient function to be a gradient accumulator, which will

// accumulate its inputs into the grad property of the variable. These

// nodes get suppressed in some situations, see "suppress gradient

// accumulation" below. Note that only variables which have `requires_grad =

// True` can have gradient accumulators.

if (const auto& gradient = self.grad_fn()) {

return Edge(gradient, self.output_nr());

} else {

return Edge(grad_accumulator(self), 0);

}

}

To understand how edges work, let’s assume that a previously executed function produced two output tensors, both with their grad_fn set. Each tensor also has an output_nr property that records the order in which the tensors were returned. When creating the edges for the current grad_fn, one Edge object per input variable will be created. The edges will point to the variable’s grad_fn and will also track the output_nr to establish the ids used when traversing the graph. If the input variables are “leaf” variables, i.e. they were not produced by any differentiable function, they don’t have a grad_fn attribute set. In that case, a special function called a gradient accumulator is used by default, as seen in the above code snippet.
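The scenario just described can be played out in a small Python sketch. Everything here is a toy stand-in: `NodeSketch`, `TensorSketch`, and the two-output `SplitBackward` op are hypothetical, and only the branching logic follows the gradient_edge snippet above.

```python
from dataclasses import dataclass
from typing import Optional


class NodeSketch:
    def __init__(self, name):
        self.name = name
        self.next_edges = []


@dataclass
class Edge:
    function: Optional[NodeSketch] = None
    input_nr: int = 0


class TensorSketch:
    def __init__(self, grad_fn=None, output_nr=0, requires_grad=False):
        self.grad_fn = grad_fn
        self.output_nr = output_nr
        self.requires_grad = requires_grad
        self._accumulator = None

    def grad_accumulator(self):
        # Only tensors that require grad can have an accumulator.
        if not self.requires_grad:
            return None
        if self._accumulator is None:
            self._accumulator = NodeSketch("AccumulateGrad")
        return self._accumulator


def gradient_edge(tensor):
    # Non-leaf: point at the producing node and remember which output we are.
    if tensor.grad_fn is not None:
        return Edge(tensor.grad_fn, tensor.output_nr)
    # Leaf: point at the gradient accumulator instead.
    return Edge(tensor.grad_accumulator(), 0)


def collect_next_edges(*tensors):
    # One edge per input; None models an undefined tensor and gets an empty edge.
    return [gradient_edge(t) if t is not None else Edge() for t in tensors]


split = NodeSketch("SplitBackward")           # hypothetical op with two outputs
a = TensorSketch(grad_fn=split, output_nr=0)  # first output
b = TensorSketch(grad_fn=split, output_nr=1)  # second output
w = TensorSketch(requires_grad=True)          # leaf tensor

edges = collect_next_edges(a, b, w)
for edge in edges:
    print(edge.function.name, edge.input_nr)
# SplitBackward 0
# SplitBackward 1
# AccumulateGrad 0
```

Note how both non-leaf inputs point to the same node and are told apart only by input_nr.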

After the edges are created, the grad_fn graph Node object that is being currently created will hold them using the set_next_edges function. This is what connects grad_fns together, producing the computational graph.

void set_next_edges(edge_list&& next_edges) {

next_edges_ = std::move(next_edges);

for(const auto& next_edge : next_edges_) {

update_topological_nr(next_edge);

}

}

Now, the forward pass of the function will execute, and after the execution set_history will connect the output tensors to the grad_fn Node.

inline void set_history(

at::Tensor& variable,

const std::shared_ptr<Node>& grad_fn) {

AT_ASSERT(grad_fn);

if (variable.defined()) {

// If the codegen triggers this, you most likely want to add your newly added function

// to the DONT_REQUIRE_DERIVATIVE list in tools/autograd/gen_variable_type.py

TORCH_INTERNAL_ASSERT(isDifferentiableType(variable.scalar_type()));

auto output_nr =

grad_fn->add_input_metadata(variable);

impl::set_gradient_edge(variable, {grad_fn, output_nr});

} else {

grad_fn->add_input_metadata(Node::undefined_input());

}

}

set_history calls set_gradient_edge, which just copies the grad_fn and the output_nr to the AutogradMeta object that the tensor has.

void set_gradient_edge(const Variable& self, Edge edge) {

auto* meta = materialize_autograd_meta(self);

meta->grad_fn_ = std::move(edge.function);

meta->output_nr_ = edge.input_nr;

// For views, make sure this new grad_fn_ is not overwritten unless it is necessary

// in the VariableHooks::grad_fn below.

// This logic is only relevant for custom autograd Functions for which multiple

// operations can happen on a given Tensor before its gradient edge is set when

// exiting the custom Function.

auto diff_view_meta = get_view_autograd_meta(self);

if (diff_view_meta && diff_view_meta->has_bw_view()) {

diff_view_meta->set_attr_version(self._version());

}

}

This tensor will now be the input to another function, and all the above steps will be repeated. Check the animation below to see how the graph is created.

Figure 2: Animation that shows the graph creation
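For readers without access to the animation, the whole construction loop can be imitated with a toy Python model. This is a sketch under simplifying assumptions: scalars instead of tensors, hypothetical `Tensor`, `Node`, and `mul` definitions, and no saved values for the backward pass. Only the order of operations follows the generated wrapper discussed above.

```python
class Node:
    def __init__(self):
        self.next_edges = []   # list of (Node or None, input_nr) pairs
        self.num_outputs = 0   # stand-in for the node's input metadata list

    def add_input_metadata(self):
        # Registers one more forward output and returns its index.
        self.num_outputs += 1
        return self.num_outputs - 1


class AccumulateGrad(Node):
    pass


class MulBackward(Node):
    pass


class Tensor:
    def __init__(self, value, requires_grad=False):
        self.value = value
        self.requires_grad = requires_grad
        self.grad_fn = None
        self.output_nr = 0
        self._accumulator = None

    def gradient_edge(self):
        if self.grad_fn is not None:
            return (self.grad_fn, self.output_nr)
        if self.requires_grad:
            if self._accumulator is None:
                self._accumulator = AccumulateGrad()
            return (self._accumulator, 0)
        return (None, 0)


def mul(a, b):
    grad_fn = None
    if a.requires_grad or b.requires_grad:
        # Create the backward node and link it to the inputs' nodes.
        grad_fn = MulBackward()
        grad_fn.next_edges = [a.gradient_edge(), b.gradient_edge()]
    # Run the actual forward computation.
    result = Tensor(a.value * b.value)
    if grad_fn is not None:
        # Connect the result to the graph (the set_history step).
        result.requires_grad = True
        result.output_nr = grad_fn.add_input_metadata()
        result.grad_fn = grad_fn
    return result


x0 = Tensor(0.5, requires_grad=True)
x1 = Tensor(0.75, requires_grad=True)
v = mul(x0, x1)   # first op: both edges lead to accumulators
w = mul(v, x0)    # second op: one edge leads to v's MulBackward

print(v.value)  # 0.375
print([type(fn).__name__ for fn, _ in w.grad_fn.next_edges])
# ['MulBackward', 'AccumulateGrad']
```

Each call to the wrapped function adds one node, and the edges always point from the newer node back toward the inputs, which is the direction the backward pass will later follow.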

Registering Python Functions in the graph

We have seen how autograd creates the graph for the functions included in ATen. However, when we define our differentiable functions in Python, they are also included in the graph!

An autograd python defined function looks like the following:

class Exp(torch.autograd.Function):

@staticmethod

def forward(ctx, i):

result = i.exp()

ctx.save_for_backward(result)

return result

@staticmethod

def backward(ctx, grad_output):

result, = ctx.saved_tensors

return grad_output * result

# Call the function

Exp.apply(torch.tensor(0.5, requires_grad=True))

# Outputs: tensor(1.6487, grad_fn=<ExpBackward>)

In the above snippet autograd detected our python function when creating the graph. All of this is possible thanks to the Function class. Let’s take a look at what happens when we call apply.

apply is defined in the torch._C._FunctionBase class, but this class is not present in the python source. _FunctionBase is defined in C++ by using the python C API to hook C functions together into a single python class. We are looking for a function named THPFunction_apply.

PyObject *THPFunction_apply(PyObject *cls, PyObject *inputs)

{

// Generates the graph node

THPObjectPtr backward_cls(PyObject_GetAttrString(cls, "_backward_cls"));

if (!backward_cls) return nullptr;

THPObjectPtr ctx_obj(PyObject_CallFunctionObjArgs(backward_cls, nullptr));

if (!ctx_obj) return nullptr;

THPFunction* ctx = (THPFunction*)ctx_obj.get();

auto cdata = std::shared_ptr<PyNode>(new PyNode(std::move(ctx_obj)), deleteNode);

ctx->cdata = cdata;

// Prepare inputs and allocate context (grad fn)

// Unpack inputs will collect the edges

auto info_pair = unpack_input<false>(inputs);

UnpackedInput& unpacked_input = info_pair.first;

InputFlags& input_info = info_pair.second;

// Initialize backward function (and ctx)

bool is_executable = input_info.is_executable;

cdata->set_next_edges(std::move(input_info.next_edges));

ctx->needs_input_grad = input_info.needs_input_grad.release();

ctx->is_variable_input = std::move(input_info.is_variable_input);

// Prepend ctx to input_tuple, in preparation for static method call

auto num_args = PyTuple_GET_SIZE(inputs);

THPObjectPtr ctx_input_tuple(PyTuple_New(num_args + 1));

if (!ctx_input_tuple) return nullptr;

Py_INCREF(ctx);

PyTuple_SET_ITEM(ctx_input_tuple.get(), 0, (PyObject*)ctx);

for (int i = 0; i < num_args; ++i) {

PyObject *arg = PyTuple_GET_ITEM(unpacked_input.input_tuple.get(), i);

Py_INCREF(arg);

PyTuple_SET_ITEM(ctx_input_tuple.get(), i + 1, arg);

}

// Call forward

THPObjectPtr tensor_outputs;

{

AutoGradMode grad_mode(false);

THPObjectPtr forward_fn(PyObject_GetAttrString(cls, "forward"));

if (!forward_fn) return nullptr;

tensor_outputs = PyObject_CallObject(forward_fn, ctx_input_tuple);

if (!tensor_outputs) return nullptr;

}

// Here is where the outputs gets the tensors tracked

return process_outputs(cls, cdata, ctx, unpacked_input, inputs, std::move(tensor_outputs),

is_executable, node);

END_HANDLE_TH_ERRORS

}

Although this code is hard to read at first due to all the python API calls, it essentially does the same thing as the auto-generated forward functions that we saw for ATen:

- Create a grad_fn object.

- Collect the edges to link the current grad_fn with the input tensors’ grad_fn objects.

- Execute the function’s forward.

- Assign the created grad_fn to the output tensors’ metadata.
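The four steps above can be sketched in pure Python. This is a toy model, not the THPFunction_apply implementation: `FunctionSketch`, `PyNodeSketch`, `Context`, and `Value` are hypothetical names, and the forward here receives plain floats rather than tensors.

```python
import math


class Context:
    """Plays the role of ctx: storage shared by forward and backward."""

    def __init__(self):
        self.saved = ()

    def save_for_backward(self, *values):
        self.saved = values


class PyNodeSketch:
    """Graph node that calls back into the user's Python backward."""

    def __init__(self, fn_cls, ctx):
        self.fn_cls = fn_cls
        self.ctx = ctx
        self.next_edges = []

    def apply(self, grad_output):
        return self.fn_cls.backward(self.ctx, grad_output)


class Value:
    def __init__(self, data, requires_grad=False, grad_fn=None):
        self.data = data
        self.requires_grad = requires_grad
        self.grad_fn = grad_fn


class FunctionSketch:
    @classmethod
    def apply(cls, *inputs):
        # 1. Create the grad_fn object (the node) together with its ctx.
        ctx = Context()
        node = PyNodeSketch(cls, ctx)
        # 2. Collect the edges to the inputs' grad_fn objects.
        is_executable = any(i.requires_grad for i in inputs)
        if is_executable:
            node.next_edges = [(i.grad_fn, 0) for i in inputs]
        # 3. Execute the user's forward.
        raw = cls.forward(ctx, *(i.data for i in inputs))
        # 4. Assign the node to the output's metadata.
        return Value(raw, is_executable, node if is_executable else None)


class Exp(FunctionSketch):
    @staticmethod
    def forward(ctx, i):
        result = math.exp(i)
        ctx.save_for_backward(result)
        return result

    @staticmethod
    def backward(ctx, grad_output):
        (result,) = ctx.saved
        return grad_output * result


y = Exp.apply(Value(0.5, requires_grad=True))
print(round(y.data, 4))                 # 1.6487
print(y.grad_fn.apply(1.0) == y.data)   # True
```

The split between the node and ctx is the part worth noticing: the node lives in the graph, while ctx is what the user's forward and backward see.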

The grad_fn object is created in:

// Generates the graph node

THPObjectPtr backward_cls(PyObject_GetAttrString(cls, "_backward_cls"));

if (!backward_cls) return nullptr;

THPObjectPtr ctx_obj(PyObject_CallFunctionObjArgs(backward_cls, nullptr));

if (!ctx_obj) return nullptr;

THPFunction* ctx = (THPFunction*)ctx_obj.get();

auto cdata = std::shared_ptr<PyNode>(new PyNode(std::move(ctx_obj)), deleteNode);

ctx->cdata = cdata;

Basically, it asks the python API to get a pointer to the Python object that can execute the user-written function. Then it wraps it into a PyNode object, a specialized Node that calls the python interpreter with the provided python function when apply is executed during the backward pass. Note that in the code, cdata is the actual Node object that is part of the graph, while ctx is the object that is passed to the python forward/backward functions and is used by both the user’s function and PyTorch to store autograd-related information.

As in the regular C++ functions, we also call collect_next_edges to track the inputs’ grad_fn objects, but this is done in unpack_input:

template<bool enforce_variables>

std::pair<UnpackedInput, InputFlags> unpack_input(PyObject *args) {

...

flags.next_edges = (flags.is_executable ? collect_next_edges(unpacked.input_vars) : edge_list());

return std::make_pair(std::move(unpacked), std::move(flags));

}

After this, the edges are assigned to the grad_fn by just doing cdata->set_next_edges(std::move(input_info.next_edges)); and the forward function is called through the python interpreter C API.

Once the output tensors are returned from the forward pass, they are processed and converted to variables inside the process_outputs function.

PyObject* process_outputs(PyObject *op_obj, const std::shared_ptr<PyNode>& cdata,

THPFunction* grad_fn, const UnpackedInput& unpacked,

PyObject *inputs, THPObjectPtr&& raw_output, bool is_executable,

torch::jit::Node* node) {

...

_wrap_outputs(cdata, grad_fn, unpacked.input_vars, raw_output, outputs, is_executable);

_trace_post_record(node, op_obj, unpacked.input_vars, outputs, is_inplace, unpack_output);

if (is_executable) {

_save_variables(cdata, grad_fn);

} ...

return outputs.release();

}

Here, _wrap_outputs is in charge of setting the forward outputs’ grad_fn to the newly created one. For this, it calls another _wrap_outputs function defined in a different file, so the process here gets a little confusing.

static void _wrap_outputs(const std::shared_ptr<PyNode>& cdata, THPFunction *self,

const variable_list &input_vars, PyObject *raw_output, PyObject *outputs, bool is_executable)

{

auto cdata_if_executable = is_executable ? cdata : nullptr;

...

// Wrap only the tensor outputs.

// This calls csrc/autograd/custom_function.cpp

auto wrapped_outputs = _wrap_outputs(input_vars, non_differentiable, dirty_inputs, raw_output_vars, cdata_if_executable);

...

}

The called _wrap_outputs is the one in charge of setting the autograd metadata in the output tensors:

std::vector<c10::optional<Variable>> _wrap_outputs(const variable_list &input_vars,

const std::unordered_set<at::TensorImpl*> &non_differentiable,

const std::unordered_set<at::TensorImpl*> &dirty_inputs,

const at::ArrayRef<c10::optional<Variable>> raw_outputs,

const std::shared_ptr<Node> &cdata) {

std::unordered_set<at::TensorImpl*> inputs;

…

// Sets the grad_fn and output_nr of an output Variable.

auto set_history = [&](Variable& var, uint32_t output_nr, bool is_input, bool is_modified,

bool is_differentiable) {

// Lots of checks

if (!is_differentiable) {

...

} else if (is_input) {

// An input has been returned, but it wasn't modified. Return it as a view

// so that we can attach a new grad_fn to the Variable.

// Run in no_grad mode to mimic the behavior of the forward.

{

AutoGradMode grad_mode(false);

var = var.view_as(var);

}

impl::set_gradient_edge(var, {cdata, output_nr});

} else if (cdata) {

impl::set_gradient_edge(var, {cdata, output_nr});

}

};

This is where set_gradient_edge is called, and this is how a user-written python function gets included in the computational graph with its associated backward function!

Closing remarks

This blog post is intended to be a code overview on how PyTorch constructs the actual computational graphs that we discussed in the previous post. The next entry will deal with how the autograd engine executes these graphs.

Announcing PyTorch Developer Day 2021

23 Aug 2021, 11:29 pm

We are excited to announce PyTorch Developer Day (#PTD2), taking place virtually on December 1 and 2, 2021. Developer Day is designed for developers and users to discuss core technical developments, ideas, and roadmaps.

Event Details

Technical Talks Live Stream – December 1, 2021

Join us for technical talks on a variety of topics, including updates to the core framework, new tools and libraries to support development across a variety of domains, responsible AI and industry use cases. All talks will take place on December 1 and will be live streamed on PyTorch channels.

Stay up to date by following us on our social channels: Twitter, Facebook, or LinkedIn.

Poster Exhibition & Networking – December 2, 2021

On the second day, we’ll be hosting an online poster exhibition on Gather.Town. There will be opportunities to meet the authors, learn more about their PyTorch projects, and network with the community. This poster and networking event is limited to PyTorch maintainers and contributors, long-time stakeholders, and experts in areas relevant to PyTorch’s future. Conversations from the networking event will strongly shape the future of PyTorch. As such, invitations are required to attend the networking event.

Call for Content Now Open

Submit your poster abstracts today! Please send us the title and brief summary of your project, tools and libraries that could benefit PyTorch researchers in academia and industry, application developers, and ML engineers for consideration. The focus must be on academic papers, machine learning research, or open-source projects related to PyTorch development, Responsible AI or Mobile. Please no sales pitches. Deadline for submission is September 24, 2021.

Visit the event website for more information and we look forward to having you at PyTorch Developer Day.

PipeTransformer: Automated Elastic Pipelining for Distributed Training of Large-scale Models

18 Aug 2021, 11:31 pm

In this blog post, we describe the first peer-reviewed research paper that explores accelerating the hybrid of PyTorch DDP (torch.nn.parallel.DistributedDataParallel) [1] and Pipeline (torch.distributed.pipeline) – PipeTransformer: Automated Elastic Pipelining for Distributed Training of Large-scale Models (Transformers such as BERT [2] and ViT [3]), published at ICML 2021.

PipeTransformer leverages automated elastic pipelining for efficient distributed training of Transformer models. In PipeTransformer, we designed an adaptive on-the-fly freeze algorithm that can identify and freeze some layers gradually during training and an elastic pipelining system that can dynamically allocate resources to train the remaining active layers. More specifically, PipeTransformer automatically excludes frozen layers from the pipeline, packs active layers into fewer GPUs, and forks more replicas to increase data-parallel width. We evaluate PipeTransformer using Vision Transformer (ViT) on ImageNet and BERT on SQuAD and GLUE datasets. Our results show that compared to the state-of-the-art baseline, PipeTransformer attains up to 2.83-fold speedup without losing accuracy. We also provide various performance analyses for a more comprehensive understanding of our algorithmic and system-wise design.

Next, we will introduce the background, motivation, our idea, design, and how we implement the algorithm and system with PyTorch Distributed APIs.

- Paper: http://proceedings.mlr.press/v139/he21a.html

- Source Code: https://DistML.ai.

- Slides: https://docs.google.com/presentation/d/1t6HWL33KIQo2as0nSHeBpXYtTBcy0nXCoLiKd0EashY/edit?usp=sharing

Introduction

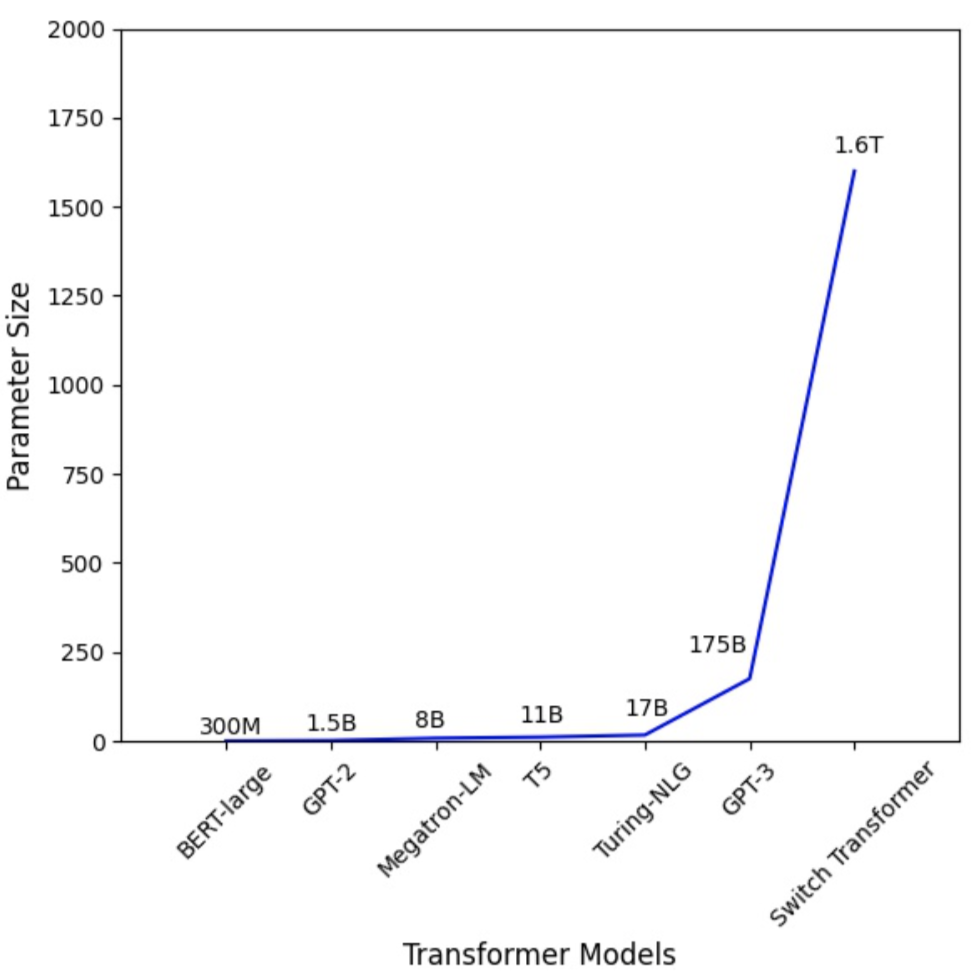

Figure 1: the Parameter Number of Transformer Models Increases Dramatically.

Large Transformer models [4][5] have powered accuracy breakthroughs in both natural language processing and computer vision. GPT-3 [4] hit a new record high accuracy for nearly all NLP tasks. Vision Transformer (ViT) [3] also achieved 89% top-1 accuracy on ImageNet, outperforming state-of-the-art convolutional networks ResNet-152 and EfficientNet. To tackle the growth in model sizes, researchers have proposed various distributed training techniques, including parameter servers [6][7][8], pipeline parallelism [9][10][11][12], intra-layer parallelism [13][14][15], and zero redundancy data-parallel [16].

Existing distributed training solutions, however, only study scenarios where all model weights are required to be optimized throughout the training (i.e., computation and communication overhead remains relatively static over different iterations). Recent works on progressive training suggest that parameters in neural networks can be trained dynamically:

- Freeze Training: Singular Vector Canonical Correlation Analysis for Deep Learning Dynamics and Interpretability. NeurIPS 2017

- Efficient Training of BERT by Progressively Stacking. ICML 2019

- Accelerating Training of Transformer-Based Language Models with Progressive Layer Dropping. NeurIPS 2020.

- On the Transformer Growth for Progressive BERT Training. NAACL 2021

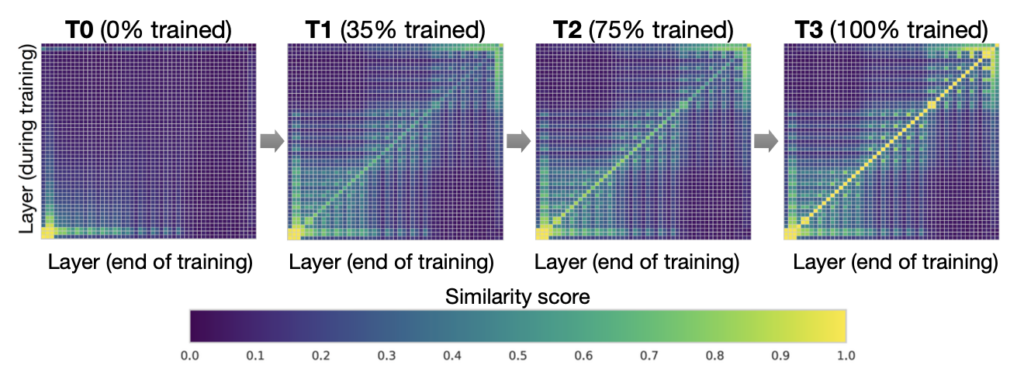

Figure 2. Interpretable Freeze Training: DNNs converge bottom-up (Results on CIFAR10 using ResNet). Each pane shows layer-by-layer similarity using SVCCA [17][18]

For example, in freeze training [17][18], neural networks usually converge from the bottom-up (i.e., not all layers need to be trained all the way through training). Figure 2 shows an example of how weights gradually stabilize during training in this approach. This observation motivates us to utilize freeze training for distributed training of Transformer models to accelerate training by dynamically allocating resources to focus on a shrinking set of active layers. Such a layer freezing strategy is especially pertinent to pipeline parallelism, as excluding consecutive bottom layers from the pipeline can reduce computation, memory, and communication overhead.

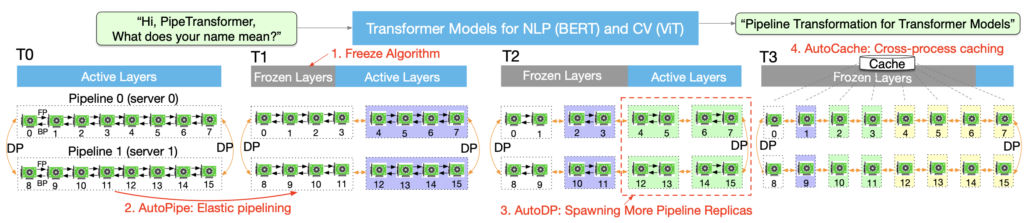

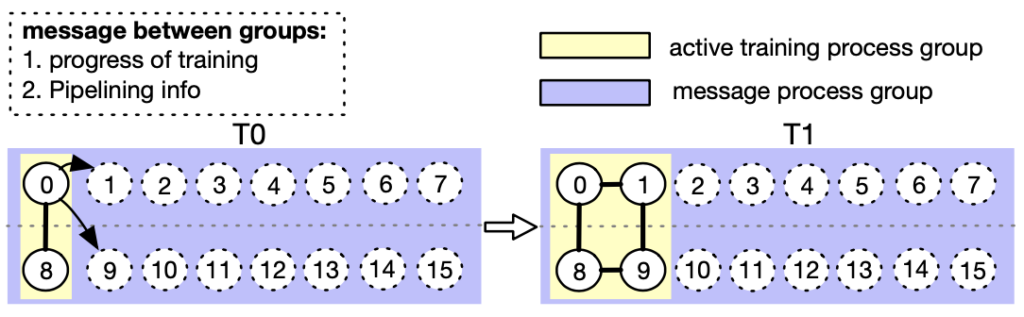

Figure 3. The process of PipeTransformer’s automated and elastic pipelining to accelerate distributed training of Transformer models

We propose PipeTransformer, an elastic pipelining training acceleration framework that automatically reacts to frozen layers by dynamically transforming the scope of the pipelined model and the number of pipeline replicas. To the best of our knowledge, this is the first paper that studies layer freezing in the context of both pipeline and data-parallel training. Figure 3 demonstrates the benefits of such a combination. First, by excluding frozen layers from the pipeline, the same model can be packed into fewer GPUs, leading to both fewer cross-GPU communications and smaller pipeline bubbles. Second, after packing the model into fewer GPUs, the same cluster can accommodate more pipeline replicas, increasing the width of data parallelism. More importantly, the speedups acquired from these two benefits are multiplicative rather than additive, further accelerating the training.
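To get a feel for why a shorter pipeline has smaller bubbles, the following sketch uses the idealized estimate commonly cited from the GPipe paper, in which a synchronous pipeline with K equal-cost partitions and M micro-batches idles for roughly (K - 1) / (M + K - 1) of each step. The function name and the numbers are illustrative assumptions, not measurements from PipeTransformer.

```python
from fractions import Fraction


def bubble_fraction(num_partitions, num_micro_batches):
    """Idealized idle fraction of a synchronous pipeline (equal-cost stages)."""
    k, m = num_partitions, num_micro_batches
    return Fraction(k - 1, m + k - 1)


# Hypothetical scenario: freezing layers lets the pipeline shrink from 8 to 4 GPUs.
before = bubble_fraction(num_partitions=8, num_micro_batches=8)
after = bubble_fraction(num_partitions=4, num_micro_batches=8)

print(before, round(float(before), 3))  # 7/15 0.467
print(after, round(float(after), 3))    # 3/11 0.273
```

Under this simple model, halving the pipeline length cuts the idle share of each step from about 47% to about 27%, and the freed GPUs can additionally host more replicas.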

The design of PipeTransformer faces four major challenges. First, the freeze algorithm must make on-the-fly and adaptive freezing decisions; however, existing work [17][18] only provides a posterior analysis tool. Second, the efficiency of pipeline re-partitioning results is influenced by multiple factors, including partition granularity, cross-partition activation size, and the chunking (the number of micro-batches) in mini-batches, which require reasoning and searching in a large solution space. Third, to dynamically introduce additional pipeline replicas, PipeTransformer must overcome the static nature of collective communications and avoid potentially complex cross-process messaging protocols when onboarding new processes (one pipeline is handled by one process). Finally, caching can save time for repeated forward propagation of frozen layers, but it must be shared between existing pipelines and newly added ones, as the system cannot afford to create and warm up a dedicated cache for each replica.

Figure 4: An Animation to Show the Dynamics of PipeTransformer

As shown in the animation (Figure 4), PipeTransformer is designed with four core building blocks to address the aforementioned challenges. First, we design a tunable and adaptive algorithm to generate signals that guide the selection of layers to freeze over different iterations (Freeze Algorithm). Once triggered by these signals, our elastic pipelining module (AutoPipe) packs the remaining active layers into fewer GPUs by taking both activation sizes and variances of workloads across heterogeneous partitions (frozen layers and active layers) into account. It then splits a mini-batch into an optimal number of micro-batches based on prior profiling results for different pipeline lengths. Our next module, AutoDP, spawns additional pipeline replicas to occupy freed-up GPUs and maintains hierarchical communication process groups to attain dynamic membership for collective communications. Our final module, AutoCache, efficiently shares activations across existing and new data-parallel processes and automatically replaces stale caches during transitions.

Overall, PipeTransformer combines the Freeze Algorithm, AutoPipe, AutoDP, and AutoCache modules to provide a significant training speedup. We evaluate PipeTransformer using Vision Transformer (ViT) on ImageNet and BERT on GLUE and SQuAD datasets. Our results show that PipeTransformer attains up to 2.83-fold speedup without losing accuracy. We also provide various performance analyses for a more comprehensive understanding of our algorithmic and system-wise design. Finally, we have also developed open-source flexible APIs for PipeTransformer, which offer a clean separation among the freeze algorithm, model definitions, and training accelerations, allowing for transferability to other algorithms that require similar freezing strategies.

Overall Design

Suppose we aim to train a massive model in a distributed training system that combines pipelined model parallelism with data parallelism. This hybrid targets scenarios where either the memory of a single GPU device cannot hold the model or, if the model can be loaded, the batch size must be kept small to avoid running out of memory. More specifically, we define our settings as follows:

Training task and model definition. We train Transformer models (e.g., Vision Transformer, BERT) on large-scale image or text datasets. The Transformer model \mathcal{F} has L layers, in which the i-th layer is composed of a forward computation function f_i and a corresponding set of parameters.

Training infrastructure. Assume the training infrastructure contains a GPU cluster that has N GPU servers (i.e. nodes). Each node has I GPUs. Our cluster is homogeneous, meaning that each GPU and server have the same hardware configuration. Each GPU’s memory capacity is M_\text{GPU}. Servers are connected by a high bandwidth network interface such as InfiniBand interconnect.

Pipeline parallelism. In each machine, we load a model \mathcal{F} into a pipeline \mathcal{P} which has K partitions (K also represents the pipeline length). The k-th partition p_k consists of consecutive layers. We assume each partition is handled by a single GPU device. We require 1 \leq K \leq I, meaning that we can build multiple pipelines for multiple model replicas in a single machine. We assume all GPU devices in a pipeline belong to the same machine. Our pipeline is a synchronous pipeline, which does not involve stale gradients, and the number of micro-batches is M. In the Linux OS, each pipeline is handled by a single process. We refer the reader to GPipe [10] for more details.

Data parallelism. DDP is a cross-machine distributed data-parallel process group with R parallel workers. Each worker is a pipeline replica (a single process). The r-th worker’s index (ID) is rank r. Any two pipelines in DDP can belong to either the same GPU server or different GPU servers, and they exchange gradients with the AllReduce algorithm.

Under these settings, our goal is to accelerate training by leveraging freeze training, which does not require all layers to be trained throughout the duration of the training. Additionally, it may help save computation, communication, memory cost, and potentially prevent overfitting by consecutively freezing layers. However, these benefits can only be achieved by overcoming the four challenges of designing an adaptive freezing algorithm, dynamical pipeline re-partitioning, efficient resource reallocation, and cross-process caching, as discussed in the introduction.

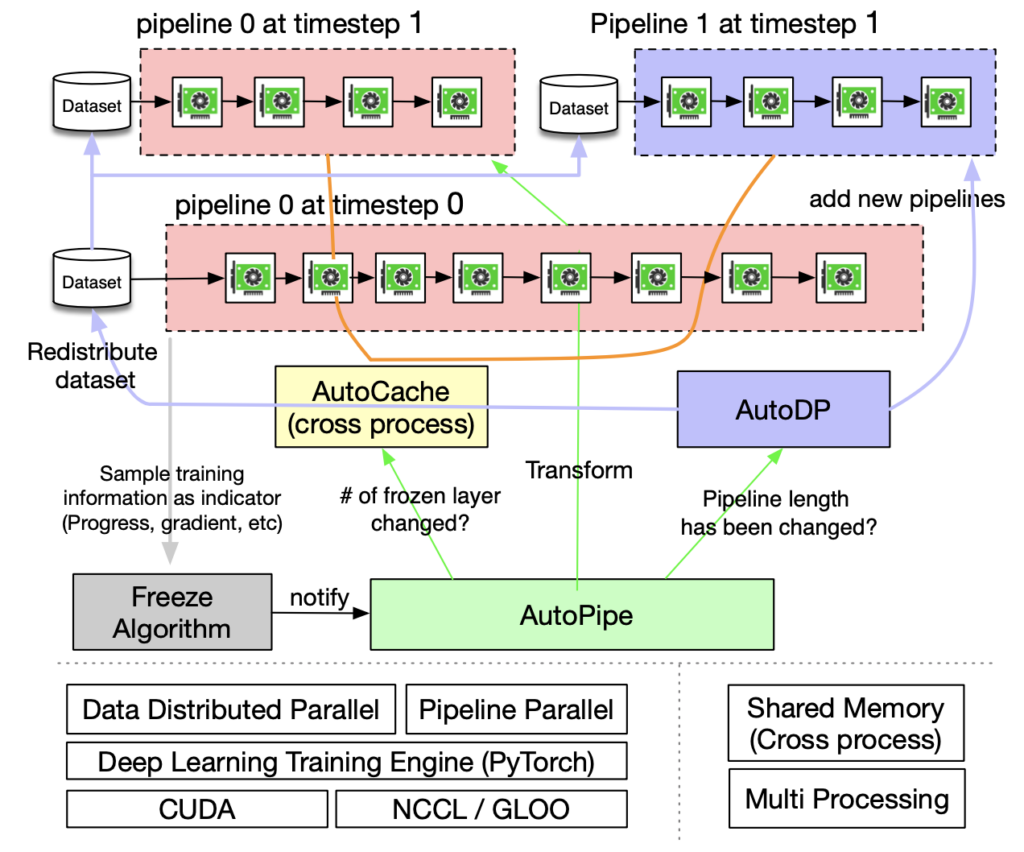

Figure 5. Overview of PipeTransformer Training System

PipeTransformer co-designs an on-the-fly freeze algorithm and an automated elastic pipelining training system that can dynamically transform the scope of the pipelined model and the number of pipeline replicas. The overall system architecture is illustrated in Figure 5. To support PipeTransformer’s elastic pipelining, we maintain a customized version of PyTorch Pipeline. For data parallelism, we use PyTorch DDP as a baseline. Other libraries are standard mechanisms of an operating system (e.g., multi-processing) and thus avoid specialized software or hardware customization requirements. To ensure the generality of our framework, we have decoupled the training system into four core components: freeze algorithm, AutoPipe, AutoDP, and AutoCache. The freeze algorithm (grey) samples indicators from the training loop and makes layer-wise freezing decisions, which will be shared with AutoPipe (green). AutoPipe is an elastic pipeline module that speeds up training by excluding frozen layers from the pipeline and packing the active layers into fewer GPUs (pink), leading to both fewer cross-GPU communications and smaller pipeline bubbles. Subsequently, AutoPipe passes pipeline length information to AutoDP (purple), which then spawns more pipeline replicas to increase data-parallel width, if possible. The illustration also includes an example in which AutoDP introduces a new replica (purple). AutoCache (orange edges) is a cross-pipeline caching module, as illustrated by connections between pipelines. The source code architecture is aligned with Figure 5 for readability and generality.

Implementation Using PyTorch APIs

As can be seen from Figure 5, PipeTransformer contains four components: Freeze Algorithm, AutoPipe, AutoDP, and AutoCache. Among them, AutoDP and AutoPipe rely on PyTorch DDP (torch.nn.parallel.DistributedDataParallel) [1] and Pipeline (torch.distributed.pipeline), respectively. In this blog, we only highlight the key implementation details of AutoPipe and AutoDP. For details of Freeze Algorithm and AutoCache, please refer to our paper.

AutoPipe: Elastic Pipelining

AutoPipe can accelerate training by excluding frozen layers from the pipeline and packing the active layers into fewer GPUs. This section elaborates on the key components of AutoPipe that dynamically 1) partition pipelines, 2) minimize the number of pipeline devices, and 3) optimize mini-batch chunk size accordingly.

Basic Usage of PyTorch Pipeline

Before diving into the details of AutoPipe, let us warm up with the basic usage of PyTorch Pipeline (torch.distributed.pipeline.sync.Pipe, see this tutorial). More specifically, we present a simple example to understand the design of Pipeline in practice:

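# Imports needed by this example

import torch

import torch.nn as nn

from torch.distributed.pipeline.sync import Pipe

# Note: Pipe depends on the RPC framework, so torch.distributed.rpc.init_rpc must be called before building Pipe (see the tutorial)

# Step 1: build a model including two linear layers, one per GPU

fc1 = nn.Linear(16, 8).cuda(0)

fc2 = nn.Linear(8, 4).cuda(1)

# Step 2: wrap the two layers with nn.Sequential

model = nn.Sequential(fc1, fc2)

# Step 3: build Pipe (torch.distributed.pipeline.sync.Pipe); chunks is the number of micro-batches

model = Pipe(model, chunks=8)

# do training/inference (the input lives on the first device; the output is returned as an RRef)

input = torch.rand(16, 16).cuda(0)

output_rref = model(input)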

In this basic example, we can see that before initializing Pipe, we need to partition the nn.Sequential model across multiple GPU devices and set the optimal chunk number (chunks). Balancing computation time across partitions is critical to pipeline training speed, as skewed workload distributions across stages can lead to stragglers, forcing devices with lighter workloads to wait. The chunk number may also have a non-trivial influence on the throughput of the pipeline.

Balanced Pipeline Partitioning

In a dynamic training system such as PipeTransformer, maintaining optimally balanced partitions in terms of parameter numbers does not guarantee the fastest training speed because other factors also play a crucial role:

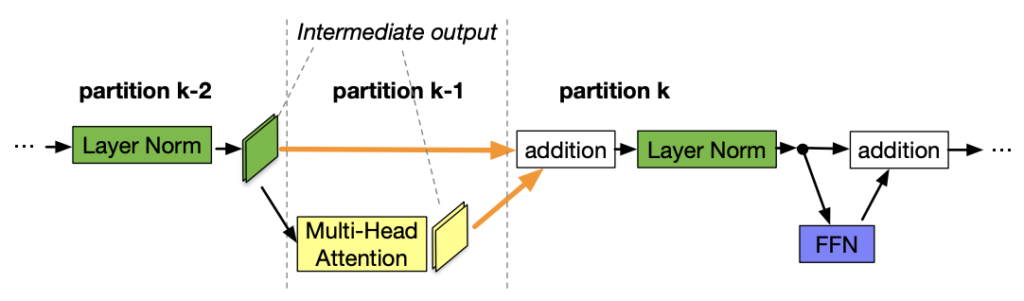

Figure 6. The partition boundary is in the middle of a skip connection

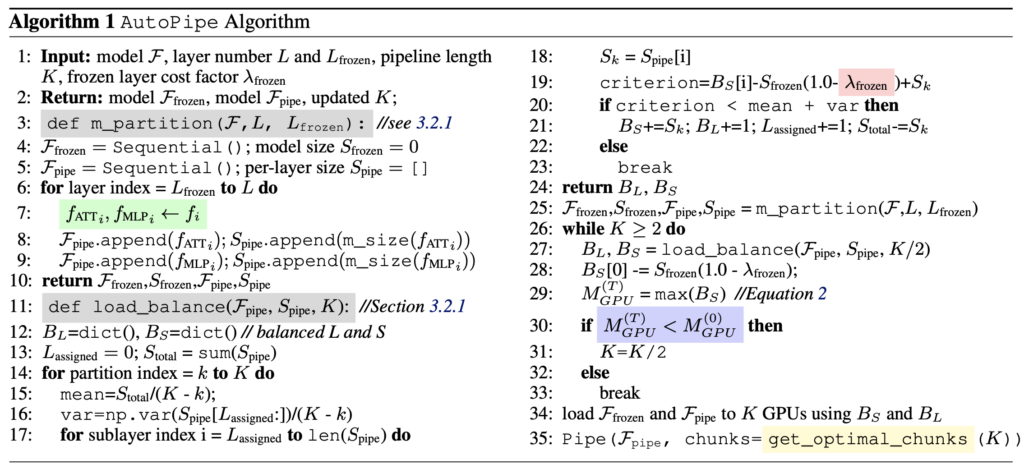

- Cross-partition communication overhead. Placing a partition boundary in the middle of a skip connection leads to additional communications since tensors in the skip connection must now be copied to a different GPU. For example, with BERT partitions in Figure 6, partition k must take intermediate outputs from both partition k-2 and partition k-1. In contrast, if the boundary is placed after the addition layer, the communication overhead between partition k-1 and k is visibly smaller. Our measurements show that having cross-device communication is more expensive than having slightly imbalanced partitions (see the Appendix in our paper). Therefore, we do not consider breaking skip connections (highlighted separately as an entire attention layer and MLP layer in green at line 7 in Algorithm 1).

- Frozen layer memory footprint. During training, AutoPipe must recompute partition boundaries several times to balance two distinct types of layers: frozen layers and active layers. The frozen layer’s memory cost is a fraction of that of an active layer, given that the frozen layer does not need backward activation maps, optimizer states, and gradients. Instead of launching intrusive profilers to obtain thorough metrics on memory and computational cost, we define a tunable cost factor \lambda_{\text{frozen}} to estimate the memory footprint ratio of a frozen layer over the same active layer. Based on empirical measurements on our experimental hardware, we set it to \frac{1}{6}.

Based on the above two considerations, AutoPipe balances pipeline partitions based on parameter sizes. More specifically, AutoPipe uses a greedy algorithm to allocate all frozen and active layers to evenly distribute partitioned sublayers into K GPU devices. Pseudocode is described as the load\_balance() function in Algorithm 1. The frozen layers are extracted from the original model and kept in a separate model instance \mathcal{F}_{\text{frozen}} in the first device of a pipeline.

Note that the partition algorithm employed in this paper is not the only option; PipeTransformer is modularized to work with any alternatives.
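As a rough illustration of the greedy balancing described above, the following sketch assigns consecutive layers to K partitions, using parameter counts as the cost and discounting frozen layers by \lambda_{\text{frozen}}. The function name, its arguments, and the exact stopping rule are assumptions made for this example. The load\_balance() function in Algorithm 1 additionally keeps skip connections intact, which is not modeled here.

```python
def greedy_partition(param_sizes, num_frozen, K, lambda_frozen=1 / 6):
    """Split layers into K contiguous partitions with roughly equal cost.

    param_sizes: parameter count per layer, in model order (hypothetical input).
    num_frozen:  the first num_frozen layers are treated as frozen.
    Returns a list of K lists of layer indices.
    """
    if K < 1 or len(param_sizes) < K:
        raise ValueError("need at least one layer per partition")
    # Frozen layers are discounted by the tunable cost factor.
    costs = [
        size * (lambda_frozen if i < num_frozen else 1.0)
        for i, size in enumerate(param_sizes)
    ]
    partitions = [[] for _ in range(K)]
    remaining = sum(costs)
    idx, n = 0, len(costs)
    for k in range(K):
        parts_left = K - k
        target = remaining / parts_left  # even share of what is left
        load = 0.0
        while idx < n:
            if partitions[k]:
                # Leave at least one layer for every later partition.
                if n - idx <= parts_left - 1:
                    break
                # Stop once the next layer would exceed the even share.
                if parts_left > 1 and load + costs[idx] > target:
                    break
            partitions[k].append(idx)
            load += costs[idx]
            idx += 1
        remaining -= load
    return partitions


# 12 equally sized layers, the first 6 frozen, packed into 2 GPUs:
# the first partition absorbs all the cheap frozen layers plus some active ones.
print(greedy_partition([6] * 12, num_frozen=6, K=2))
```

Because frozen layers are cheap, the first partition ends up holding more layers than the second, which is the intended effect of the cost factor.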

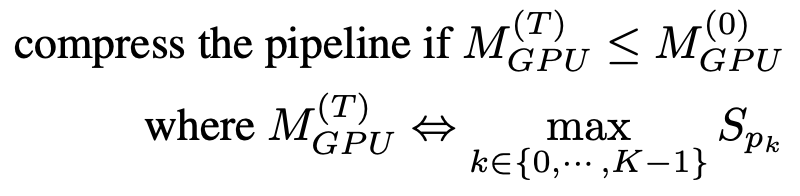

Pipeline Compression

Pipeline compression helps to free up GPUs to accommodate more pipeline replicas and reduce the number of cross-device communications between partitions. To determine the timing of compression, we can estimate the memory cost of the largest partition after compression, and then compare it with that of the largest partition of a pipeline at timestep T=0. To avoid extensive memory profiling, the compression algorithm uses the parameter size as a proxy for the training memory footprint. Based on this simplification, the criterion of pipeline compression is as follows:

Once the freeze notification is received, AutoPipe will always attempt to divide the pipeline length K by 2 (e.g., from 8 to 4, then 2). By using \frac{K}{2} as the input, the compression algorithm can verify if the result satisfies the criterion in Equation (1). Pseudocode is shown in lines 25-33 in Algorithm 1. Note that this compression makes the acceleration ratio exponentially increase during training, meaning that if a GPU server has a larger number of GPUs (e.g., more than 8), the acceleration ratio will be further amplified.
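The halving step can be sketched as follows, under the simplification stated above that parameter size stands in for memory cost. This is an illustrative sketch, not the code from Algorithm 1: the names can_fit and maybe_compress, the integer cost units, and the first-fit feasibility check are all assumptions introduced here.

```python
def can_fit(costs, num_partitions, cap):
    """Check whether contiguous layers fit into num_partitions with no
    partition exceeding cap (first-fit over consecutive layers)."""
    used, load = 1, 0
    for cost in costs:
        if cost > cap:
            return False
        if load + cost > cap:
            used += 1      # open the next partition
            load = cost
        else:
            load += cost
    return used <= num_partitions


def maybe_compress(costs_now, K, largest_partition_cost_t0):
    """Return K // 2 if the halved pipeline keeps its largest partition
    within the largest partition cost seen at T=0, else keep K."""
    half = K // 2
    if half >= 1 and can_fit(costs_now, half, largest_partition_cost_t0):
        return half
    return K


# 8 layers on 8 GPUs at T=0: each active layer costs 6 units, so the cap is 6.
# A frozen layer costs 1 unit (a 1/6 cost factor expressed in integer units).
print(maybe_compress([1] * 4 + [6] * 4, K=8, largest_partition_cost_t0=6))  # 8
print(maybe_compress([1] * 6 + [6] * 2, K=8, largest_partition_cost_t0=6))  # 4
```

With four frozen layers the halved pipeline would overload a GPU, so the length stays at 8. With six frozen layers the frozen block fits on one GPU and the pipeline shrinks to 4.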

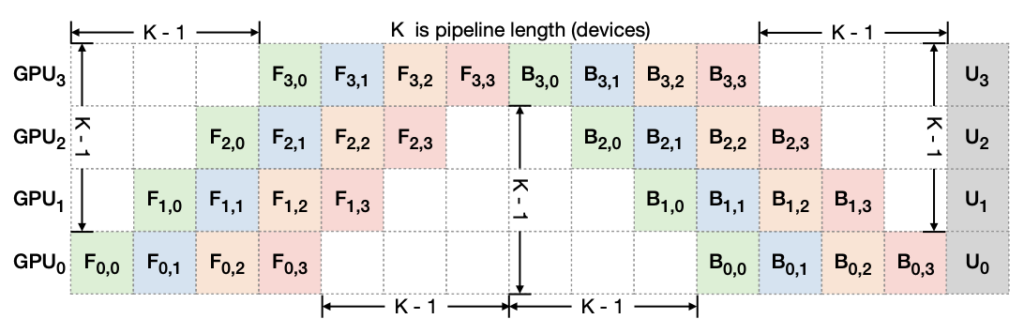

Figure 7. Pipeline Bubble: F_{d,b}, B_{d,b}, and U_d denote the forward pass, backward pass, and optimizer update of micro-batch b on device d, respectively. The total bubble size in each iteration is (K-1) times the per-micro-batch forward and backward cost.

Additionally, such a technique can also speed up training by shrinking the size of pipeline bubbles. To explain bubble sizes in a pipeline, Figure 7 depicts how 4 micro-batches run through a 4-device pipeline (K = 4). In general, the total bubble size is (K-1) times the per-micro-batch forward and backward cost. Therefore, shorter pipelines have smaller bubbles.
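A quick arithmetic sketch makes the effect of halving K concrete. The bubble_time function follows the (K-1) rule stated above. The bubble_fraction function is an extra assumption that is not taken from this post: it is the usual GPipe-style estimate, valid only when every stage and every micro-batch takes the same time.

```python
def bubble_time(K, t_forward, t_backward):
    """Total idle (bubble) time per iteration for a K-device pipeline."""
    return (K - 1) * (t_forward + t_backward)


def bubble_fraction(K, M):
    """Share of each device's timeline spent idle, assuming M equally
    sized micro-batches and equal time per stage (an idealization)."""
    return (K - 1) / (M + K - 1)


# Hypothetical per-micro-batch costs: 1.0 forward, 2.0 backward (arbitrary units).
for K in (8, 4, 2):
    print(K, bubble_time(K, 1.0, 2.0), round(bubble_fraction(K, M=4), 3))
```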

Dynamic Number of Micro-Batches

Prior pipeline parallel systems use a fixed number of micro-batches per mini-batch (M). GPipe suggests M \geq 4 \times K, where K is the number of partitions (pipeline length). However, given that PipeTransformer dynamically configures K, we find it to be sub-optimal to maintain a static M during training. Moreover, when integrated with DDP, the value of M also has an impact on the efficiency of DDP gradient synchronizations. Since DDP must wait for the last micro-batch to finish its backward computation on a parameter before launching its gradient synchronization, finer micro-batches lead to a smaller overlap between computation and communication. Hence, instead of using a static value, PipeTransformer searches for the optimal M on the fly in the hybrid pipeline and DDP environment by enumerating M values ranging from K to 6K. For a specific training environment, the profiling needs to be done only once (see Algorithm 1 line 35).
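The search itself is a small enumeration, sketched below under a few assumptions. The measure_throughput callback is hypothetical and stands for whatever short profiling run reports samples per second for a given M. The step argument and the per-K cache are also choices made for this example, since the post does not say how finely the range from K to 6K is sampled.

```python
def find_optimal_chunks(K, measure_throughput, cache=None, step=1):
    """Pick the micro-batch count M in [K, 6K] with the best throughput.

    measure_throughput: callable M -> samples/second (hypothetical profiler).
    cache: optional dict so each pipeline length is profiled only once.
    """
    if cache is not None and K in cache:
        return cache[K]
    candidates = range(K, 6 * K + 1, step)
    best = max(candidates, key=measure_throughput)
    if cache is not None:
        cache[K] = best
    return best


# Stand-in throughput curve that peaks at M = 10 (made-up numbers).
def fake_throughput(M):
    return 1000 - (M - 10) ** 2


profile_cache = {}
print(find_optimal_chunks(4, fake_throughput, profile_cache))  # 10
```

Caching by K reflects the statement that profiling is needed only once per training environment: when the pipeline is later compressed to a length that was already profiled, the stored M is reused.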

For the complete source code, please refer to https://github.com/Distributed-AI/PipeTransformer/blob/master/pipe_transformer/pipe/auto_pipe.py.

AutoDP: Spawning More Pipeline Replicas

As AutoPipe compresses the same pipeline into fewer GPUs, AutoDP can automatically spawn new pipeline replicas to increase data-parallel width.

Despite the conceptual simplicity, subtle dependencies on communications and states require careful design. The challenges are threefold:

- DDP Communication: Collective communications in PyTorch DDP require static membership, which prevents new pipelines from connecting with existing ones;

- State Synchronization: newly activated processes must be consistent with existing pipelines in the training progress (e.g., epoch number and learning rate), weights and optimizer states, the boundary of frozen layers, and pipeline GPU range;

- Dataset Redistribution: the dataset should be re-balanced to match a dynamic number of pipelines. This not only avoids stragglers but also ensures that gradients from all DDP processes are equally weighted.

Figure 8. AutoDP: handling dynamical data-parallel with messaging between double process groups (Process 0-7 belong to machine 0, while process 8-15 belong to machine 1)

To tackle these challenges, we create double communication process groups for DDP. As in the example shown in Figure 8, the message process group (purple) is responsible for light-weight control messages and covers all processes, while the active training process group (yellow) only contains active processes and serves as a vehicle for heavy-weight tensor communications during training. The message group remains static, whereas the training group is dismantled and reconstructed to match active processes. In T0, only processes 0 and 8 are active. During the transition to T1, process 0 activates processes 1 and 9 (newly added pipeline replicas) and synchronizes necessary information mentioned above using the message group. The four active processes then form a new training group, allowing static collective communications adaptive to dynamic memberships. To redistribute the dataset, we implement a variant of DistributedSampler that can seamlessly adjust data samples to match the number of active pipeline replicas.
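Two pieces of this bookkeeping can be sketched in plain Python. The first derives which global ranks are active for a given pipeline length, using the numbering from Figure 8 (processes 0-7 on machine 0, 8-15 on machine 1). The second re-shards dataset indices across the active replicas with the same pad-and-stride scheme that DistributedSampler uses. Both function names are hypothetical, and this is not the authors' sampler variant.

```python
def active_ranks(num_machines, gpus_per_machine, pipeline_len):
    """Global ranks of active pipeline replicas for a given pipeline length."""
    replicas_per_machine = gpus_per_machine // pipeline_len
    return [
        machine * gpus_per_machine + local
        for machine in range(num_machines)
        for local in range(replicas_per_machine)
    ]


def shard_indices(dataset_len, num_replicas, replica_id):
    """Indices handled by one replica; pads by wrapping around so that
    every replica gets the same number of samples.
    Assumes dataset_len >= num_replicas."""
    per_replica = -(-dataset_len // num_replicas)  # ceiling division
    total = per_replica * num_replicas
    indices = list(range(dataset_len))
    indices += indices[: total - dataset_len]      # wrap-around padding
    return indices[replica_id:total:num_replicas]


print(active_ranks(2, 8, pipeline_len=8))  # T0: [0, 8]
print(active_ranks(2, 8, pipeline_len=4))  # T1: [0, 1, 8, 9]
print([shard_indices(10, 4, r) for r in range(4)])
```

Equal shard sizes matter for the reason given above: they avoid stragglers and keep the gradients from all DDP processes equally weighted.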

The above design also naturally helps to reduce DDP communication overhead. More specifically, when transitioning from T0 to T1, processes 0 and 8 (the processes active at T0) destroy their existing DDP instances, and the active processes construct a new DDP training group using a cached pipelined model (AutoPipe stores the frozen model and the cached model separately).

We use the following APIs to implement the design above.

from datetime import timedelta

import torch.distributed as dist

from torch.distributed import Backend

from torch.nn.parallel import DistributedDataParallel as DDP

# initialize the process group (this must be called in the initialization of PyTorch DDP)

dist.init_process_group(init_method='tcp://' + str(self.config.master_addr) + ':' + str(self.config.master_port),

                        backend=Backend.GLOO, rank=self.global_rank, world_size=self.world_size)

...

# create active process group (yellow color)

self.active_process_group = dist.new_group(ranks=self.active_ranks, backend=Backend.NCCL, timeout=timedelta(days=365))

...

# create message process group (purple color)

self.comm_broadcast_group = dist.new_group(ranks=[i for i in range(self.world_size)], backend=Backend.GLOO, timeout=timedelta(days=365))

...

# create DDP-enabled model when the number of data-parallel workers is changed. Note:

# 1. process_group is the process group to be used for distributed data all-reduction.

#    If None, the default process group, which is created by torch.distributed.init_process_group, will be used.

#    In our case, we set it as self.active_process_group.

# 2. device_ids should be set when the pipeline length = 1 (the model resides on a single CUDA device).

self.pipe_len = gpu_num_per_process

if gpu_num_per_process > 1:

    model = DDP(model, process_group=self.active_process_group, find_unused_parameters=True)

else:

    model = DDP(model, device_ids=[self.local_rank], process_group=self.active_process_group, find_unused_parameters=True)

# to broadcast message among processes, we use dist.broadcast_object_list

def dist_broadcast(object_list, src, group):

    """Broadcasts a given object to all parties."""

    dist.broadcast_object_list(object_list, src, group=group)

    return object_list

For the complete source code, please refer to https://github.com/Distributed-AI/PipeTransformer/blob/master/pipe_transformer/dp/auto_dp.py.

Experiments

This section first summarizes experiment setups and then evaluates PipeTransformer using computer vision and natural language processing tasks.

Hardware. Experiments were conducted on 2 identical machines connected by InfiniBand CX353A (5GB/s), where each machine is equipped with 8 NVIDIA Quadro RTX 5000 GPUs (16GB GPU memory each). GPU-to-GPU bandwidth within a machine (PCIe 3.0, 16 lanes) is 15.754GB/s.

Implementation. We used PyTorch Pipe as a building block. The BERT model definition, configuration, and related tokenizer are from HuggingFace 3.5.0. We implemented Vision Transformer using PyTorch by following its TensorFlow implementation. More details can be found in our source code.

Models and Datasets. Experiments employ two representative Transformers in CV and NLP: Vision Transformer (ViT) and BERT. ViT was run on an image classification task, initialized with pre-trained weights on ImageNet21K and fine-tuned on ImageNet and CIFAR-100. BERT was run on two tasks, text classification on the SST-2 dataset from the General Language Understanding Evaluation (GLUE) benchmark, and question answering on the SQuAD v1.1 Dataset (Stanford Question Answering), which is a collection of 100k crowdsourced question/answer pairs.

Training Schemes. Given that large models normally would require thousands of GPU-days (e.g., GPT-3) if trained from scratch, fine-tuning downstream tasks using pre-trained models has become a trend in CV and NLP communities. Moreover, PipeTransformer is a complex training system that involves multiple core components. Thus, for the first version of PipeTransformer system development and algorithmic research, it is not cost-efficient to develop and evaluate from scratch using large-scale pre-training. Therefore, the experiments presented in this section focus on pre-trained models. Note that since the model architectures in pre-training and fine-tuning are the same, PipeTransformer can serve both. We discuss pre-training results in the Appendix.

Baseline. Experiments in this section compare PipeTransformer to the state-of-the-art framework, a hybrid scheme of PyTorch Pipeline (PyTorch’s implementation of GPipe) and PyTorch DDP. Since this is the first paper that studies accelerating distributed training by freezing layers, there are no perfectly aligned counterpart solutions yet.

Hyper-parameters. Experiments use ViT-B/16 (12 transformer layers, 16 \times 16 input patch size) for ImageNet and CIFAR-100, BERT-large-uncased (24 layers) for SQuAD 1.1, and BERT-base-uncased (12 layers) for SST-2. With PipeTransformer, ViT and BERT training can set the per-pipeline batch size to around 400 and 64, respectively. Other hyperparameters (e.g., epoch, learning rate) for all experiments are presented in the Appendix.

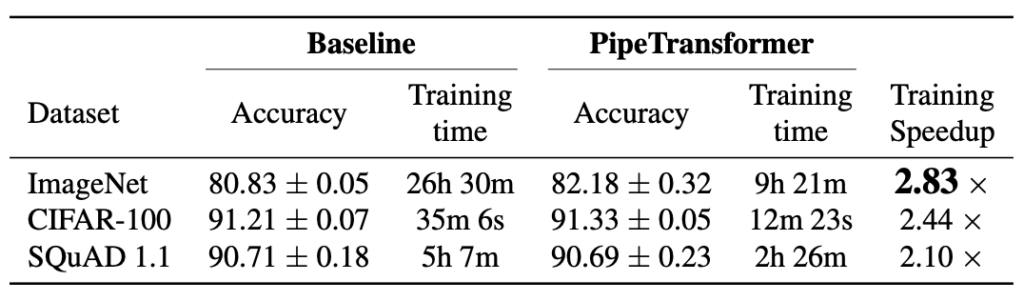

Overall Training Acceleration

We summarize the overall experimental results in the table above. Note that the speedup we report is based on a conservative \alpha value (\frac{1}{3}) that can obtain comparable or even higher accuracy. A more aggressive \alpha (\frac{2}{5}, \frac{1}{2}) can obtain a higher speedup but may lead to a slight loss in accuracy. Note that the model size of BERT (24 layers) is larger than that of ViT-B/16 (12 layers), so it takes more time for communication.

Performance Analysis

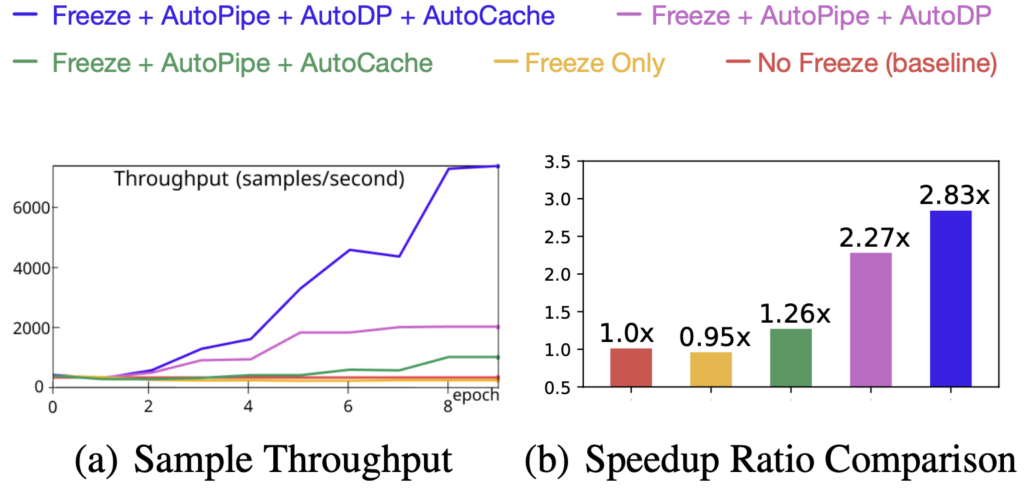

Speedup Breakdown

This section presents evaluation results and analyzes the performance of different components in PipeTransformer. More experimental results can be found in the Appendix.

Figure 9. Speedup Breakdown (ViT on ImageNet)

To understand the efficacy of all four components and their impacts on training speed, we experimented with different combinations and used their training sample throughput (samples/second) and speedup ratio as metrics. Results are illustrated in Figure 9. Key takeaways from these experimental results are:

- the main speedup is the result of elastic pipelining which is achieved through the joint use of AutoPipe and AutoDP;

- AutoCache’s contribution is amplified by AutoDP;

- freeze training alone, without system-wise adjustment, can even slow down training.

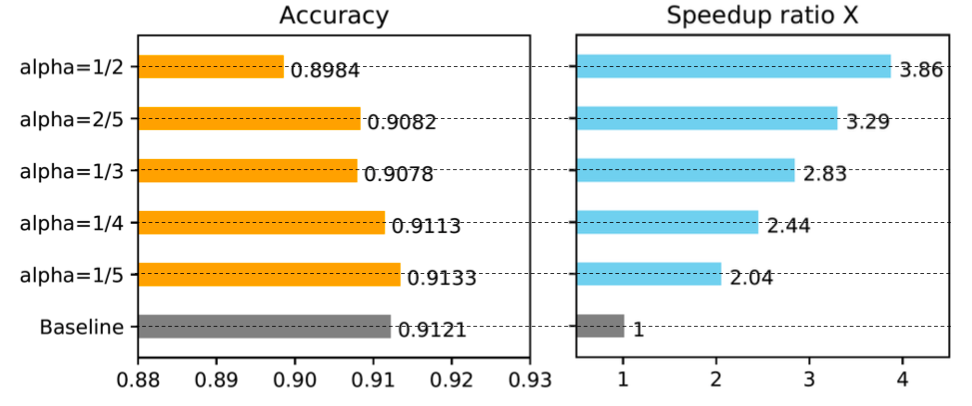

Tuning \alpha in Freezing Algorithm

Figure 10. Tuning \alpha in Freezing Algorithm

We ran experiments to show how the \alpha in the freeze algorithm influences training speed. The results show that a larger \alpha (excessive freezing) leads to a greater speedup but suffers from a slight performance degradation. In the case shown in Figure 10, where \alpha=1/5, freeze training outperforms normal training and obtains a 2.04-fold speedup. We provide more results in the Appendix.

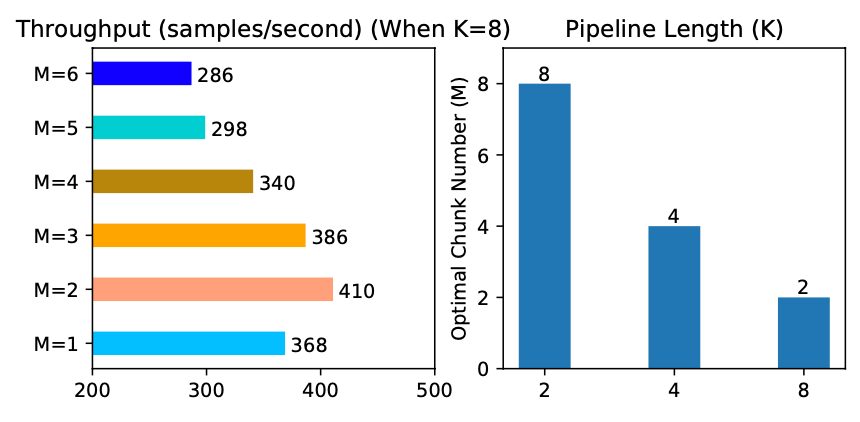

Optimal Chunks in the elastic pipeline

Figure 11. Optimal chunk number in the elastic pipeline

We profiled the optimal number of micro-batches M for different pipeline lengths K. Results are summarized in Figure 11. As we can see, different K values lead to different optimal M, and the throughput gaps across different M values are large (as shown when K=8), which confirms the necessity of profiling in advance in elastic pipelining.

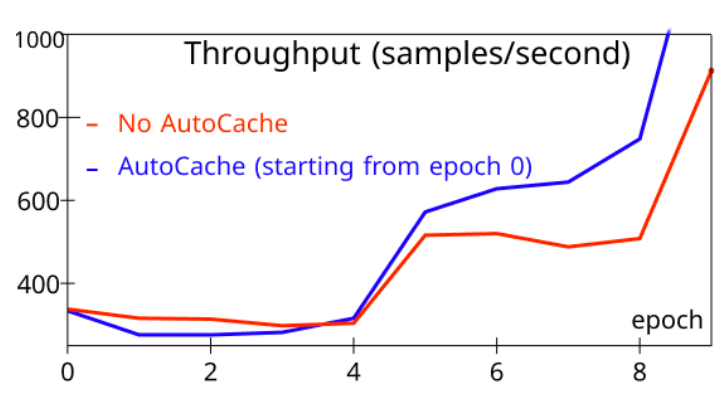

Understanding the Timing of Caching

Figure 12. The timing of caching

To evaluate AutoCache, we compared the sample throughput of training that activates AutoCache from epoch 0 (blue) with the training job without AutoCache (red). Figure 12 shows that enabling caching too early can slow down training, as caching can be more expensive than the forward propagation on a small number of frozen layers. After more layers are frozen, caching activations clearly outperforms the corresponding forward propagation. As a result, AutoCache uses a profiler to determine the proper timing to enable caching. In our system, for ViT (12 layers), caching starts from 3 frozen layers, while for BERT (24 layers), caching starts from 5 frozen layers.

For more detailed experimental analysis, please refer to our paper.

Summary

This blog introduces PipeTransformer, a holistic solution that combines elastic pipeline parallelism and data parallelism for distributed training using PyTorch Distributed APIs. More specifically, PipeTransformer incrementally freezes layers in the pipeline, packs remaining active layers into fewer GPUs, and forks more pipeline replicas to increase the data-parallel width. Evaluations on ViT and BERT models show that compared to the state-of-the-art baseline, PipeTransformer attains up to 2.83× speedups without accuracy loss.

References

[1] Li, S., Zhao, Y., Varma, R., Salpekar, O., Noordhuis, P., Li, T., Paszke, A., Smith, J., Vaughan, B., Damania, P., et al. PyTorch Distributed: Experiences on Accelerating Data Parallel Training. Proceedings of the VLDB Endowment, 13(12), 2020.

[2] Devlin, J., Chang, M. W., Lee, K., and Toutanova, K. BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding. In NAACL-HLT, 2019

[3] Dosovitskiy, A., Beyer, L., Kolesnikov, A., Weissenborn, D., Zhai, X., Unterthiner, T., Dehghani, M., Minderer, M., Heigold, G., Gelly, S., et al. An Image is Worth 16×16 Words: Transformers for Image Recognition at Scale.

[4] Brown, T. B., Mann, B., Ryder, N., Subbiah, M., Kaplan, J., Dhariwal, P., Neelakantan, A., Shyam, P., Sastry, G., Askell, A., et al. Language Models are Few-shot Learners.

[5] Lepikhin, D., Lee, H., Xu, Y., Chen, D., Firat, O., Huang, Y., Krikun, M., Shazeer, N., and Chen, Z. Gshard: Scaling Giant Models with Conditional Computation and Automatic Sharding.

[6] Li, M., Andersen, D. G., Park, J. W., Smola, A. J., Ahmed, A., Josifovski, V., Long, J., Shekita, E. J., and Su, B. Y. Scaling Distributed Machine Learning with the Parameter Server. In 11th USENIX Symposium on Operating Systems Design and Implementation (OSDI 14), pp. 583–598, 2014.

[7] Jiang, Y., Zhu, Y., Lan, C., Yi, B., Cui, Y., and Guo, C. A Unified Architecture for Accelerating Distributed DNN Training in Heterogeneous GPU/CPU Clusters. In 14th USENIX Symposium on Operating Systems Design and Implementation (OSDI 20), pp. 463–479. USENIX Association, November 2020. ISBN 978-1-939133-19-9.

[8] Kim, S., Yu, G. I., Park, H., Cho, S., Jeong, E., Ha, H., Lee, S., Jeong, J. S., and Chun, B. G. Parallax: Sparsity-aware Data Parallel Training of Deep Neural Networks. In Proceedings of the Fourteenth EuroSys Conference 2019, pp. 1–15, 2019.

[9] Kim, C., Lee, H., Jeong, M., Baek, W., Yoon, B., Kim, I., Lim, S., and Kim, S. TorchGPipe: On-the-fly Pipeline Parallelism for Training Giant Models.

[10] Huang, Y., Cheng, Y., Bapna, A., Firat, O., Chen, M. X., Chen, D., Lee, H., Ngiam, J., Le, Q. V., Wu, Y., et al. Gpipe: Efficient Training of Giant Neural Networks using Pipeline Parallelism.

[11] Park, J. H., Yun, G., Yi, C. M., Nguyen, N. T., Lee, S., Choi, J., Noh, S. H., and ri Choi, Y. Hetpipe: Enabling Large DNN Training on (whimpy) Heterogeneous GPU Clusters through Integration of Pipelined Model Parallelism and Data Parallelism. In 2020 USENIX Annual Technical Conference (USENIX ATC 20), pp. 307–321. USENIX Association, July 2020. ISBN 978-1-939133-14-4.

[12] Narayanan, D., Harlap, A., Phanishayee, A., Seshadri, V., Devanur, N. R., Ganger, G. R., Gibbons, P. B., and Zaharia, M. Pipedream: Generalized Pipeline Parallelism for DNN Training. In Proceedings of the 27th ACM Symposium on Operating Systems Principles, SOSP ’19, pp. 1–15, New York, NY, USA, 2019. Association for Computing Machinery. ISBN 9781450368735. doi: 10.1145/3341301.3359646.

[13] Lepikhin, D., Lee, H., Xu, Y., Chen, D., Firat, O., Huang, Y., Krikun, M., Shazeer, N., and Chen, Z. Gshard: Scaling Giant Models with Conditional Computation and Automatic Sharding.

[14] Shazeer, N., Cheng, Y., Parmar, N., Tran, D., Vaswani, A., Koanantakool, P., Hawkins, P., Lee, H., Hong, M., Young, C., Sepassi, R., and Hechtman, B. Mesh-Tensorflow: Deep Learning for Supercomputers. In Bengio, S., Wallach, H., Larochelle, H., Grauman, K., Cesa-Bianchi, N., and Garnett, R. (eds.), Advances in Neural Information Processing Systems, volume 31, pp. 10414–10423. Curran Associates, Inc., 2018.

[15] Shoeybi, M., Patwary, M., Puri, R., LeGresley, P., Casper, J., and Catanzaro, B. Megatron-LM: Training Multi-billion Parameter Language Models using Model Parallelism.

[16] Rajbhandari, S., Rasley, J., Ruwase, O., and He, Y. ZeRO: Memory Optimizations Toward Training Trillion Parameter Models.

[17] Raghu, M., Gilmer, J., Yosinski, J., and Sohl Dickstein, J. Svcca: Singular Vector Canonical Correlation Analysis for Deep Learning Dynamics and Interpretability. In NIPS, 2017.

[18] Morcos, A., Raghu, M., and Bengio, S. Insights on Representational Similarity in Neural Networks with Canonical Correlation. In Bengio, S., Wallach, H., Larochelle, H., Grauman, K., Cesa-Bianchi, N., and Garnett, R. (eds.), Advances in Neural Information Processing Systems 31, pp. 5732–5741. Curran Associates, Inc., 2018.

University of Pécs enables text and speech processing in Hungarian, builds the BERT-large model with just 1,000 euro with Azure

10 Aug 2021, 5:54 am

Everyone prefers to use their mother tongue when communicating with chat agents and other automated services. However, for languages like Hungarian, spoken by only 15 million people, the market size will often be viewed as too small for large companies to create software, tools or applications that can process Hungarian text as input. Recognizing this need, the Applied Data Science and Artificial Intelligence team from University of Pécs decided to step up.

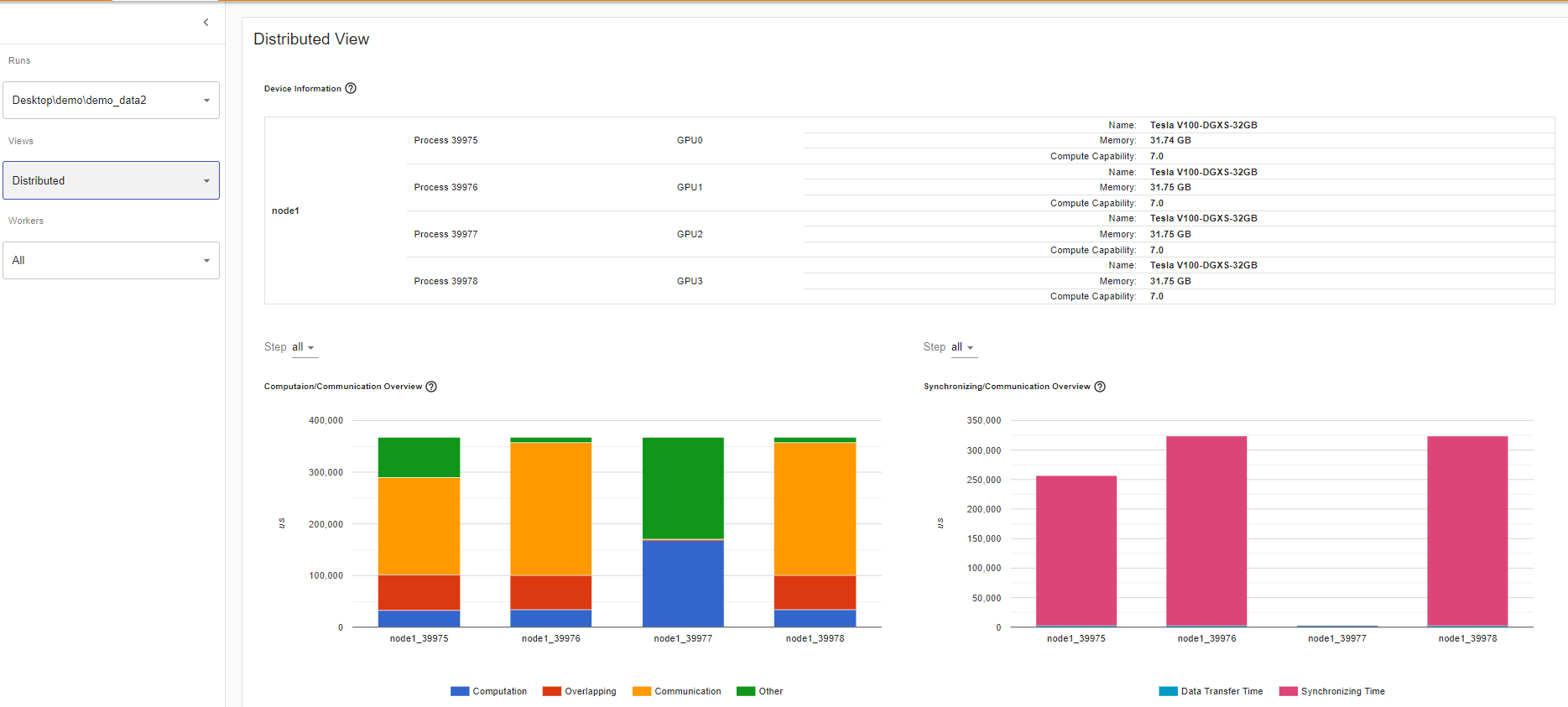

What’s New in PyTorch Profiler 1.9?

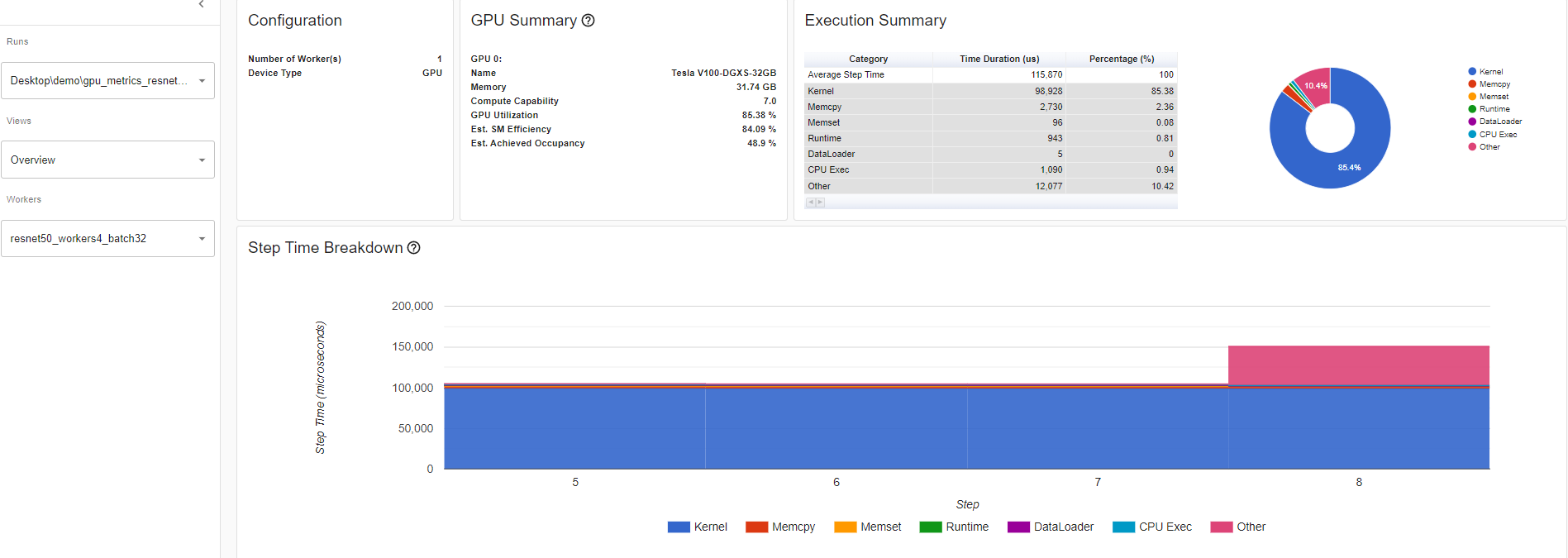

3 Aug 2021, 11:58 pm

PyTorch Profiler v1.9 has been released! The goal of this new release (previous PyTorch Profiler release) is to provide you with new state-of-the-art tools to help diagnose and fix machine learning performance issues regardless of whether you are working on one or numerous machines. The objective is to target the execution steps that are the most costly in time and/or memory, and visualize the workload distribution between GPUs and CPUs.

Here is a summary of the five major features being released:

- Distributed Training View: This helps you understand how much time and memory is consumed in your distributed training job. When you split a training job across worker nodes that run in parallel, the job can behave like a black box and many issues become hard to trace. The overall goal is to speed up model training. This distributed training view will help you diagnose and debug issues within individual nodes.

- Memory View: This view allows you to understand your memory usage better. This tool will help you avoid the famously pesky Out of Memory error by showing active memory allocations at various points of your program run.

- GPU Utilization Visualization: This tool helps you make sure that your GPU is being fully utilized.

- Cloud Storage Support: The TensorBoard plugin can now read profiling data from Azure Blob Storage, Amazon S3, and Google Cloud Platform.

- Jump to Source Code: This feature allows you to visualize stack tracing information and jump directly into the source code. This helps you quickly optimize and iterate on your code based on your profiling results.

Getting Started with PyTorch Profiling Tool

PyTorch includes a profiling functionality called “PyTorch Profiler”. The PyTorch Profiler tutorial can be found here.

To instrument your PyTorch code for profiling, you must:

$ pip install torch-tb-profiler

import torch.profiler as profiler

with profiler.profile(XXXX)

Comments:

• For CUDA and CPU profiling, see below:

with torch.profiler.profile(

    activities=[

        torch.profiler.ProfilerActivity.CPU,

        torch.profiler.ProfilerActivity.CUDA],

• with profiler.record_function(“$NAME”): lets you attach a label (a tag associated with a name) to a block of code

• The profile_memory=True parameter under profiler.profile allows you to profile the CPU and GPU memory footprint

Visualizing PyTorch Model Performance using PyTorch Profiler

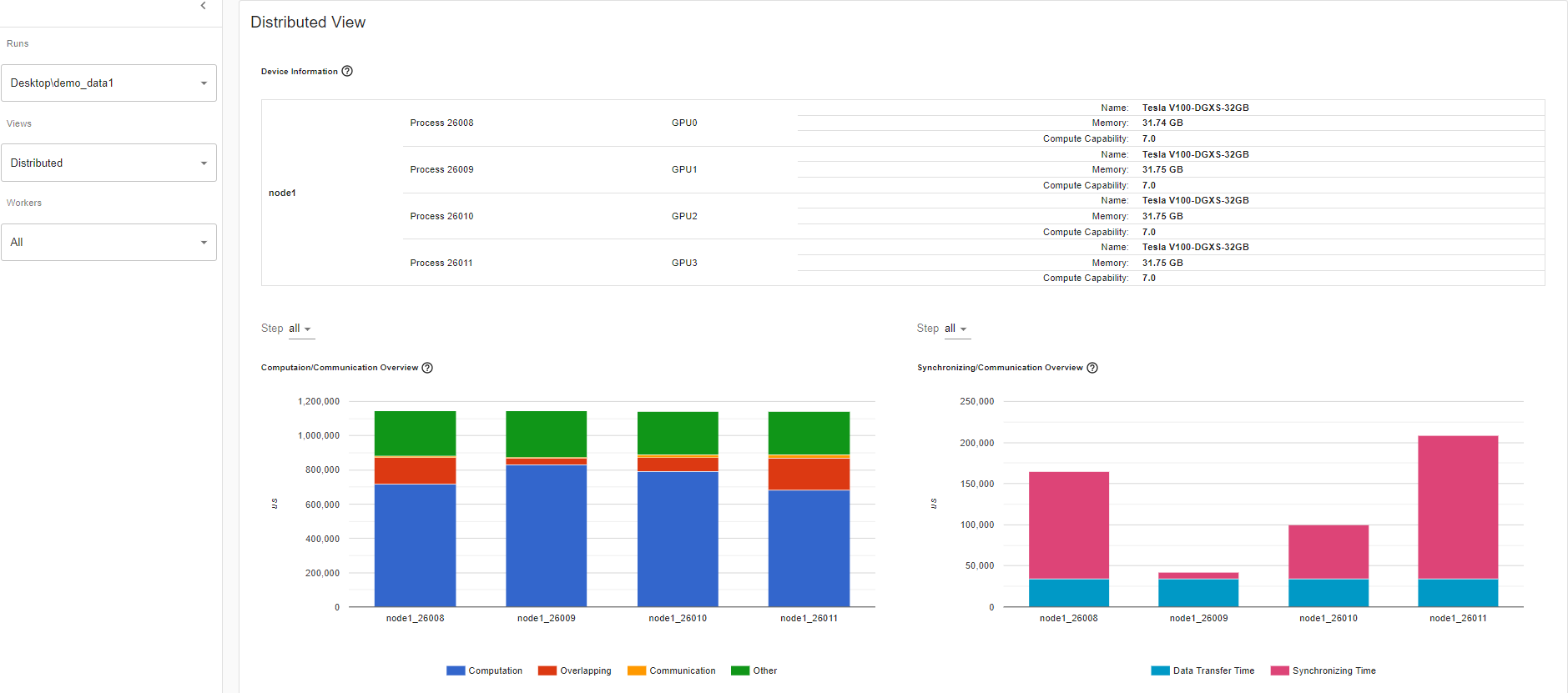

Distributed Training

Recent advances in deep learning argue for the value of large datasets and large models, which requires you to scale out model training to more computational resources. Distributed Data Parallel (DDP) and NVIDIA Collective Communications Library (NCCL) are the widely adopted paradigms in PyTorch for accelerating your deep learning training.

In this release of PyTorch Profiler, DDP with NCCL backend is now supported.

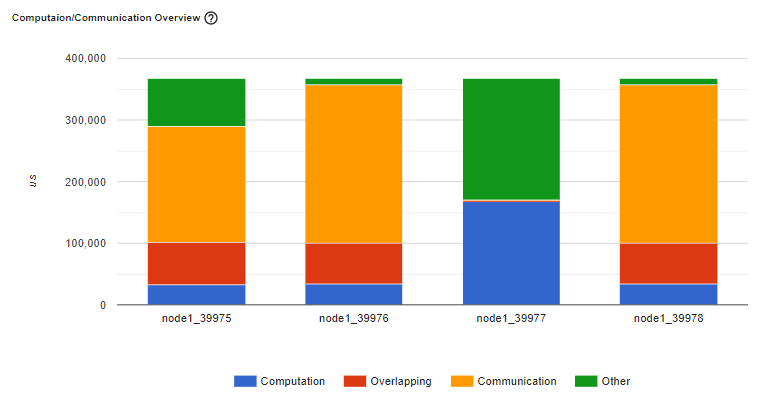

Computation/Communication Overview

In the Computation/Communication overview under the Distributed training view, you can observe the computation-to-communication ratio of each worker and [load balancer](https://en.wikipedia.org/wiki/Load_balancing_(computing)) nodes between workers as measured by granularity.

Scenario 1:

If the computation and overlapping time of one worker is much larger than that of the others, this may suggest an issue in the workload balance or that the worker is a straggler. Computation is the sum of kernel time on GPU minus the overlapping time. The overlapping time is the time saved by interleaving communications during computation. More overlapping time indicates better parallelism between computation and communication. Ideally, computation and communication completely overlap with each other. Communication is the total communication time minus the overlapping time. The example image below displays how this scenario appears on TensorBoard.

Figure: A straggler example
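The three quantities defined above can be reproduced by hand from per-worker timings, which is a useful sanity check when reading the view. The sketch below is not part of the profiler: the input format, the function names, and the 1.5× median threshold for flagging a straggler are all assumptions made for illustration.

```python
from statistics import median


def step_breakdown(kernel_time, total_comm_time, overlap):
    """Split one worker's step into the three categories shown in the view."""
    return {
        "computation": kernel_time - overlap,
        "overlapping": overlap,
        "communication": total_comm_time - overlap,
    }


def find_stragglers(workers, factor=1.5):
    """Flag workers whose computation + overlapping time is much larger
    than the median across workers (factor is an arbitrary threshold)."""
    busy = {}
    for name, (kernel_time, total_comm_time, overlap) in workers.items():
        parts = step_breakdown(kernel_time, total_comm_time, overlap)
        busy[name] = parts["computation"] + parts["overlapping"]
    typical = median(busy.values())
    return sorted(name for name, t in busy.items() if t > factor * typical)


# Made-up timings in milliseconds: (kernel time, total communication, overlap).
timings = {"worker0": (100, 40, 20), "worker1": (105, 38, 18), "worker2": (210, 20, 10)}
print(step_breakdown(*timings["worker0"]))
print(find_stragglers(timings))
```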

Scenario 2:

If there is a small batch size (i.e. less computation on each worker) or the data to be transferred is large, the computation-to-communication ratio may also be small, which shows up in the profiler as low GPU utilization and long waiting times. This computation/communication view will allow you to diagnose your code and reduce communication by adopting gradient accumulation, or decrease the communication proportion by increasing batch size. DDP communication time depends on model size, and batch size has no relationship with model size. Increasing batch size therefore makes computation time longer and the computation-to-communication ratio bigger.
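A back-of-the-envelope model shows why both remedies help. It assumes, purely for illustration, that computation time grows linearly with batch size and that each gradient synchronization costs a fixed time determined by model size. The function name and all numbers are made up.

```python
def comp_to_comm_ratio(batch_size, time_per_sample, comm_time, accumulation_steps=1):
    """Computation-to-communication ratio per gradient synchronization.

    With gradient accumulation, several forward/backward passes share a
    single synchronization, so computation grows while communication does not.
    """
    computation = batch_size * time_per_sample * accumulation_steps
    return computation / comm_time


# 0.5 ms of compute per sample, 64 ms per all-reduce (hypothetical values).
print(comp_to_comm_ratio(32, 0.5, 64))                        # small batch
print(comp_to_comm_ratio(128, 0.5, 64))                       # larger batch
print(comp_to_comm_ratio(32, 0.5, 64, accumulation_steps=4))  # accumulation
```

In this simplified model, quadrupling the batch size and accumulating over four steps give the same ratio, since both quadruple the computation done per synchronization.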

Synchronizing/Communication Overview

In the Synchronizing/Communication view, you can observe the efficiency of communication. This is done by taking the step time minus computation and communication time. Synchronizing time is the part of the total communication time spent waiting for and synchronizing with other workers. The Synchronizing/Communication view includes initialization, data loader, CPU computation, and so on. From this view you can draw insights such as what share of total communication time is really used for exchanging data and how much idle time is spent waiting for data from other workers.

For example, if there is an inefficient workload balance or straggler issue, you’ll be able to identify it in this Synchronizing/Communication view. This view will show several workers’ waiting time being longer than others.

The table view above shows detailed statistics of all communication ops in each node: which operation types are called, how many times each op is called, the size of the data transferred by each op, and so on.

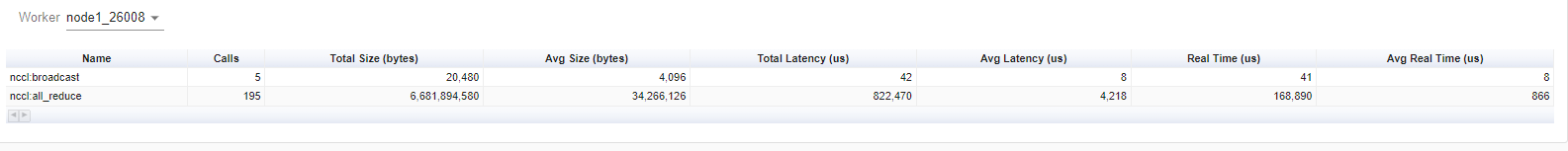

Memory View:

This memory view tool helps you understand the hardware resource consumption of the operators in your model. Understanding the time and memory consumption at the operator level allows you to resolve performance bottlenecks and, in turn, allows your model to execute faster. Given limited GPU memory size, optimizing the memory usage can:

- Allow a bigger model, which can potentially generalize better on end-level tasks.

- Allow a bigger batch size. Bigger batch sizes increase the training speed.

The profiler records all memory allocations during the profiler interval. Selecting “Device” allows you to see each operator’s memory usage on the GPU side or the host side. You must enable profile_memory=True to generate the memory data below, as shown here.

import torch

with torch.profiler.profile(

    profile_memory=True  # this will take 1 – 2 minutes to complete.

) as prof:

    ...  # run the code to be profiled here

Important Definitions:

• “Size Increase” displays the sum of all allocated bytes minus all released bytes.

• “Allocation Size” shows the sum of all allocated bytes, without considering memory releases.

• “Self” means the allocated memory does not come from any child operators, but from the operator itself.
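The three definitions above can be illustrated with a small sketch. The event lists are invented, and `summarize_memory_events` is a hypothetical helper rather than anything exposed by the profiler. Positive numbers stand for allocated bytes and negative numbers for released bytes.

```python
def summarize_memory_events(events):
    """Summarize signed byte counts: positive = allocation, negative = release."""
    allocation_size = sum(b for b in events if b > 0)
    released = -sum(b for b in events if b < 0)
    return {
        "allocation_size": allocation_size,           # ignores releases
        "size_increase": allocation_size - released,  # allocations minus releases
    }


# Hypothetical operator: events it triggers itself vs. events from a child op.
self_events = [1024, -1024, 2048]
child_events = [4096, -4096]

self_stats = summarize_memory_events(self_events)                  # "Self" columns
total_stats = summarize_memory_events(self_events + child_events)  # operator + children
print(self_stats, total_stats)
```

Here the child operator allocates and frees a temporary buffer, so it raises the total “Allocation Size” without changing the “Size Increase”.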

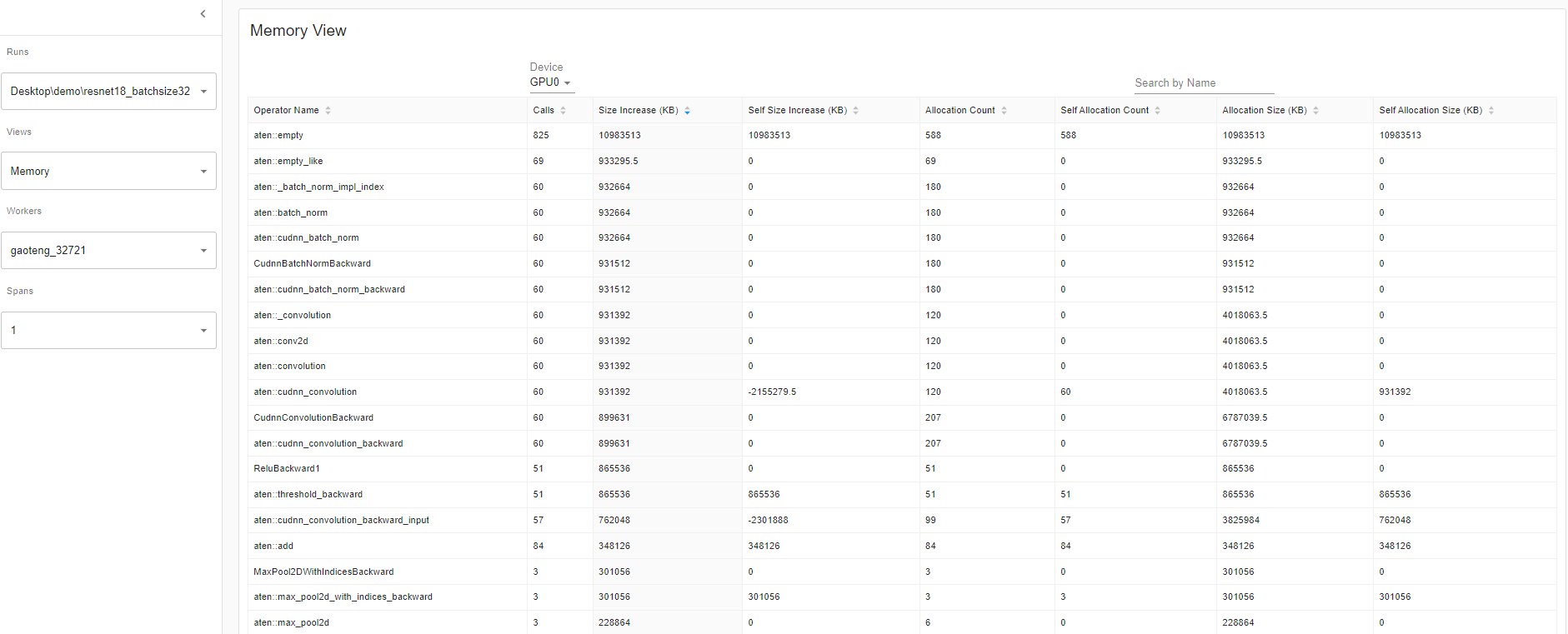

GPU Metric on Timeline:

This feature helps you debug performance issues when one or more GPUs are underutilized. Ideally, your program should have high GPU utilization (aiming for 100% GPU utilization), minimal CPU to GPU communication, and no overhead.

Overview: The overview page highlights the results of three important GPU usage metrics at different levels (i.e. GPU Utilization, Est. SM Efficiency, and Est. Achieved Occupancy). Essentially, each GPU has a number of SMs, each of which has a number of warps that can execute a number of threads concurrently. The exact numbers depend on the GPU. At a high level, the GPU Metric on Timeline tool lets you see the whole stack, which is useful.

If the GPU utilization result is low, this suggests a potential bottleneck is present in your model. Common reasons:

• Insufficient parallelism in kernels (i.e., low batch size)

• Small kernels called in a loop. That is, the launch overheads are not amortized

• CPU or I/O bottlenecks that lead to the GPU not receiving enough work to keep busy

The performance recommendation section of the overview page is where you’ll find suggestions on how to increase GPU utilization. In this example, GPU utilization is low, so the performance recommendation was to increase the batch size. Increasing the batch size from 4 to 32, as per the performance recommendation, increased the GPU Utilization by 60.68%.

GPU Utilization: the percentage of the step interval time in the profiler during which a GPU engine was executing a workload. The higher the utilization %, the better. The drawback of using GPU utilization alone to diagnose performance bottlenecks is that it is too high-level and coarse. It won’t be able to tell you how many Streaming Multiprocessors are in use. Note that while this metric is useful for detecting periods of idleness, a high value does not indicate efficient use of the GPU, only that it is doing anything at all. For instance, a kernel with a single thread running continuously will get a GPU Utilization of 100%.

Estimated Streaming Multiprocessor Efficiency (Est. SM Efficiency) is a finer-grained metric. It indicates what percentage of SMs are in use at any point in the trace. This metric reports the percentage of time during which there is at least one active warp on an SM, including warps that are stalled (NVIDIA doc). Est. SM Efficiency also has its limitations. For instance, a kernel with only one thread per block can’t fully use each SM. SM Efficiency does not tell us how busy each SM is, only that they are doing anything at all, which can include stalling while waiting on the result of a memory load. To keep an SM busy, it is necessary to have a sufficient number of ready warps that can be run whenever a stall occurs.

Estimated Achieved Occupancy (Est. Achieved Occupancy) is a layer deeper than Est. SM Efficiency and GPU Utilization for diagnosing performance issues. It indicates how many warps can be active at once per SM. Having a sufficient number of active warps is usually key to achieving good throughput. Unlike GPU Utilization and SM Efficiency, it is not a goal to make this value as high as possible. As a rule of thumb, good throughput gains can be had by improving this metric to 15% and above, but at some point you will hit diminishing returns. If the value is already at 30%, for example, further gains will be uncertain. This metric reports the average values of all warp schedulers for the kernel execution period (NVIDIA doc). In general, a larger Est. Achieved Occupancy value is better, subject to the diminishing returns described above.

Overview details: Resnet50_batchsize4

Overview details: Resnet50_batchsize32

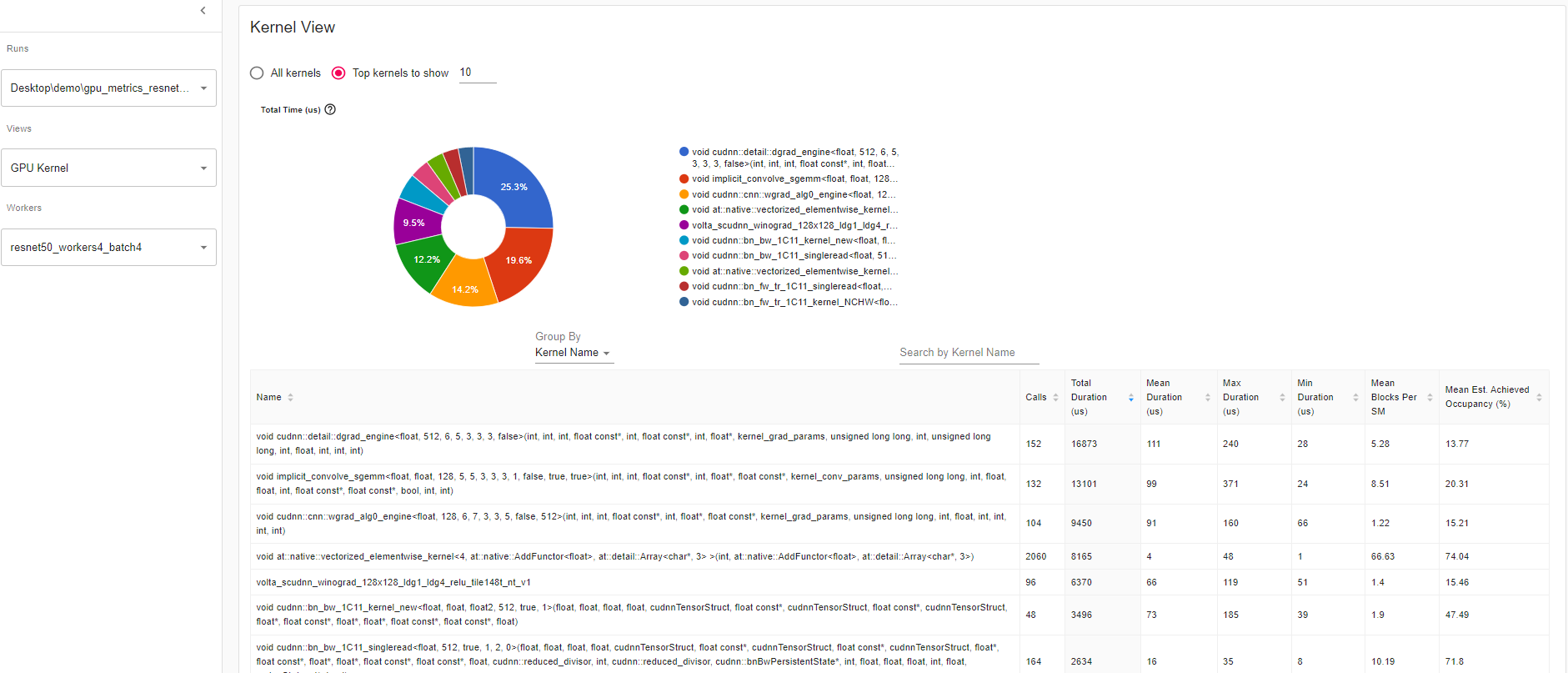

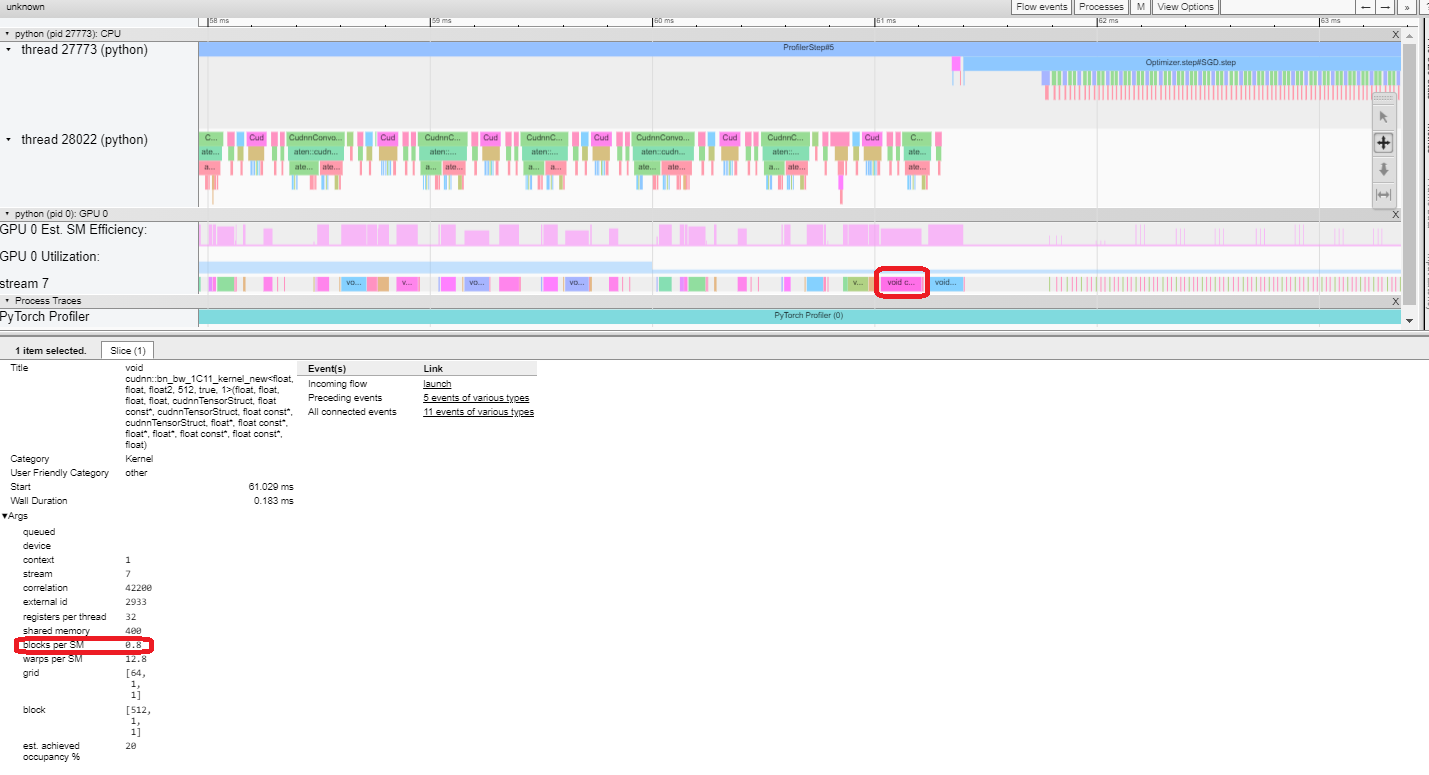

Kernel View:

The kernel view has “Blocks per SM” and “Est. Achieved Occupancy” columns, which are great tools for comparing model runs.

Mean Blocks per SM:

Blocks per SM = Blocks of this kernel / SM number of this GPU. If this number is less than 1, it indicates the GPU multiprocessors are not fully utilized. “Mean Blocks per SM” is the weighted average of all runs of this kernel name, using each run’s duration as the weight.
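As a worked example of the formula above, the sketch below computes “Blocks per SM” for two runs of the same kernel and then their duration-weighted mean. The helper names, block counts and durations are made up for illustration; the SM count of 80 is simply an example value.

```python
def blocks_per_sm(num_blocks, num_sms):
    """Blocks of this kernel divided by the SM number of this GPU."""
    return num_blocks / num_sms


def duration_weighted_mean(values, durations):
    """Weighted average of per-run values, using each run's duration as weight."""
    return sum(v * d for v, d in zip(values, durations)) / sum(durations)


NUM_SMS = 80  # example value for the GPU's SM count

# (blocks launched, duration in microseconds) for two runs of one kernel name.
runs = [(40, 100.0), (160, 300.0)]

per_run = [blocks_per_sm(blocks, NUM_SMS) for blocks, _ in runs]
mean_blocks_per_sm = duration_weighted_mean(per_run, [d for _, d in runs])
print(per_run, mean_blocks_per_sm)
```

The first run alone (0.5 blocks per SM) would suggest underutilized multiprocessors, but the longer second run dominates the weighted mean.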

Mean Est. Achieved Occupancy:

Est. Achieved Occupancy is defined as above in the overview. “Mean Est. Achieved Occupancy” is the weighted average of all runs of this kernel name, using each run’s duration as the weight.

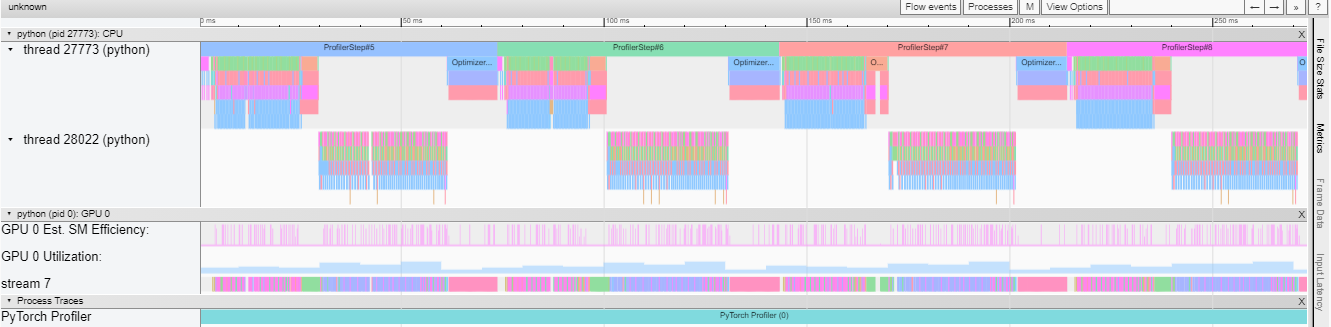

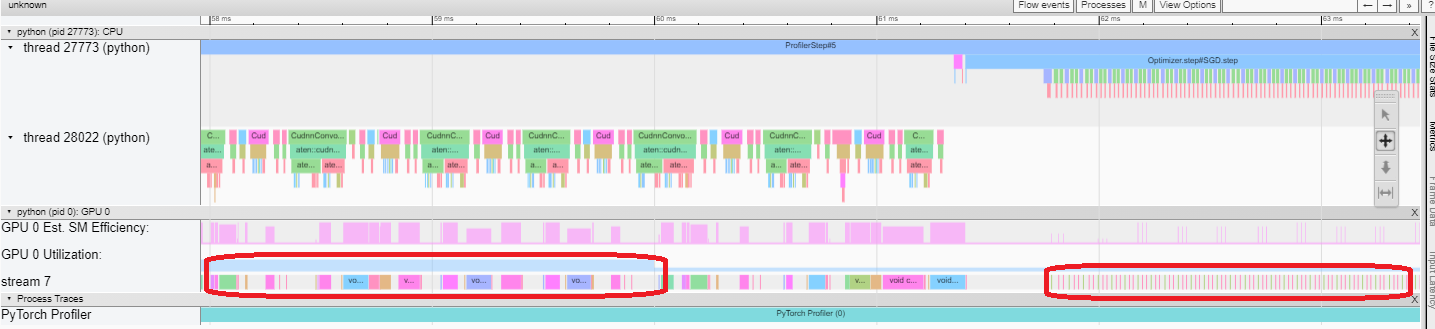

Trace View:

This trace view displays a timeline that shows the duration of operators in your model and which system executed the operation. This view can help you identify whether high consumption and long execution times are caused by input or by model training. Currently, this trace view shows GPU Utilization and Est. SM Efficiency on a timeline.

GPU utilization is calculated independently and divided into multiple 10 millisecond buckets. The buckets’ GPU utilization values are drawn alongside the timeline between 0 – 100%. In the above example, the “ProfilerStep5” GPU utilization during thread 28022’s busy time is higher than the one during the following “Optimizer.step”. This is where you can zoom in to investigate why that is.

From above, we can see the former’s kernels are longer than the latter’s kernels. The latter’s kernels are too short in execution, which results in lower GPU utilization.
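The bucketing described above can be sketched as follows. This is not the profiler's own implementation: it is a simplified model that assumes kernel intervals do not overlap one another, and the `bucketed_utilization` helper and the interval data are hypothetical. All times are integers in microseconds.

```python
def bucketed_utilization(kernel_intervals, trace_start, trace_end, bucket_us=10_000):
    """Fraction of each 10 ms bucket during which a kernel was running.

    Assumes non-overlapping (start, end) kernel intervals in microseconds.
    """
    num_buckets = -(-(trace_end - trace_start) // bucket_us)  # ceiling division
    busy = [0] * num_buckets
    for start, end in kernel_intervals:
        t = max(start, trace_start)
        end = min(end, trace_end)
        while t < end:
            idx = (t - trace_start) // bucket_us
            bucket_end = trace_start + (idx + 1) * bucket_us
            chunk_end = min(end, bucket_end)
            busy[idx] += chunk_end - t  # time this kernel spends in bucket idx
            t = chunk_end
    return [b / bucket_us for b in busy]


# Long kernels early in the trace, then one very short kernel.
kernels = [(0, 8_000), (9_000, 14_000), (25_000, 26_000)]
utilization = bucketed_utilization(kernels, trace_start=0, trace_end=30_000)
print(utilization)
```

The last bucket contains only a single short kernel, so its utilization is low, which mirrors the short-kernel case discussed above.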

Est. SM Efficiency: Each kernel has a calculated Est. SM Efficiency between 0 – 100%. For example, the kernel below has only 64 blocks, while this GPU has 80 SMs. Its “Est. SM Efficiency” is therefore 64/80, which is 0.8.
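The arithmetic in this example can be written as a one-line estimate. Capping the value at 1.0 is an assumption made here so the result stays within the stated 0 – 100% range; the function name is hypothetical.

```python
def est_sm_efficiency(num_blocks, num_sms):
    """Estimate the share of SMs a kernel can occupy, capped at 1.0 (assumed)."""
    return min(num_blocks / num_sms, 1.0)


example = est_sm_efficiency(64, 80)       # the kernel from the text
saturated = est_sm_efficiency(160, 80)    # more blocks than SMs
print(example, saturated)
```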

Cloud Storage Support

After running pip install tensorboard, you can have data read through these cloud providers by installing the matching extra:

pip install torch-tb-profiler[blob]

pip install torch-tb-profiler[gs]

pip install torch-tb-profiler[s3]

For more information, please refer to this README.

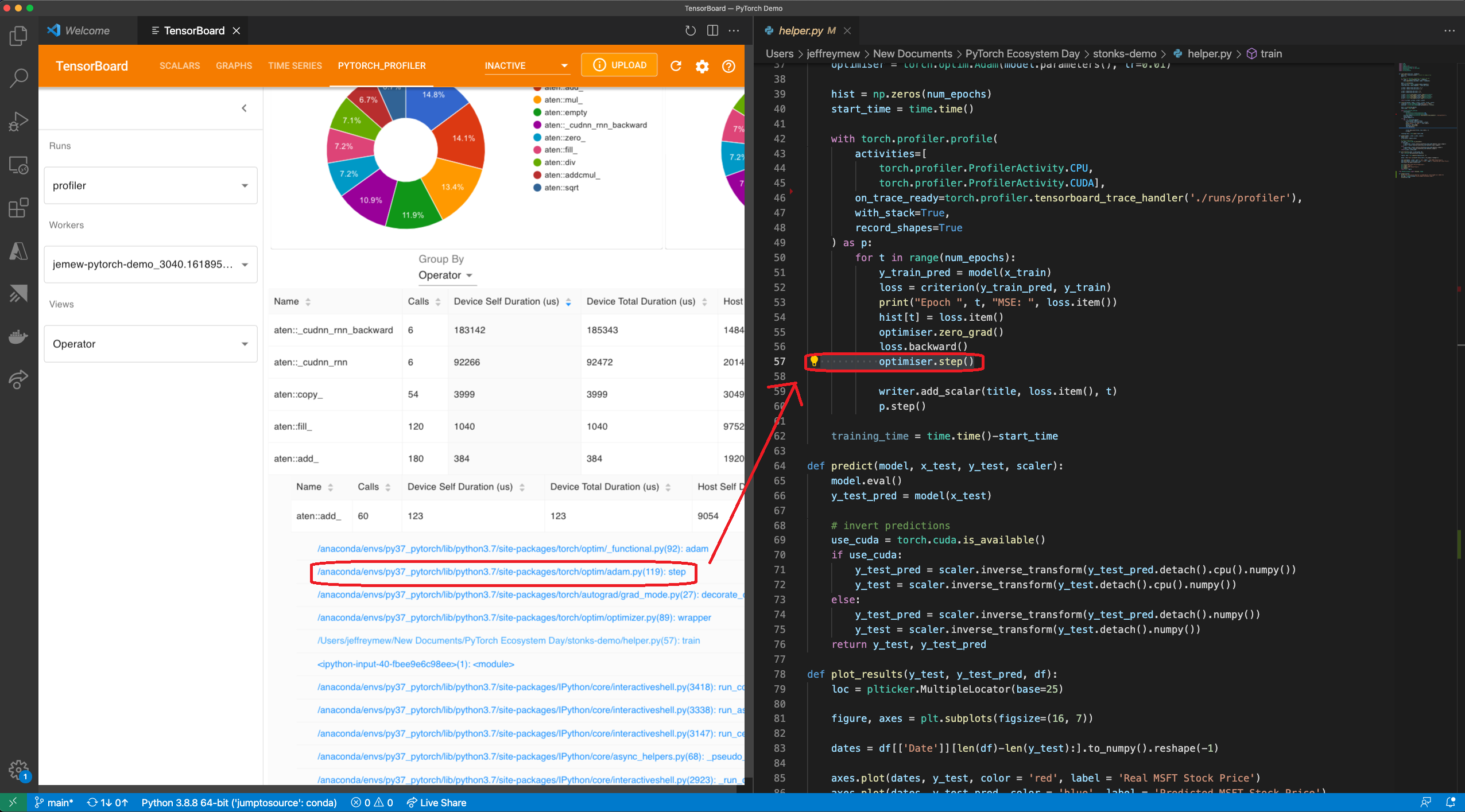

Jump to Source Code:

One of the great benefits of having both TensorBoard and the PyTorch Profiler integrated directly in Visual Studio Code (VS Code) is the ability to jump directly to the source code (file and line) from the profiler stack traces. The VS Code Python Extension now supports TensorBoard integration.

Jump to source is ONLY available when TensorBoard is launched within VS Code. Stack traces will appear in the plugin UI if profiling is run with with_stack=True. When you click on a stack trace from the PyTorch Profiler, VS Code will automatically open the corresponding file side by side and jump directly to the line of code of interest for you to debug. This allows you to quickly make actionable optimizations and changes to your code based on the profiling results and suggestions.

GIF: Jump to Source using Visual Studio Code Plug In UI

For how to optimize batch size performance, check out the step-by-step tutorial here. PyTorch Profiler is also integrated with PyTorch Lightning, and you can simply launch your Lightning training jobs with the --trainer.profiler=pytorch flag to generate the traces.

What’s Next for the PyTorch Profiler?

You just saw how PyTorch Profiler can help optimize a model. You can now try the Profiler by running pip install torch-tb-profiler to optimize your PyTorch model.

Look out for an advanced version of this tutorial in the future. We are also thrilled to continue to bring state-of-the-art tools to PyTorch users to improve ML performance. We’d love to hear from you. Feel free to open an issue here.

For new and exciting features coming up with PyTorch Profiler, follow @PyTorch on Twitter and check us out on pytorch.org.

Acknowledgements

The author would like to thank the contributions of the following individuals to this piece. From the Facebook side: Geeta Chauhan, Gisle Dankel, Woo Kim, Sam Farahzad, and Mark Saroufim. On the Microsoft side: AI Framework engineers (Teng Gao, Mike Guo, and Yang Gu), Guoliang Hua, and Thuy Nguyen.

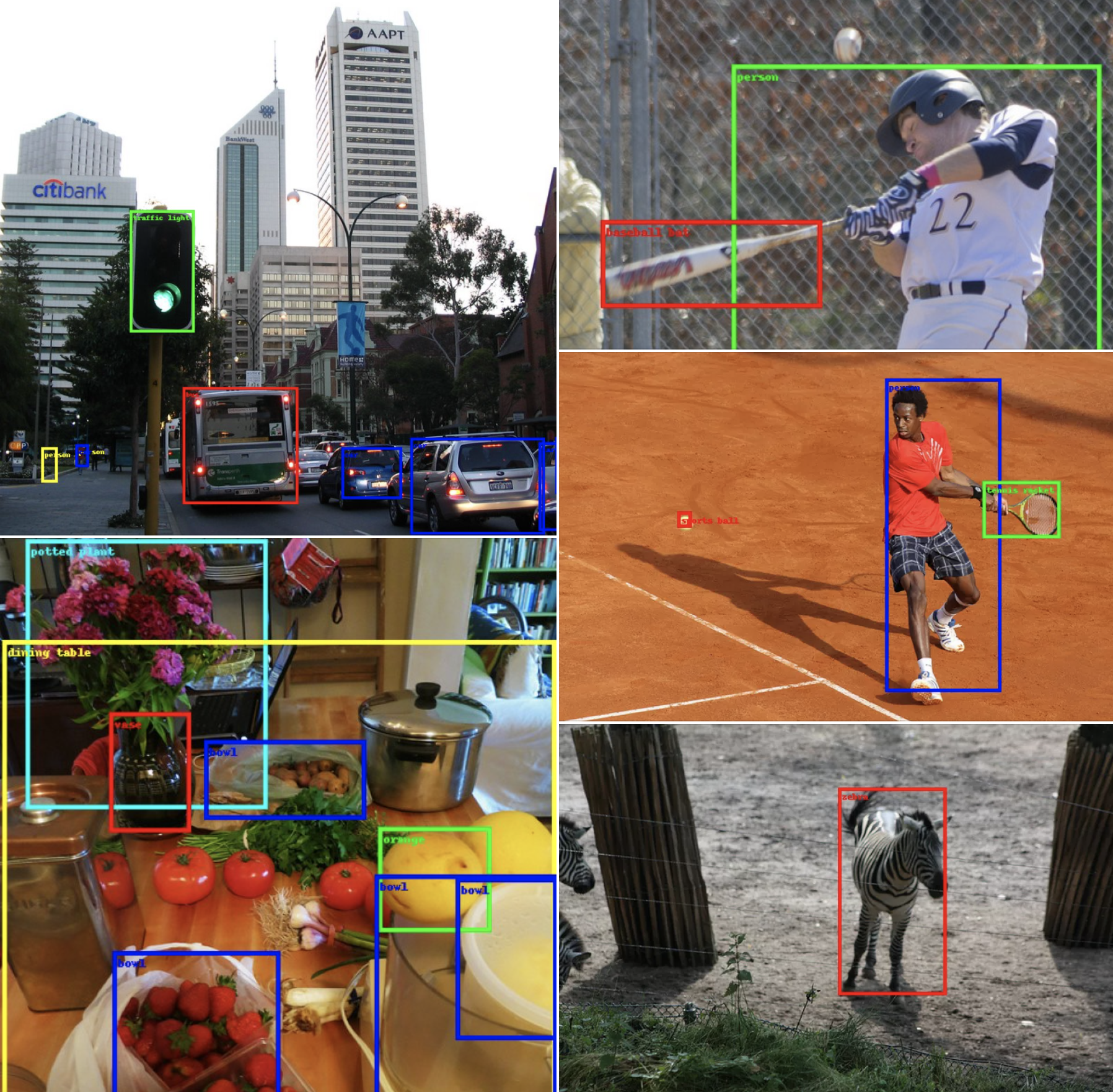

Everything You Need To Know About Torchvision’s SSDlite Implementation

27 Jun 2021, 12:02 am

In the previous article, we discussed how the SSD algorithm works, covered its implementation details and presented its training process. If you have not read the previous blog post, I encourage you to check it out before continuing.

In this part 2 of the series, we will focus on the mobile-friendly variant of SSD called SSDlite. Our plan is to first go through the main components of the algorithm highlighting the parts that differ from the original SSD, then discuss how the released model was trained and finally provide detailed benchmarks for all the new Object Detection models that we explored.

The SSDlite Network Architecture

SSDlite is an adaptation of SSD which was first briefly introduced in the MobileNetV2 paper and later reused in the MobileNetV3 paper. Because the main focus of the two papers was to introduce novel CNN architectures, most of the implementation details of SSDlite were not clarified. Our code follows all the details presented in the two papers and, where necessary, fills the gaps from the official implementation.

As noted before, SSD is a family of models because one can configure it with different backbones (such as VGG, MobileNetV3 etc) and different heads (such as using regular convolutions, separable convolutions etc). Thus many of the SSD components remain the same in SSDlite. Below we discuss only those that are different.

Classification and Regression Heads

Following Section 6.2 of the MobileNetV2 paper, SSDlite replaces the regular convolutions used in the original heads with separable convolutions. Consequently, our implementation introduces new heads that use 3×3 depthwise convolutions and 1×1 projections. Since all other components of the SSD method remain the same, to create an SSDlite model our implementation initializes the SSDlite head and passes it directly to the SSD constructor.
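To see why this replacement makes the heads lighter, the sketch below compares the weight count of a regular 3×3 convolution with that of a 3×3 depthwise convolution followed by a 1×1 projection. It counts convolution weights only (no biases or normalization layers), and the channel sizes are hypothetical values chosen for illustration, not the actual torchvision configuration.

```python
def regular_conv_params(in_channels, out_channels, kernel_size=3):
    """Weights in a regular convolution (bias ignored)."""
    return kernel_size * kernel_size * in_channels * out_channels


def separable_conv_params(in_channels, out_channels, kernel_size=3):
    """Weights in a depthwise conv plus a 1x1 projection (bias ignored)."""
    depthwise = kernel_size * kernel_size * in_channels  # one filter per input channel
    pointwise = in_channels * out_channels               # 1x1 projection
    return depthwise + pointwise


# Hypothetical classification head: 672 input channels, 6 anchors x 91 classes.
in_channels, out_channels = 672, 6 * 91

regular = regular_conv_params(in_channels, out_channels)
separable = separable_conv_params(in_channels, out_channels)
print(regular, separable, round(regular / separable, 2))
```

For these example sizes, the separable variant needs roughly nine times fewer weights, which is the kind of saving that makes the heads mobile-friendly.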

Backbone Feature Extractor

Our implementation introduces a new class for building MobileNet feature extractors. Following Section 6.3 of the MobileNetV3 paper, the backbone returns the output of the expansion layer of the Inverted Bottleneck block which has an output stride of 16, and the output of the layer just before the pooling which has an output stride of 32. Moreover, all extra blocks of the backbone are replaced with lightweight equivalents which use a 1×1 compression, a separable 3×3 convolution with stride 2 and a 1×1 expansion. Finally, to ensure that the heads have enough prediction power even when small width multipliers are used, the minimum depth size of all convolutions is controlled by the min_depth hyperparameter.
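The output strides and stride-2 extra blocks described above determine the spatial size of each feature map. The sketch below works this out under stated assumptions: a 320×320 input, four extra blocks, and a padding of 1 in each 3×3 stride-2 convolution. The number of extra blocks and the padding are assumptions made for illustration, and the helper names are hypothetical.

```python
def conv_output_size(size, kernel_size=3, stride=2, padding=1):
    """Standard convolution output-size formula for one spatial dimension."""
    return (size + 2 * padding - kernel_size) // stride + 1


def feature_map_sizes(input_size=320, backbone_strides=(16, 32), num_extra_blocks=4):
    """Spatial sizes of the backbone outputs followed by the extra blocks."""
    sizes = [input_size // stride for stride in backbone_strides]
    for _ in range(num_extra_blocks):
        # Each extra block halves the resolution via its stride-2 3x3 convolution.
        sizes.append(conv_output_size(sizes[-1]))
    return sizes


sizes = feature_map_sizes()
print(sizes)
```

Under these assumptions the detector predicts on progressively coarser grids, from 20×20 down to 1×1.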

The SSDlite320 MobileNetV3-Large model

This section discusses the configuration of the provided SSDlite pre-trained model along with the training processes followed to replicate the paper results as closely as possible.

Training process

All of the hyperparameters and scripts used to train the model on the COCO dataset can be found in our references folder. Here we discuss the most notable details of the training process.

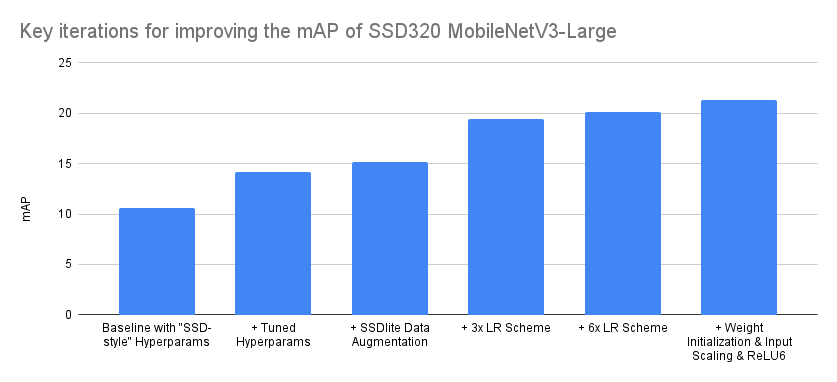

Tuned Hyperparameters

Though the papers don’t provide any information on the hyperparameters used for training the models (such as regularization, learning rate and the batch size), the parameters listed in the configuration files on the official repo were good starting points, and using cross validation we adjusted them to their optimal values. All the above gave us a significant boost over the baseline SSD configuration.

Data Augmentation